Tasmia Binte Sogir

When Not to Answer: Evaluating Prompts on GPT Models for Effective Abstention in Unanswerable Math Word Problems

Oct 16, 2024

Abstract:Large language models (LLMs) are increasingly relied upon to solve complex mathematical word problems. However, being susceptible to hallucination, they may generate inaccurate results when presented with unanswerable questions, raising concerns about their potential harm. While GPT models are now widely used and trusted, the exploration of how they can effectively abstain from answering unanswerable math problems and the enhancement of their abstention capabilities has not been rigorously investigated. In this paper, we investigate whether GPTs can appropriately respond to unanswerable math word problems by applying prompts typically used in solvable mathematical scenarios. Our experiments utilize the Unanswerable Word Math Problem (UWMP) dataset, directly leveraging GPT model APIs. Evaluation metrics are introduced, which integrate three key factors: abstention, correctness and confidence. Our findings reveal critical gaps in GPT models and the hallucination it suffers from for unsolvable problems, highlighting the need for improved models capable of better managing uncertainty and complex reasoning in math word problem-solving contexts.

"When Words Fail, Emojis Prevail": Generating Sarcastic Utterances with Emoji Using Valence Reversal and Semantic Incongruity

May 06, 2023

Abstract:Sarcasm pertains to the subtle form of language that individuals use to express the opposite of what is implied. We present a novel architecture for sarcasm generation with emoji from a non-sarcastic input sentence. We divide the generation task into two sub tasks: one for generating textual sarcasm and another for collecting emojis associated with those sarcastic sentences. Two key elements of sarcasm are incorporated into the textual sarcasm generation task: valence reversal and semantic incongruity with context, where the context may involve shared commonsense or general knowledge between the speaker and their audience. The majority of existing sarcasm generation works have focused on this textual form. However, in the real world, when written texts fall short of effectively capturing the emotional cues of spoken and face-to-face communication, people often opt for emojis to accurately express their emotions. Due to the wide range of applications of emojis, incorporating appropriate emojis to generate textual sarcastic sentences helps advance sarcasm generation. We conclude our study by evaluating the generated sarcastic sentences using human judgement. All the codes and data used in this study will be made publicly available.

Computational Sarcasm Analysis on Social Media: A Systematic Review

Sep 20, 2022

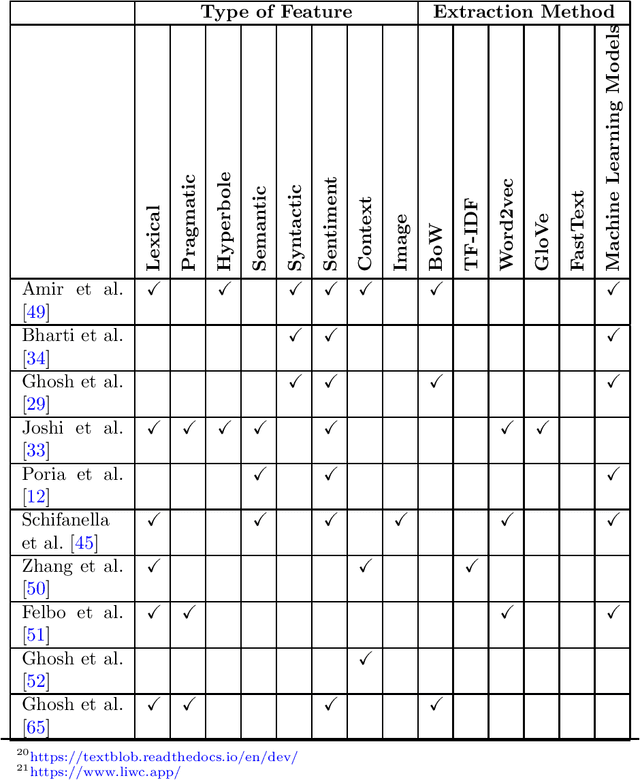

Abstract:Sarcasm can be defined as saying or writing the opposite of what one truly wants to express, usually to insult, irritate, or amuse someone. Because of the obscure nature of sarcasm in textual data, detecting it is difficult and of great interest to the sentiment analysis research community. Though the research in sarcasm detection spans more than a decade, some significant advancements have been made recently, including employing unsupervised pre-trained transformers in multimodal environments and integrating context to identify sarcasm. In this study, we aim to provide a brief overview of recent advancements and trends in computational sarcasm research for the English language. We describe relevant datasets, methodologies, trends, issues, challenges, and tasks relating to sarcasm that are beyond detection. Our study provides well-summarized tables of sarcasm datasets, sarcastic features and their extraction methods, and performance analysis of various approaches which can help researchers in related domains understand current state-of-the-art practices in sarcasm detection.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge