Tao Ruan Wan

Context-Aware Mixed Reality: A Framework for Ubiquitous Interaction

Mar 14, 2018

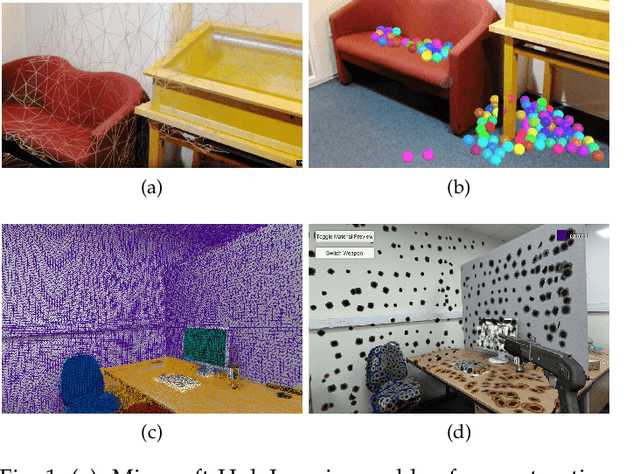

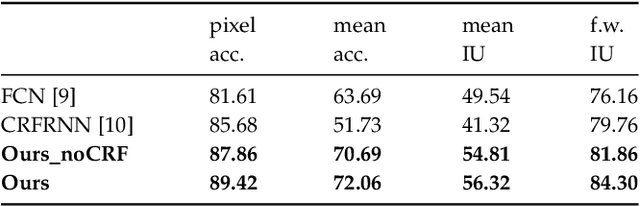

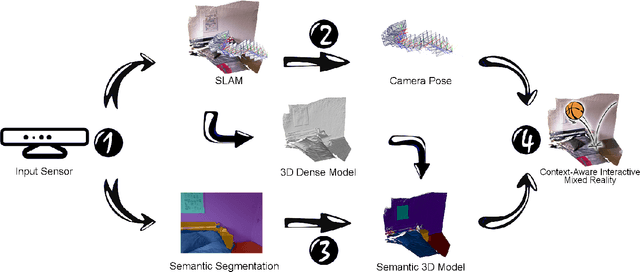

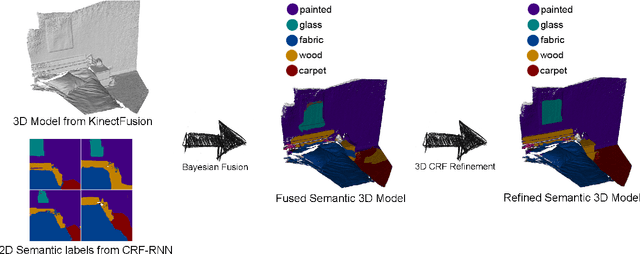

Abstract: Mixed Reality (MR) is a powerful interactive technology that yields new types of user experience. We present a semantic-based interactive MR framework that goes beyond current geometry-level approaches, a step change in generating high-level context-aware interactions. Our key insight is that building semantic understanding into MR not only greatly enhances user experience through object-specific behaviours, but also paves the way for solving complex interaction design challenges. The framework generates semantic properties of the real-world environment through dense scene reconstruction and deep image understanding. We demonstrate our approach with a material-aware prototype system that generates context-aware physical interactions between real and virtual objects. Quantitative and qualitative evaluations show that the framework delivers accurate and fast semantic information in an interactive MR environment, providing effective semantic-level interactions.

Augmented Reality for Depth Cues in Monocular Minimally Invasive Surgery

Mar 01, 2017

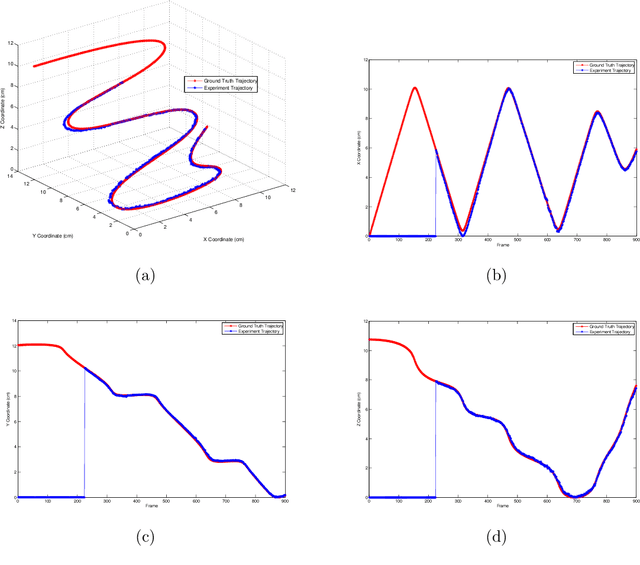

Abstract: One of the major challenges in Minimally Invasive Surgery (MIS) such as laparoscopy is the lack of depth perception. In recent years, laparoscopic scene tracking and surface reconstruction have been a focus of investigation, with the aim of providing rich additional information to aid the surgical process and compensate for the depth perception issue. However, robust 3D surface reconstruction and augmented reality with depth perception on the reconstructed scene are yet to be reported. This paper presents our work in this area. First, we adopt a state-of-the-art visual simultaneous localization and mapping (SLAM) framework, ORB-SLAM, and extend the algorithm for use in MIS scenes for reliable endoscopic camera tracking and salient point mapping. We then develop a robust global 3D surface reconstruction framework based on the sparse point clouds extracted from the SLAM framework. Our approach embeds an outlier removal filter within a Moving Least Squares smoothing algorithm and then employs Poisson surface reconstruction to obtain smooth surfaces from the unstructured sparse point cloud. We evaluate the proposed method quantitatively against ground-truth camera trajectories and the organ model surface used to render the synthetic simulation videos. Tests on in vivo laparoscopic videos demonstrate the robustness and accuracy of the proposed framework in both camera tracking and surface reconstruction, illustrating the potential of our algorithm for depth augmentation and depth-corrected augmented reality in MIS with monocular endoscopes.
