Syed Shah

Multi-Object Grasping -- Estimating the Number of Objects in a Robotic Grasp

Nov 30, 2021

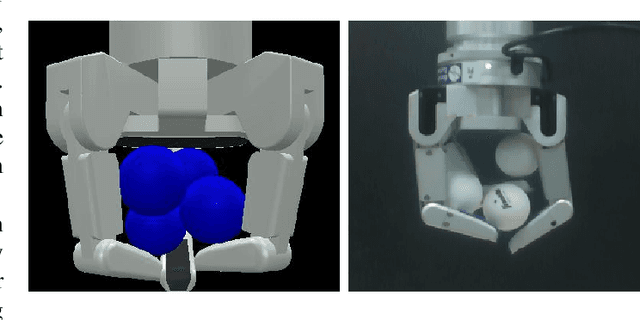

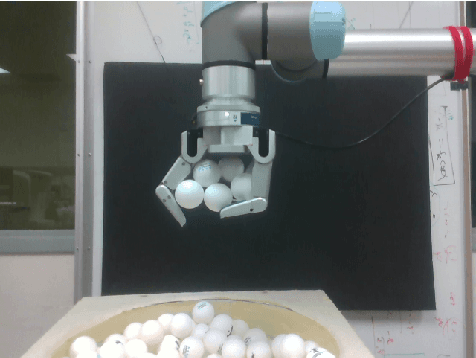

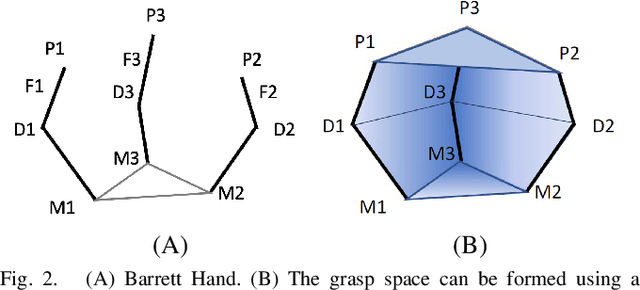

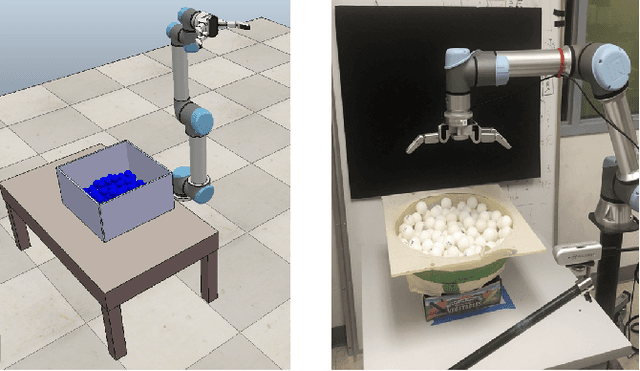

Abstract: A human hand can grasp a desired number of objects at once from a pile based solely on tactile sensing. To do the same, a robot needs to grasp within a pile, sense the number of objects in the grasp before lifting, and predict the number of objects that will remain in the grasp after lifting. This is a challenging problem because, at the time of prediction, the robotic hand is still in the pile and the objects in the grasp are not observable to vision systems. Moreover, some objects that are held by the hand before lifting may fall out of the grasp when the hand is lifted, because they were supported by other objects in the pile rather than by the fingers of the hand. Therefore, a robotic hand should sense the number of objects in a grasp using its tactile sensors before lifting. This paper presents novel multi-object grasp analysis methods for solving this problem. They include a grasp volume calculation, tactile force analysis, and a data-driven deep learning approach. The methods have been implemented on a Barrett hand and evaluated both in simulation and on a real robotic system. The evaluation results show that once the Barrett hand grasps multiple objects in the pile, the data-driven model can predict, before lifting, the number of objects that will remain in the hand after lifting. The root-mean-square errors for our approach are 0.74 for balls and 0.58 for cubes in simulation, and 1.06 for balls and 1.45 for cubes on the real system.
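For readers unfamiliar with the metric, the root-mean-square error reported above can be read as the typical size of the gap between the predicted and the actual number of objects remaining after a lift. The following is a minimal sketch of the standard RMSE formula only. The function name `rmse` and the example counts are hypothetical and are not taken from the paper's data or code.

```python
import math


def rmse(predicted, actual):
    """Root-mean-square error between two equal-length sequences of counts."""
    if len(predicted) != len(actual) or len(predicted) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    # Square each per-grasp error, average, then take the square root.
    squared_errors = [(p - a) ** 2 for p, a in zip(predicted, actual)]
    return math.sqrt(sum(squared_errors) / len(squared_errors))


# Hypothetical example: predicted vs. actual object counts for four grasps.
# These numbers are made up for illustration and are not results from the paper.
predicted_counts = [2, 3, 1, 4]
actual_counts = [2, 2, 1, 5]
print(rmse(predicted_counts, actual_counts))
```

Under this reading, an RMSE below 1 means the predictions are typically off by less than one object per grasp.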
