Sushmita Sarker

Learning Unified Representations of Normalcy for Time Series Anomaly Detection

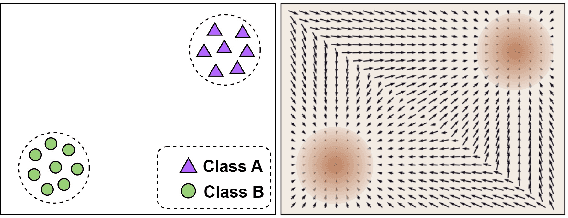

May 10, 2026

Abstract: The core challenge in unsupervised anomaly detection is identifying abnormal patterns without prior knowledge of their characteristics. While existing methods have addressed aspects of this problem, they often struggle to learn a robust representation of the normal data distribution that is distinct from anomalous patterns. In this paper, we present a novel framework, Unified Unsupervised Anomaly Detection ($\text{U}^2\text{AD}$), that comprehensively addresses anomaly detection in multivariate time series. Our approach learns the underlying data distribution of normal samples by utilizing score-based generative modeling. We introduce a novel time-dependent score network and a unified training objective that together delineate the manifold of normal data while considering both local and global temporal contexts. Reconstruction is then performed via a deterministic sampling process using an ordinary differential equation solver. Our extensive experimental evaluations demonstrate that $\text{U}^2\text{AD}$ not only outperforms current state-of-the-art methods in detection accuracy but also identifies anomalies at significantly earlier stages of their occurrence.
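The idea of reconstructing a sample by deterministic ODE-based sampling can be illustrated with a toy sketch. This is not the $\text{U}^2\text{AD}$ implementation: it assumes one-dimensional "normal" data following a Gaussian, so the score is known in closed form and no score network is needed. The constants `MU`, `S`, `T`, the noise schedule (variance equal to `t`), and the function names are all hypothetical choices for illustration. The input is treated directly as the state at time `T`, without adding noise, and the probability flow ODE is integrated back to `t = 0` with a plain Euler solver.

```python
MU, S = 0.0, 1.0   # assumed mean and std of the normal data (toy values)
T = 3.0            # assumed terminal time; added noise variance at time t is t


def score(x, t):
    """Analytic score of the noised density N(MU, S^2 + t).

    In a real system this would be a learned, time-dependent score network.
    """
    return -(x - MU) / (S * S + t)


def reconstruct(x, n_steps=1000):
    """Integrate the probability flow ODE dx/dt = -0.5 * g(t)^2 * score(x, t)
    from t = T back to t = 0 with Euler steps (here g(t)^2 = 1)."""
    dt = T / n_steps
    t = T
    for _ in range(n_steps):
        x = x + 0.5 * score(x, t) * dt  # one Euler step backward in time
        t -= dt
    return x


def anomaly_score(x):
    """Reconstruction error: distance between the input and its reconstruction."""
    return abs(x - reconstruct(x))


if __name__ == "__main__":
    for value in (0.1, 5.0):
        print(value, anomaly_score(value))
```

In this toy setting the exact ODE solution shrinks the distance to `MU` by the factor `S / sqrt(S**2 + T)`, which is 0.5 for the values above, so points far from the normal data receive proportionally larger reconstruction errors.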

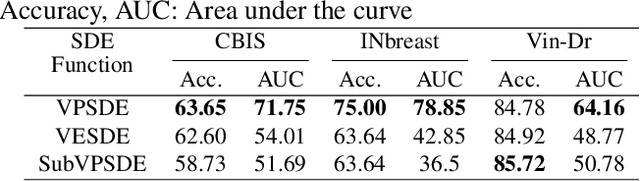

Can Score-Based Generative Modeling Effectively Handle Medical Image Classification?

Feb 24, 2025

Abstract: The remarkable success of deep learning in recent years has prompted applications in medical image classification and diagnosis tasks. While classification models have demonstrated robustness in classifying simpler datasets like MNIST or natural images such as ImageNet, this resilience is not consistently observed in complex medical image datasets where data is more scarce and lacks diversity. Moreover, previous findings on natural image datasets have indicated a potential trade-off between data likelihood and classification accuracy. In this study, we explore the use of score-based generative models as classifiers for medical images, specifically mammographic images. Our findings suggest that our proposed generative classifier model not only achieves superior classification results on CBIS-DDSM, INbreast and Vin-Dr Mammo datasets, but also introduces a novel approach to image classification in a broader context. Our code is publicly available at https://github.com/sushmitasarker/sgc_for_medical_image_classification
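The general principle behind a generative classifier can be sketched in a few lines: fit one density model per class, then assign a sample to the class under which it is most likely. The sketch below is only an illustration of that principle and is not the linked repository's method. It swaps the score-based model for a one-dimensional Gaussian per class, and the feature values, the class labels `benign` and `malignant`, and all function names are hypothetical.

```python
import math
from statistics import mean, pstdev


def fit_gaussian(samples):
    """Fit a 1-D Gaussian (mean, std) to the samples of one class."""
    return mean(samples), pstdev(samples)


def log_likelihood(x, mu, sigma):
    """Log density of N(mu, sigma^2) evaluated at x."""
    return -0.5 * math.log(2 * math.pi * sigma ** 2) - (x - mu) ** 2 / (2 * sigma ** 2)


def classify(x, class_models, log_priors):
    """Return the class with the highest log p(x | class) + log p(class)."""
    return max(
        class_models,
        key=lambda c: log_likelihood(x, *class_models[c]) + log_priors[c],
    )


# Hypothetical scalar features for two classes (illustrative values only).
train = {
    "benign": [1.0, 1.2, 0.8, 1.1, 0.9],
    "malignant": [3.0, 2.7, 3.3, 3.1, 2.9],
}
class_models = {label: fit_gaussian(xs) for label, xs in train.items()}
log_priors = {label: math.log(0.5) for label in train}  # assumed equal priors

if __name__ == "__main__":
    print(classify(1.05, class_models, log_priors))
    print(classify(2.8, class_models, log_priors))
```

A score-based generative classifier follows the same decision rule, but estimates the class-conditional likelihood with a far more expressive model than a single Gaussian.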

A comprehensive overview of deep learning techniques for 3D point cloud classification and semantic segmentation

May 20, 2024

Abstract: Point cloud analysis has a wide range of applications in many areas such as computer vision, robotic manipulation, and autonomous driving. While deep learning has achieved remarkable success on image-based tasks, there are many unique challenges faced by deep neural networks in processing massive, unordered, irregular and noisy 3D points. To stimulate future research, this paper analyzes recent progress in deep learning methods employed for point cloud processing and presents challenges and potential directions to advance this field. It serves as a comprehensive review of two major tasks in 3D point cloud processing, namely 3D shape classification and semantic segmentation.

* Published in Springer Nature (Machine Vision and Applications)

MV-Swin-T: Mammogram Classification with Multi-view Swin Transformer

Feb 26, 2024

Abstract: Traditional deep learning approaches for breast cancer classification have predominantly concentrated on single-view analysis. In clinical practice, however, radiologists concurrently examine all views within a mammography exam, leveraging the inherent correlations in these views to effectively detect tumors. Acknowledging the significance of multi-view analysis, some studies have introduced methods that independently process mammogram views, either through distinct convolutional branches or simple fusion strategies, inadvertently leading to a loss of crucial inter-view correlations. In this paper, we propose an innovative multi-view network exclusively based on transformers to address challenges in mammographic image classification. Our approach introduces a novel shifted window-based dynamic attention block, facilitating the effective integration of multi-view information and promoting the coherent transfer of this information between views at the spatial feature map level. Furthermore, we conduct a comprehensive comparative analysis of the performance and effectiveness of transformer-based models under diverse settings, employing the CBIS-DDSM and Vin-Dr Mammo datasets. Our code is publicly available at https://github.com/prithuls/MV-Swin-T

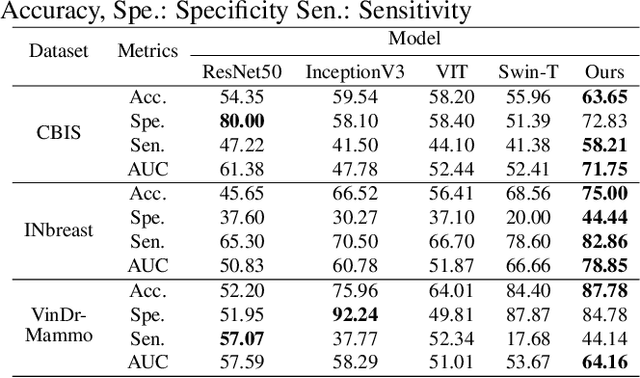

ConnectedUNets++: Mass Segmentation from Whole Mammographic Images

Nov 04, 2022

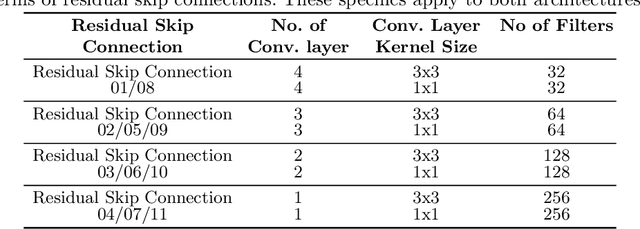

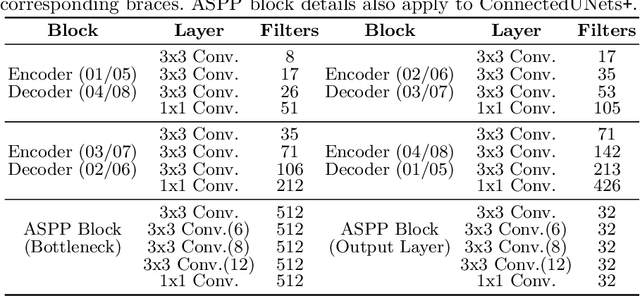

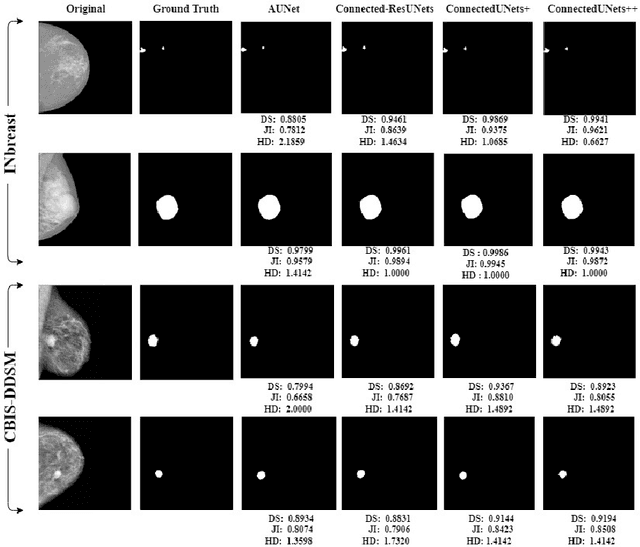

Abstract: Deep learning has made a breakthrough in medical image segmentation in recent years due to its ability to extract high-level features without the need for prior knowledge. In this context, U-Net is one of the most advanced medical image segmentation models, with promising results in mammography. Despite its excellent overall performance in segmenting multimodal medical images, the traditional U-Net structure appears to be inadequate in various ways. There are certain U-Net design modifications, such as MultiResUNet, Connected-UNets, and AU-Net, that have improved overall performance in areas where the conventional U-Net architecture appears to be deficient. Following the success of UNet and its variants, we have presented two enhanced versions of the Connected-UNets architecture: ConnectedUNets+ and ConnectedUNets++. In ConnectedUNets+, we have replaced the simple skip connections of Connected-UNets architecture with residual skip connections, while in ConnectedUNets++, we have modified the encoder-decoder structure along with employing residual skip connections. We have evaluated our proposed architectures on two publicly available datasets, the Curated Breast Imaging Subset of Digital Database for Screening Mammography (CBIS-DDSM) and INbreast.
