Stephen Scott

BEVERS: A General, Simple, and Performant Framework for Automatic Fact Verification

Mar 29, 2023Abstract:Automatic fact verification has become an increasingly popular topic in recent years and among datasets the Fact Extraction and VERification (FEVER) dataset is one of the most popular. In this work we present BEVERS, a tuned baseline system for the FEVER dataset. Our pipeline uses standard approaches for document retrieval, sentence selection, and final claim classification, however, we spend considerable effort ensuring optimal performance for each component. The results are that BEVERS achieves the highest FEVER score and label accuracy among all systems, published or unpublished. We also apply this pipeline to another fact verification dataset, Scifact, and achieve the highest label accuracy among all systems on that dataset as well. We also make our full code available.

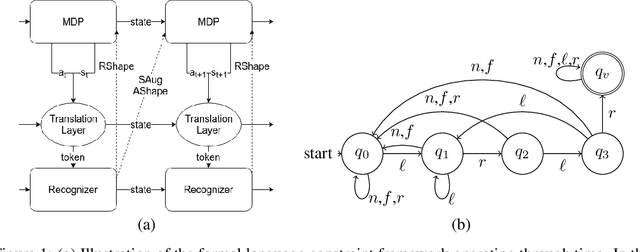

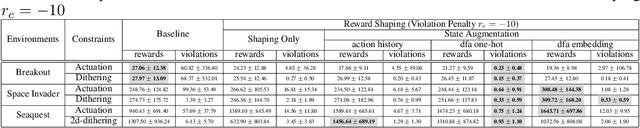

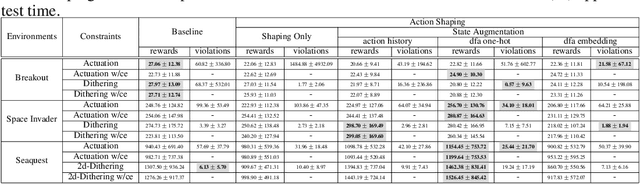

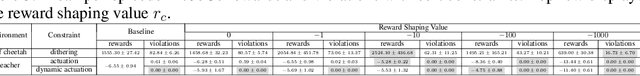

Formal Language Constraints for Markov Decision Processes

Oct 02, 2019

Abstract:In order to satisfy safety conditions, a reinforcement learned (RL) agent maybe constrained from acting freely, e.g., to prevent trajectories that might cause unwanted behavior or physical damage in a robot. We propose a general framework for augmenting a Markov decision process (MDP) with constraints that are described in formal languages over sequences of MDP states and agent actions. Constraint enforcement is implemented by filtering the allowed action set or by applying potential-based reward shaping to implement hard and soft constraint enforcement, respectively. We instantiate this framework using deterministic finite automata to encode constraints and propose methods of augmenting MDP observations with the state of the constraint automaton for learning. We empirically evaluate these methods with a variety of constraints by training Deep Q-Networks in Atari games as well as Proximal Policy Optimization in MuJoCo environments. We experimentally find that our approaches are effective in significantly reducing or eliminating constraint violations with either minimal negative or, depending on the constraint, a clear positive impact on final performance.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge