Stefano Romeo

Neural Network Training with Highly Incomplete Datasets

Jul 01, 2021

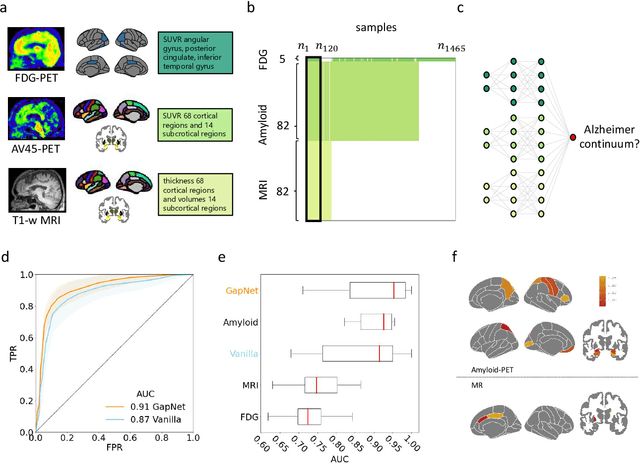

Abstract: Neural network training and validation rely on the availability of large high-quality datasets. However, in many cases only incomplete datasets are available, particularly in health care applications, where each patient typically undergoes different clinical procedures or can drop out of a study. Since the data used to train neural networks need to be complete, most studies either discard the incomplete datapoints, which reduces the size of the training data, or impute the missing features, which can lead to artefacts. Alas, both approaches are inadequate when a large portion of the data is missing. Here, we introduce GapNet, an alternative deep-learning training approach that can use highly incomplete datasets. First, the dataset is split into subsets of samples containing all values for a certain cluster of features. Then, these subsets are used to train individual neural networks. Finally, this ensemble of neural networks is combined into a single neural network whose training is fine-tuned using all complete datapoints. Using two highly incomplete real-world medical datasets, we show that GapNet improves the identification of patients with underlying Alzheimer's disease pathology and of patients at risk of hospitalization due to Covid-19. By distilling the information available in incomplete datasets without having to reduce their size or to impute missing values, GapNet will make it possible to extract valuable information from a wide range of datasets, benefiting diverse fields from medicine to engineering.
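The three training steps described in this abstract can be illustrated with a small, dependency-free sketch. It is not the authors' implementation and rests on assumptions not stated in the abstract: missing values are marked `None`, the task is binary classification with a cross-entropy loss, each per-cluster "network" is a single tanh hidden unit (the hypothetical class `SubNet`), and the merged network keeps the sub-networks' hidden units and adds one shared output unit whose weights are initialised from the per-cluster output heads before fine-tuning on the fully complete samples. The function `train_gapnet` is likewise a hypothetical name.

```python
import math
import random


def sigmoid(s):
    # Numerically stable logistic function
    if s >= 0:
        return 1.0 / (1.0 + math.exp(-s))
    e = math.exp(s)
    return e / (1.0 + e)


def complete_on(row, cols):
    # True if the sample has no missing value (None) in the given feature columns
    return all(row[c] is not None for c in cols)


class SubNet:
    """Hypothetical tiny sub-network: one tanh hidden unit over one feature cluster."""

    def __init__(self, cols, rng):
        self.cols = cols
        self.w = [rng.uniform(-0.5, 0.5) for _ in cols]
        self.b = 0.0
        # Output head (weight a, bias d), used only while pretraining on this cluster
        self.a, self.d = 1.0, 0.0

    def hidden(self, row):
        z = sum(wj * row[c] for wj, c in zip(self.w, self.cols)) + self.b
        return math.tanh(z)

    def backprop_hidden(self, row, h, grad_h, lr):
        # Chain rule through tanh: dL/dz = dL/dh * (1 - h^2), then one SGD step
        dz = grad_h * (1.0 - h * h)
        for j, c in enumerate(self.cols):
            self.w[j] -= lr * dz * row[c]
        self.b -= lr * dz


def train_gapnet(X, y, clusters, epochs=200, lr=0.1, seed=0):
    rng = random.Random(seed)
    nets = [SubNet(cols, rng) for cols in clusters]

    # Steps 1-2: train each sub-network only on samples complete for its cluster
    for net in nets:
        subset = [(r, t) for r, t in zip(X, y) if complete_on(r, net.cols)]
        for _ in range(epochs):
            for r, t in subset:
                h = net.hidden(r)
                g = sigmoid(net.a * h + net.d) - t  # dL/ds for binary cross-entropy
                grad_h = g * net.a                  # uses a before it is updated
                net.a -= lr * g * h
                net.d -= lr * g
                net.backprop_hidden(r, h, grad_h, lr)

    # Step 3: merge into one network whose output unit starts from the sub-network
    # heads, then fine-tune all weights on the samples that are complete everywhere
    v = [net.a for net in nets]
    bias = sum(net.d for net in nets) / len(nets)
    all_cols = [col for cols in clusters for col in cols]
    full = [(r, t) for r, t in zip(X, y) if complete_on(r, all_cols)]
    for _ in range(epochs):
        for r, t in full:
            hs = [net.hidden(r) for net in nets]
            g = sigmoid(sum(vk * hk for vk, hk in zip(v, hs)) + bias) - t
            for k, net in enumerate(nets):
                grad_h = g * v[k]  # gradient w.r.t. h_k, taken before v[k] changes
                v[k] -= lr * g * hs[k]
                net.backprop_hidden(r, hs[k], grad_h, lr)
            bias -= lr * g

    def predict(row):
        # Probability of the positive class for a sample with every cluster observed
        hs = [net.hidden(row) for net in nets]
        return sigmoid(sum(vk * hk for vk, hk in zip(v, hs)) + bias)

    return predict
```

In this sketch, `train_gapnet(X, y, clusters=[[0], [1, 2]])` would pretrain one sub-network on every row where feature 0 is present and another on every row where features 1 and 2 are present, so rows missing one cluster still contribute to training; only the final fine-tuning step is restricted to fully complete rows. The returned `predict` function expects every feature to be observed.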

Extracting quantitative biological information from brightfield cell images using deep learning

Dec 23, 2020

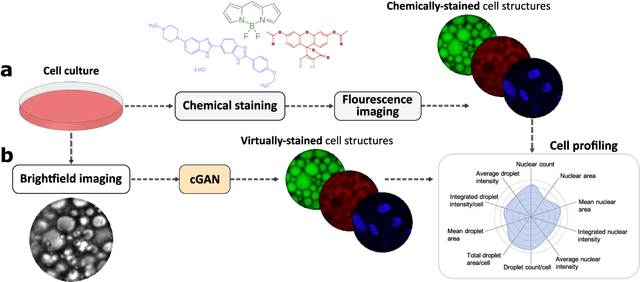

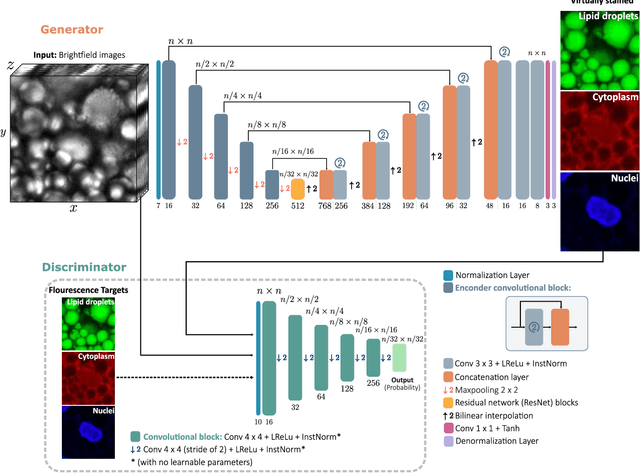

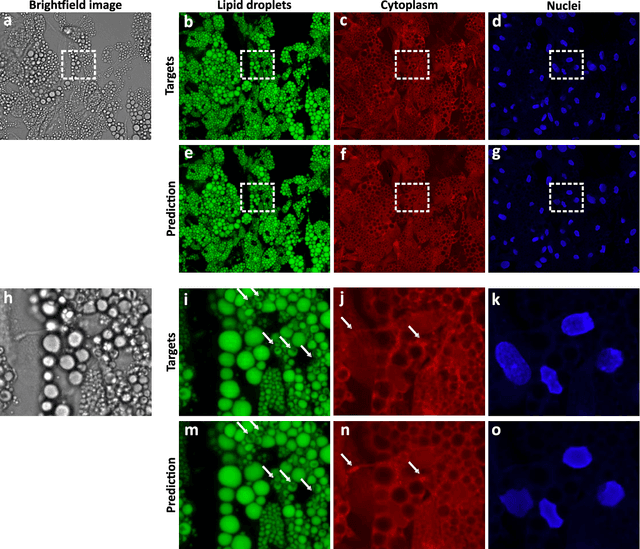

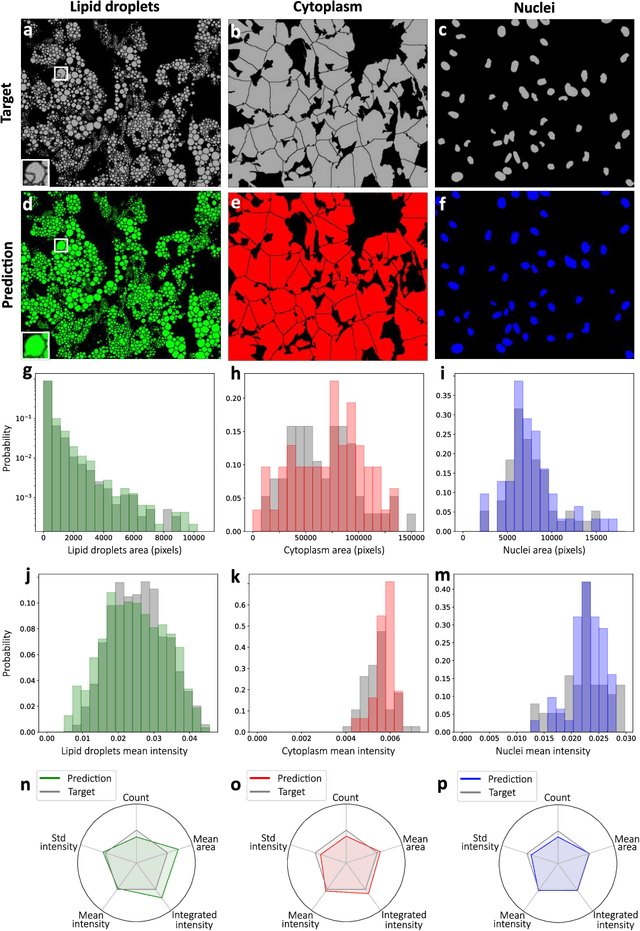

Abstract: Quantitative analysis of cell structures is essential for biomedical and pharmaceutical research. The standard imaging approach relies on fluorescence microscopy, where cell structures of interest are labeled by chemical staining techniques. However, these techniques are often invasive and sometimes even toxic to the cells, in addition to being time-consuming, labor-intensive, and expensive. Here, we introduce an alternative deep-learning-powered approach based on the analysis of brightfield images by a conditional generative adversarial neural network (cGAN). We show that this approach can extract information from the brightfield images to generate virtually stained images, which can be used in subsequent downstream quantitative analyses of cell structures. Specifically, we train a cGAN to virtually stain lipid droplets, cytoplasm, and nuclei using brightfield images of human stem-cell-derived fat cells (adipocytes), which are of particular interest for nanomedicine and vaccine development. Subsequently, we use these virtually stained images to extract quantitative measures of these cell structures. Generating virtually stained fluorescence images is less invasive, less expensive, and more reproducible than standard chemical staining; furthermore, it frees up the fluorescence microscopy channels for other analytical probes, thus increasing the amount of information that can be extracted from each cell.
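The abstract does not say which quantitative measures are extracted from the virtually stained images. As a hedged illustration of the kind of downstream analysis it refers to, the sketch below assumes that one virtually stained channel (for example, lipid droplets) is available as a 2D list of intensities, segments it with a fixed, hypothetical threshold and 4-connectivity, and reports per-object areas, the object count, the mean object area and the fraction of the image covered. The functions `measure_objects` and `summarize` are hypothetical names, not part of the described method.

```python
from collections import deque


def measure_objects(stain, threshold):
    """Label 4-connected pixels with intensity > threshold; return each object's area in pixels."""
    rows, cols = len(stain), len(stain[0])
    seen = [[False] * cols for _ in range(rows)]
    areas = []
    for r in range(rows):
        for c in range(cols):
            if seen[r][c] or stain[r][c] <= threshold:
                continue
            # Breadth-first flood fill of one object, starting at pixel (r, c)
            seen[r][c] = True
            queue, area = deque([(r, c)]), 0
            while queue:
                i, j = queue.popleft()
                area += 1
                for ni, nj in ((i + 1, j), (i - 1, j), (i, j + 1), (i, j - 1)):
                    if (0 <= ni < rows and 0 <= nj < cols
                            and not seen[ni][nj] and stain[ni][nj] > threshold):
                        seen[ni][nj] = True
                        queue.append((ni, nj))
            areas.append(area)
    return areas


def summarize(stain, threshold):
    # Simple per-image measures computed from the segmented objects
    areas = measure_objects(stain, threshold)
    n_pixels = len(stain) * len(stain[0])
    return {
        "count": len(areas),
        "mean_area": sum(areas) / len(areas) if areas else 0.0,
        "coverage": sum(areas) / n_pixels,
    }
```

A fixed global threshold keeps the example short; a real analysis of microscopy images might instead rely on an image-processing library and a threshold chosen from the data.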
