Sota Nishiyama

Precise Dynamics of Diagonal Linear Networks: A Unifying Analysis by Dynamical Mean-Field Theory

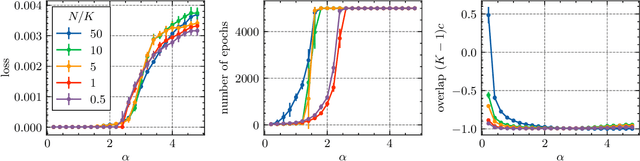

Oct 02, 2025Abstract:Diagonal linear networks (DLNs) are a tractable model that captures several nontrivial behaviors in neural network training, such as initialization-dependent solutions and incremental learning. These phenomena are typically studied in isolation, leaving the overall dynamics insufficiently understood. In this work, we present a unified analysis of various phenomena in the gradient flow dynamics of DLNs. Using Dynamical Mean-Field Theory (DMFT), we derive a low-dimensional effective process that captures the asymptotic gradient flow dynamics in high dimensions. Analyzing this effective process yields new insights into DLN dynamics, including loss convergence rates and their trade-off with generalization, and systematically reproduces many of the previously observed phenomena. These findings deepen our understanding of DLNs and demonstrate the effectiveness of the DMFT approach in analyzing high-dimensional learning dynamics of neural networks.

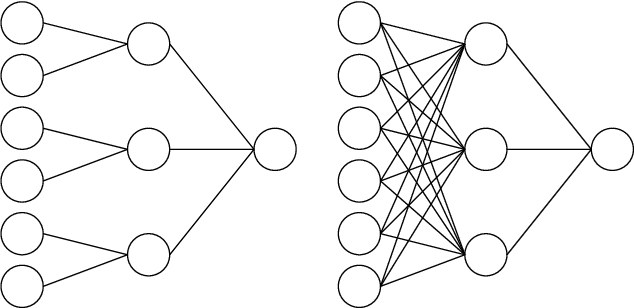

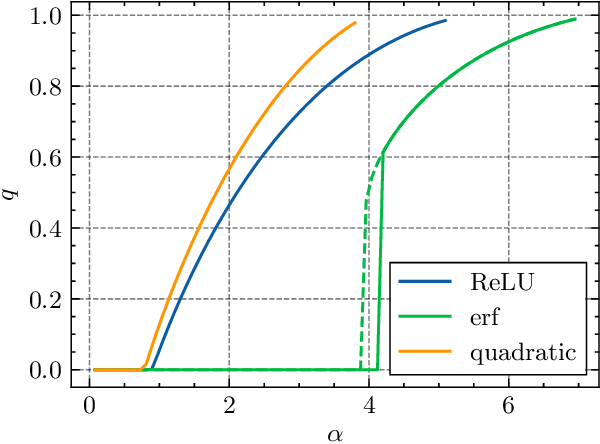

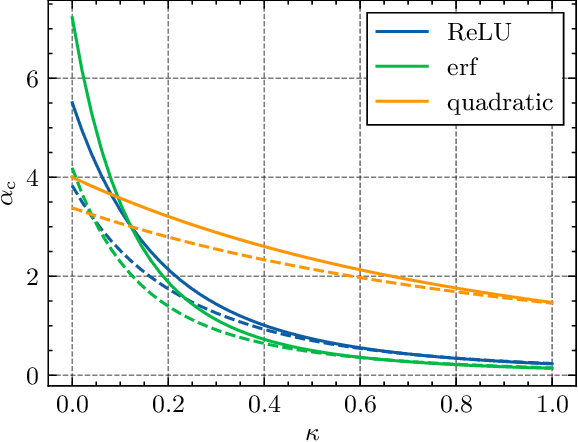

Solution space and storage capacity of fully connected two-layer neural networks with generic activation functions

Apr 20, 2024

Abstract:The storage capacity of a binary classification model is the maximum number of random input-output pairs per parameter that the model can learn. It is one of the indicators of the expressive power of machine learning models and is important for comparing the performance of various models. In this study, we analyze the structure of the solution space and the storage capacity of fully connected two-layer neural networks with general activation functions using the replica method from statistical physics. Our results demonstrate that the storage capacity per parameter remains finite even with infinite width and that the weights of the network exhibit negative correlations, leading to a 'division of labor'. In addition, we find that increasing the dataset size triggers a phase transition at a certain transition point where the permutation symmetry of weights is broken, resulting in the solution space splitting into disjoint regions. We identify the dependence of this transition point and the storage capacity on the choice of activation function. These findings contribute to understanding the influence of activation functions and the number of parameters on the structure of the solution space, potentially offering insights for selecting appropriate architectures based on specific objectives.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge