Soham Gandhi

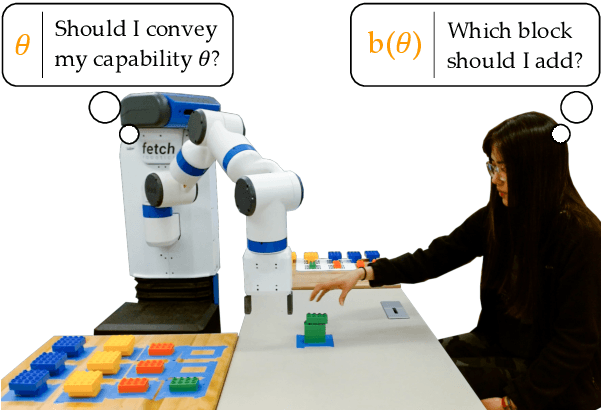

Should Collaborative Robots be Transparent?

Apr 23, 2023

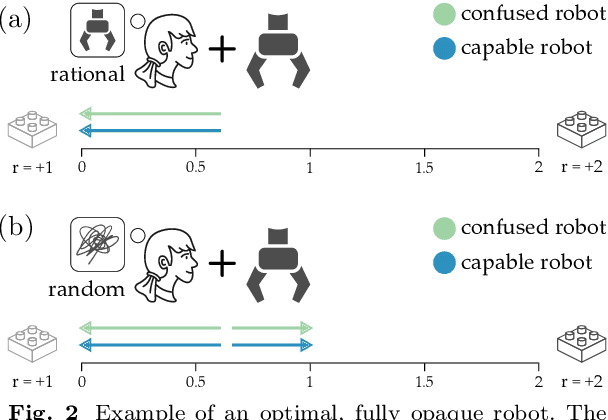

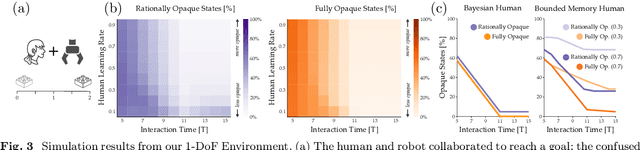

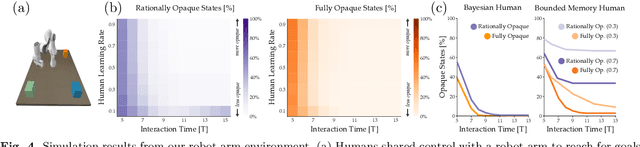

Abstract: Today's robots often assume that their behavior should be transparent. These transparent (e.g., legible, explainable) robots intentionally choose actions that convey their internal state to nearby humans. But while transparent behavior seems beneficial, is it actually optimal? In this paper we consider collaborative settings where the human and robot have the same objective, and the human is uncertain about the robot's type (i.e., the robot's internal state). We extend a recursive combination of Bayesian Nash equilibrium and the Bellman equation to solve for optimal robot policies. Interestingly, we discover that it is not always optimal for collaborative robots to be transparent; instead, human-robot teams can sometimes achieve higher rewards when the robot is opaque. Opaque robots select the same actions regardless of their internal state: because each type of opaque robot behaves in the same way, the human cannot infer the robot's type. Our analysis suggests that opaque behavior becomes optimal when either (a) human-robot interactions have a short time horizon or (b) users are slow to learn from the robot's actions. Across online and in-person user studies with 43 total participants, we find that users reach higher rewards when working with opaque partners, and subjectively rate opaque robots as about equal to transparent robots. See videos of our experiments here: https://youtu.be/u8q1Z7WHUuI
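The difference between transparent and opaque robots comes down to what a human can infer about the robot's type from its actions. The sketch below illustrates that inference with plain Bayes' rule. It is not the paper's solver, which combines Bayesian Nash equilibrium with the Bellman equation. The two robot types, the actions `left`/`right`, and the helper `posterior` are hypothetical and chosen only for illustration.

```python
from typing import Dict

# Hypothetical illustration: policy[robot_type][action] = P(action | robot_type).
Policy = Dict[str, Dict[str, float]]


def posterior(prior: Dict[str, float], policy: Policy, action: str) -> Dict[str, float]:
    """Human's belief over robot types after observing one action (Bayes' rule)."""
    # P(type | action) is proportional to P(action | type) * P(type).
    unnormalized = {t: policy[t].get(action, 0.0) * p for t, p in prior.items()}
    total = sum(unnormalized.values())
    if total == 0.0:
        raise ValueError(f"action {action!r} has zero probability under every type")
    return {t: w / total for t, w in unnormalized.items()}


# Hypothetical uniform prior over two robot types.
prior = {"type_a": 0.5, "type_b": 0.5}

# Transparent: each type picks a distinct action, so the action reveals the type.
transparent: Policy = {
    "type_a": {"left": 1.0},
    "type_b": {"right": 1.0},
}

# Opaque: every type picks the same action, so observing it carries no information.
opaque: Policy = {
    "type_a": {"left": 1.0},
    "type_b": {"left": 1.0},
}

if __name__ == "__main__":
    print(posterior(prior, transparent, "left"))  # {'type_a': 1.0, 'type_b': 0.0}
    print(posterior(prior, opaque, "left"))       # {'type_a': 0.5, 'type_b': 0.5}
```

Under the transparent policy, one observation collapses the human's belief onto the true type. Under the opaque policy, the posterior equals the prior. This snippet does not model rewards, horizons, or learning speed. Whether the information lost to opacity actually costs the team reward is the question the paper's analysis addresses.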
