Simon Allen-Raffl

A Hierarchical Descriptor Framework for On-the-Fly Anatomical Location Matching between Longitudinal Studies

Aug 11, 2023

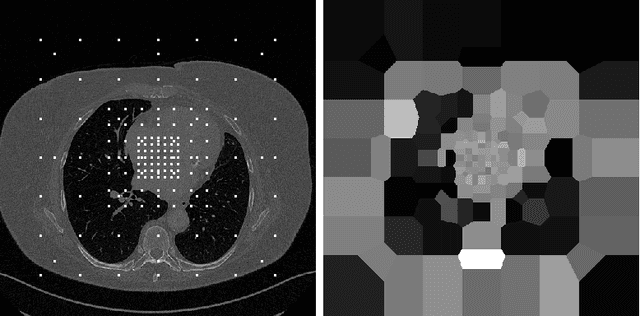

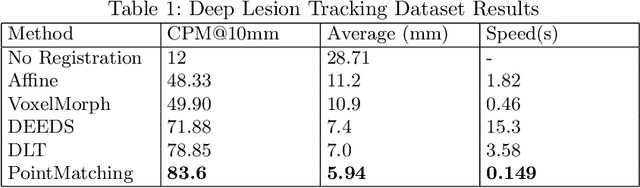

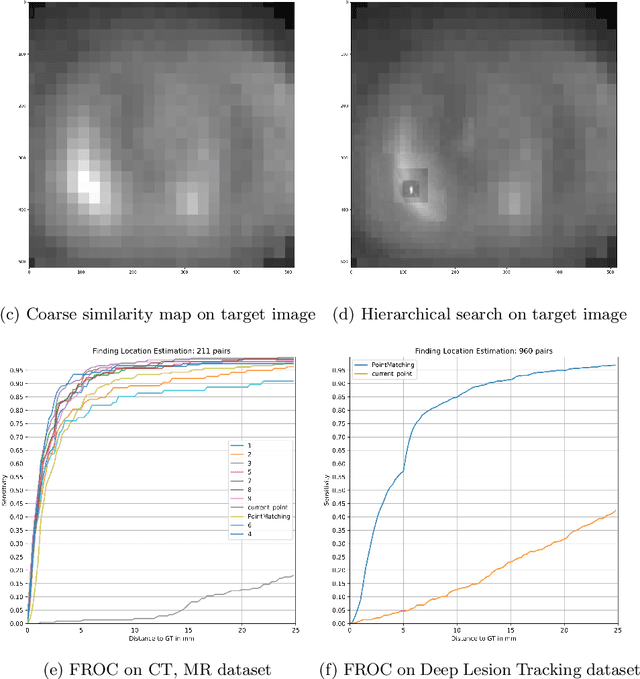

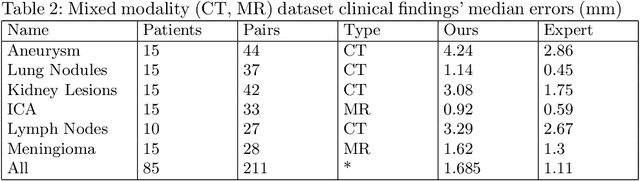

Abstract: We propose a method to match anatomical locations between pairs of medical images in longitudinal comparisons. Matching works by computing a descriptor of the query point in the source image from a hierarchical, sparse sampling of image intensities that encodes the point's location. A hierarchical search then finds the point in the target image with the most similar descriptor. This simple strategy reduces the time needed to map a point to the millisecond scale on a single CPU. Radiologists can therefore compare corresponding anatomical locations in near real time, without the extra architectural cost of precomputing or storing deformation fields from registrations. Our algorithm requires no prior training, resampling, segmentation, or affine transformation steps. We tested it on the annotations of the recently published Deep Lesion Tracking dataset and observed more accurate matching than Deep Lesion Tracker, while running 24 times faster than the most precise algorithm reported in that work. We also investigated matching accuracy on CT and MR images and compared the proposed algorithm against ground truth consolidated from multiple radiologists.
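
To make the two ideas in the abstract concrete, here is a minimal sketch in Python of (1) a descriptor built by sparsely sampling intensities at several scales around a query voxel and (2) a coarse-to-fine search for the target voxel whose descriptor is closest. This is not the authors' implementation. The scales `SCALES`, the 26-direction offset pattern `OFFSETS`, integer voxel lookup with border clamping, squared Euclidean distance, the greedy 27-neighbour refinement, and all function names are assumptions chosen for illustration.

```python
import itertools
from typing import List, Sequence, Tuple

# Assumed layout: a volume is a nested list indexed as vol[z][y][x].
Volume = List[List[List[float]]]
Point = Tuple[int, int, int]

# Hypothetical sampling radii in voxels, ordered coarse to fine.
SCALES: Tuple[int, ...] = (8, 4, 2, 1)
# Hypothetical sparse pattern: the 26 neighbours of a 3x3x3 grid, scaled per level.
OFFSETS = [o for o in itertools.product((-1, 0, 1), repeat=3) if o != (0, 0, 0)]


def clamp_point(p: Sequence[int], shape: Sequence[int]) -> Point:
    """Clamp a voxel index into the volume bounds."""
    return tuple(min(max(c, 0), n - 1) for c, n in zip(p, shape))


def shape_of(vol: Volume) -> Point:
    return (len(vol), len(vol[0]), len(vol[0][0]))


def descriptor(vol: Volume, p: Point, scales: Sequence[int] = SCALES) -> List[float]:
    """Concatenate the intensity at p with sparse samples taken at every scale."""
    shape = shape_of(vol)
    points = [p] + [
        (p[0] + s * dz, p[1] + s * dy, p[2] + s * dx)
        for s in scales
        for dz, dy, dx in OFFSETS
    ]
    values = []
    for q in points:
        z, y, x = clamp_point(q, shape)  # integer voxel lookup, clamped at the border
        values.append(vol[z][y][x])
    return values


def sq_distance(a: Sequence[float], b: Sequence[float]) -> float:
    return sum((u - v) ** 2 for u, v in zip(a, b))


def match(src: Volume, tgt: Volume, query: Point, scales: Sequence[int] = SCALES) -> Point:
    """Return the target voxel whose descriptor is closest to the query's descriptor."""
    ref = descriptor(src, query, scales)
    shape = shape_of(tgt)

    def cost(c: Point) -> float:
        return sq_distance(ref, descriptor(tgt, c, scales))

    # Coarse level: score every scales[0]-th voxel along each axis of the target.
    step = scales[0]
    grid = itertools.product(*(range(0, n, step) for n in shape))
    best = min(grid, key=cost)

    # Finer levels: halve the step and greedily re-score the 27 points around the best.
    while step > 1:
        step //= 2
        around = {
            clamp_point((best[0] + step * dz, best[1] + step * dy, best[2] + step * dx), shape)
            for dz, dy, dx in itertools.product((-1, 0, 1), repeat=3)
        }
        best = min(sorted(around), key=cost)
    return best
```

In this sketch the coarse level scores only every eighth voxel per axis and each finer level only 27 candidates, which keeps the number of descriptor comparisons far below an exhaustive search. The greedy refinement can settle on a local optimum if the coarse winner lies far from the true match, and real CT or MR scans would also need handling of physical voxel spacing and intensity differences between studies, which is omitted here.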
