Shawn L. Beaulieu

Glamour muscles: why having a body is not what it means to be embodied

Jul 17, 2023

Abstract:Embodiment has recently enjoyed renewed consideration as a means to amplify the faculties of smart machines. Proponents of embodiment seem to imply that optimizing for movement in physical space promotes something more than the acquisition of niche capabilities for solving problems in physical space. However, there is nothing in principle which should so distinguish the problem of action selection in physical space from the problem of action selection in more abstract spaces, like that of language. Rather, what makes embodiment persuasive as a means toward higher intelligence is that it promises to capture, but does not actually realize, contingent facts about certain bodies (living intelligence) and the patterns of activity associated with them. These include an active resistance to annihilation and revisable constraints on the processes that make the world intelligible. To be theoretically or practically useful beyond the creation of niche tools, we argue that "embodiment" cannot be the trivial fact of a body, nor its movement through space, but the perpetual negotiation of the function, design, and integrity of that body$\unicode{x2013}$that is, to participate in what it means to $\textit{constitute}$ a given body. It follows that computer programs which are strictly incapable of traversing physical space might, under the right conditions, be more embodied than a walking, talking robot.

Continual learning under domain transfer with sparse synaptic bursting

Aug 26, 2021

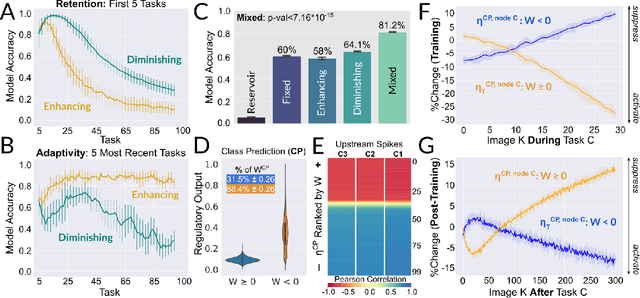

Abstract:Existing machines are functionally specific tools that were made for easy prediction and control. Tomorrow's machines may be closer to biological systems in their mutability, resilience, and autonomy. But first they must be capable of learning, and retaining, new information without repeated exposure to it. Past efforts to engineer such systems have sought to build or regulate artificial neural networks using task-specific modules with constrained circumstances of application. This has not yet enabled continual learning over long sequences of previously unseen data without corrupting existing knowledge: a problem known as catastrophic forgetting. In this paper, we introduce a system that can learn sequentially over previously unseen datasets (ImageNet, CIFAR-100) with little forgetting over time. This is accomplished by regulating the activity of weights in a convolutional neural network on the basis of inputs using top-down modulation generated by a second feed-forward neural network. We find that our method learns continually under domain transfer with sparse bursts of activity in weights that are recycled across tasks, rather than by maintaining task-specific modules. Sparse synaptic bursting is found to balance enhanced and diminished activity in a way that facilitates adaptation to new inputs without corrupting previously acquired functions. This behavior emerges during a prior meta-learning phase in which regulated synapses are selectively disinhibited, or grown, from an initial state of uniform suppression.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge