Savina Dine Kim

The Double-Edged Sword of Big Data and Information Technology for the Disadvantaged: A Cautionary Tale from Open Banking

Aug 01, 2023

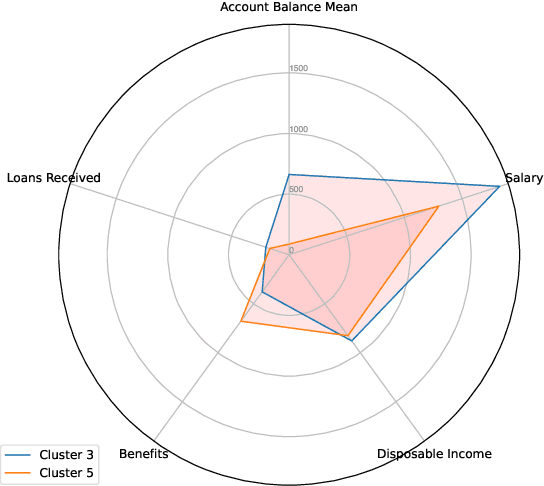

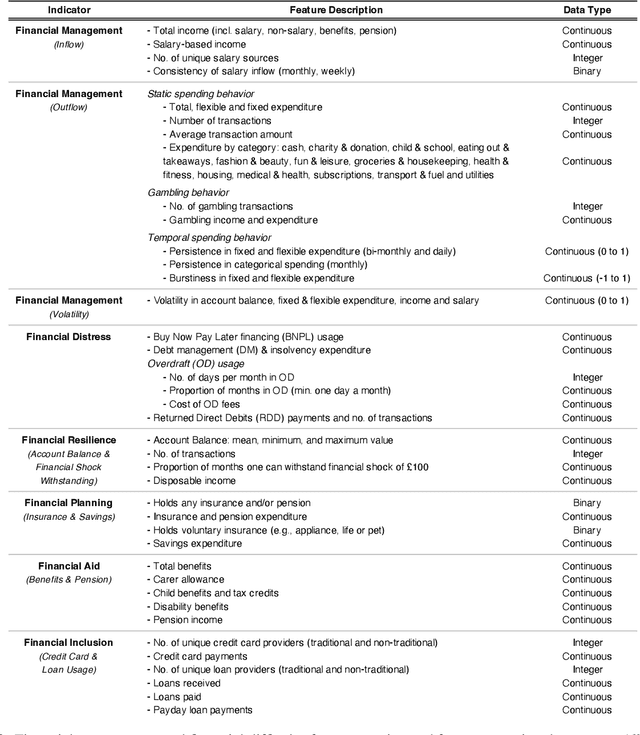

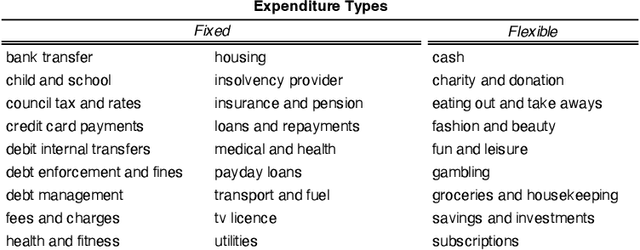

Abstract:This research article analyses and demonstrates the hidden implications for fairness of seemingly neutral data coupled with powerful technology, such as machine learning (ML), using Open Banking as an example. Open Banking has ignited a revolution in financial services, opening new opportunities for customer acquisition, management, retention, and risk assessment. However, the granularity of transaction data holds potential for harm where unnoticed proxies for sensitive and prohibited characteristics may lead to indirect discrimination. Against this backdrop, we investigate the dimensions of financial vulnerability (FV), a global concern resulting from COVID-19 and rising inflation. Specifically, we look to understand the behavioral elements leading up to FV and its impact on at-risk, disadvantaged groups through the lens of fair interpretation. Using a unique dataset from a UK FinTech lender, we demonstrate the power of fine-grained transaction data while simultaneously cautioning its safe usage. Three ML classifiers are compared in predicting the likelihood of FV, and groups exhibiting different magnitudes and forms of FV are identified via clustering to highlight the effects of feature combination. Our results indicate that engineered features of financial behavior can be predictive of omitted personal information, particularly sensitive or protected characteristics, shedding light on the hidden dangers of Open Banking data. We discuss the implications and conclude fairness via unawareness is ineffective in this new technological environment.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge