Saurabh Amarnath Mahindre

Consensus Based Multi-Layer Perceptrons for Edge Computing

Feb 09, 2021

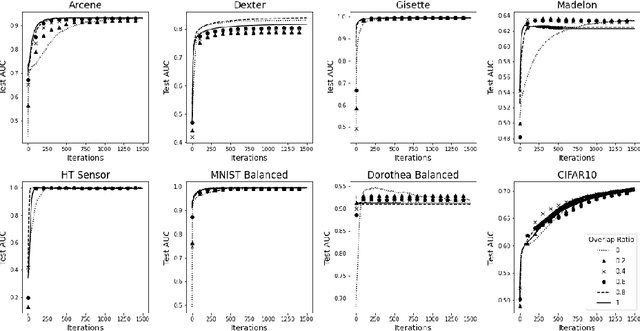

Abstract:In recent years, storing large volumes of data on distributed devices has become commonplace. Applications involving sensors, for example, capture data in different modalities including image, video, audio, GPS and others. Novel algorithms are required to learn from this rich distributed data. In this paper, we present consensus based multi-layer perceptrons for resource-constrained devices. Assuming nodes (devices) in the distributed system are arranged in a graph and contain vertically partitioned data, the goal is to learn a global function that minimizes the loss. Each node learns a feed-forward multi-layer perceptron and obtains a loss on data stored locally. It then gossips with a neighbor, chosen uniformly at random, and exchanges information about the loss. The updated loss is used to run a back propagation algorithm and adjust weights appropriately. This method enables nodes to learn the global function without exchange of data in the network. Empirical results reveal that the consensus algorithm converges to the centralized model and has performance comparable to centralized multi-layer perceptrons and tree-based algorithms including random forests and gradient boosted decision trees.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge