Sanjai Rayadurgam

Manifold-based Test Generation for Image Classifiers

Feb 15, 2020

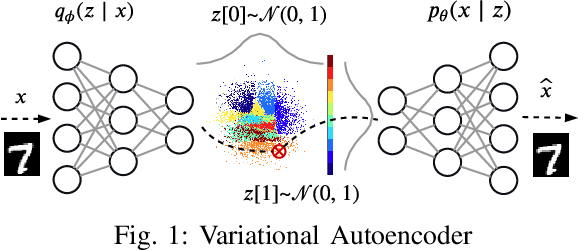

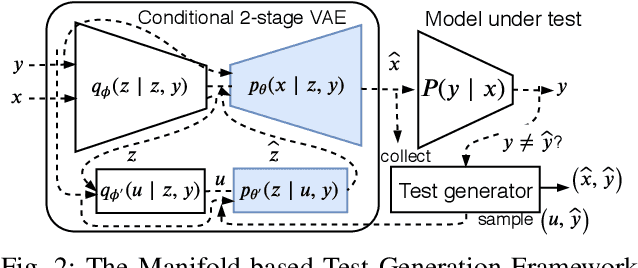

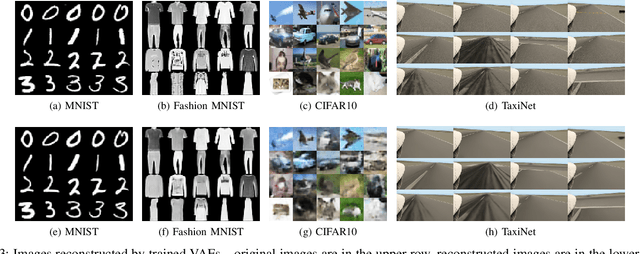

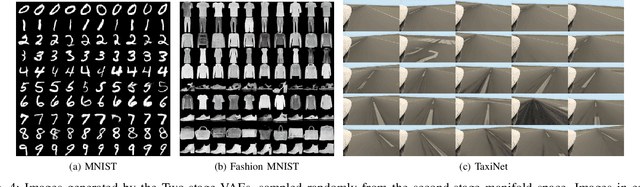

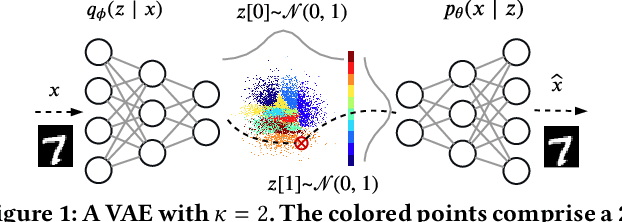

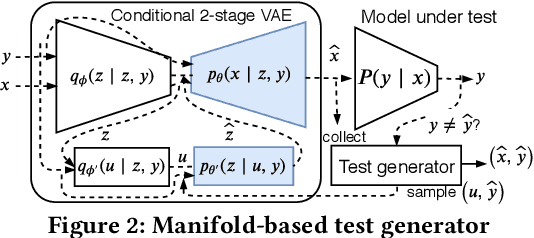

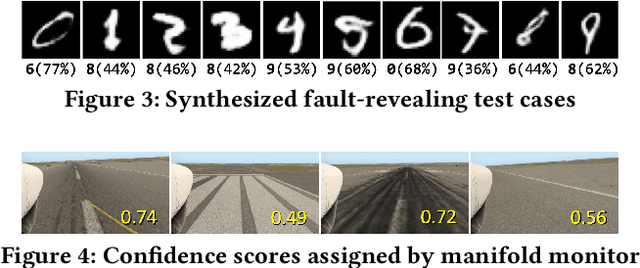

Abstract:Neural networks used for image classification tasks in critical applications must be tested with sufficient realistic data to assure their correctness. To effectively test an image classification neural network, one must obtain realistic test data adequate enough to inspire confidence that differences between the implicit requirements and the learned model would be exposed. This raises two challenges: first, an adequate subset of the data points must be carefully chosen to inspire confidence, and second, the implicit requirements must be meaningfully extrapolated to data points beyond those in the explicit training set. This paper proposes a novel framework to address these challenges. Our approach is based on the premise that patterns in a large input data space can be effectively captured in a smaller manifold space, from which similar yet novel test cases---both the input and the label---can be sampled and generated. A variant of Conditional Variational Autoencoder (CVAE) is used for capturing this manifold with a generative function, and a search technique is applied on this manifold space to efficiently find fault-revealing inputs. Experiments show that this approach enables generation of thousands of realistic yet fault-revealing test cases efficiently even for well-trained models.

Manifold for Machine Learning Assurance

Feb 08, 2020

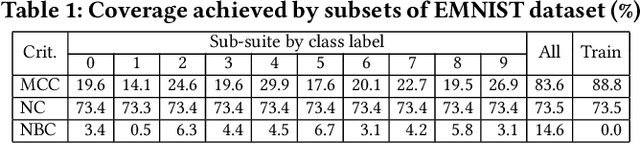

Abstract:The increasing use of machine-learning (ML) enabled systems in critical tasks fuels the quest for novel verification and validation techniques yet grounded in accepted system assurance principles. In traditional system development, model-based techniques have been widely adopted, where the central premise is that abstract models of the required system provide a sound basis for judging its implementation. We posit an analogous approach for ML systems using an ML technique that extracts from the high-dimensional training data implicitly describing the required system, a low-dimensional underlying structure--a manifold. It is then harnessed for a range of quality assurance tasks such as test adequacy measurement, test input generation, and runtime monitoring of the target ML system. The approach is built on variational autoencoder, an unsupervised method for learning a pair of mutually near-inverse functions between a given high-dimensional dataset and a low-dimensional representation. Preliminary experiments establish that the proposed manifold-based approach, for test adequacy drives diversity in test data, for test generation yields fault-revealing yet realistic test cases, and for runtime monitoring provides an independent means to assess trustability of the target system's output.

Input Prioritization for Testing Neural Networks

Jan 11, 2019

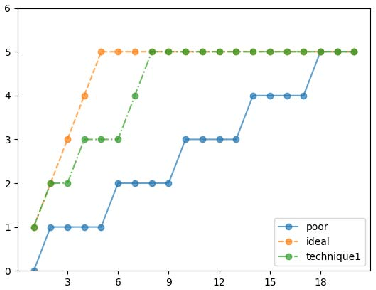

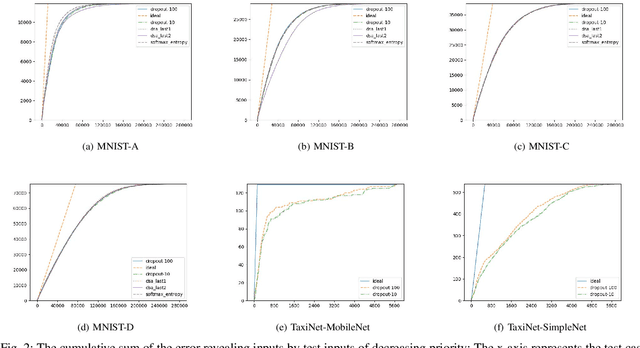

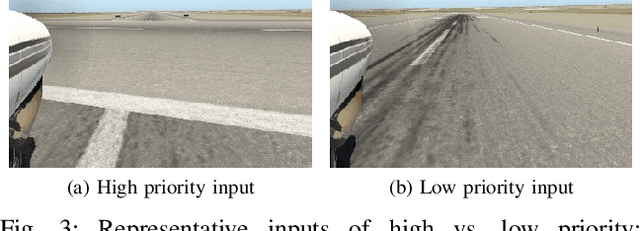

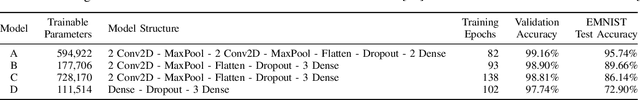

Abstract:Deep neural networks (DNNs) are increasingly being adopted for sensing and control functions in a variety of safety and mission-critical systems such as self-driving cars, autonomous air vehicles, medical diagnostics, and industrial robotics. Failures of such systems can lead to loss of life or property, which necessitates stringent verification and validation for providing high assurance. Though formal verification approaches are being investigated, testing remains the primary technique for assessing the dependability of such systems. Due to the nature of the tasks handled by DNNs, the cost of obtaining test oracle data---the expected output, a.k.a. label, for a given input---is high, which significantly impacts the amount and quality of testing that can be performed. Thus, prioritizing input data for testing DNNs in meaningful ways to reduce the cost of labeling can go a long way in increasing testing efficacy. This paper proposes using gauges of the DNN's sentiment derived from the computation performed by the model, as a means to identify inputs that are likely to reveal weaknesses. We empirically assessed the efficacy of three such sentiment measures for prioritization---confidence, uncertainty, and surprise---and compare their effectiveness in terms of their fault-revealing capability and retraining effectiveness. The results indicate that sentiment measures can effectively flag inputs that expose unacceptable DNN behavior. For MNIST models, the average percentage of inputs correctly flagged ranged from 88% to 94.8%.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge