Salma Haidar

Enhancing Hyperspectral Image Prediction with Contrastive Learning in Low-Label Regime

Oct 10, 2024

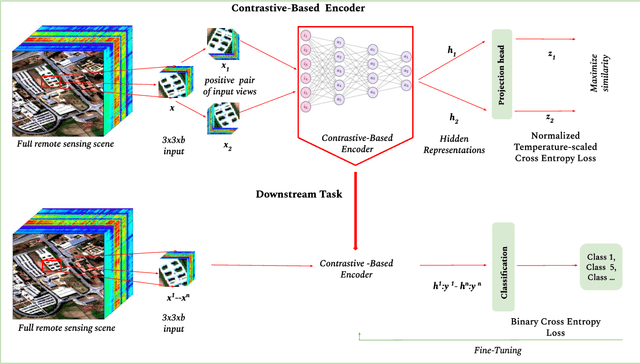

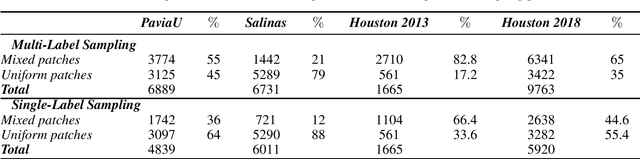

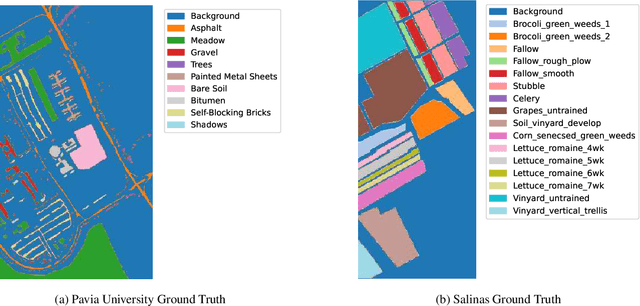

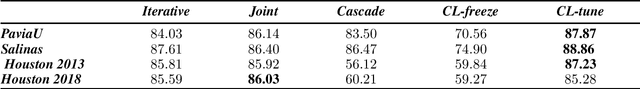

Abstract: Self-supervised contrastive learning is an effective approach for addressing the challenge of limited labelled data. This study builds upon a previously established two-stage, patch-level, multi-label classification method for hyperspectral remote sensing imagery. We evaluate the method's performance on both single-label and multi-label classification tasks, particularly under scenarios of limited training data. The methodology unfolds in two stages. First, we train an encoder and a projection network using a contrastive learning approach. This step is crucial for enhancing the encoder's ability to discern patterns within the unlabelled data. Next, we employ the pre-trained encoder to guide the training of two distinct predictors: one for multi-label and another for single-label classification. Empirical results on four public datasets show that the predictors trained with our method perform better than those trained under fully supervised techniques. Notably, the performance is maintained even when the amount of training data is reduced by $50\%$. This advantage is consistent across both tasks. The method's effectiveness comes from its streamlined architecture, which allows the encoder to be retrained along with the predictor. As a result, the encoder becomes more adaptable to the features identified by the classifier, improving the overall classification performance. Qualitative analysis reveals that the contrastive-learning-based encoder provides representations that separate the classes and capture location-based features, despite not being explicitly trained to do so. This observation indicates the method's potential for uncovering implicit spatial information within the data.
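The abstract does not say which contrastive objective is used in the first stage. As a rough illustration only, the sketch below assumes an NT-Xent-style loss (as popularised by SimCLR) applied to projection-network outputs for two augmented views of each patch. The names `cosine` and `nt_xent`, the temperature value, and the toy vectors are all hypothetical and not taken from the paper.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def nt_xent(view_a, view_b, temperature=0.5):
    """NT-Xent-style contrastive loss (an assumed choice, not the paper's stated loss).

    view_a[i] and view_b[i] are projections of two augmentations of patch i.
    Each vector is pulled towards its partner and pushed away from all others.
    """
    z = view_a + view_b          # concatenate the two lists of projections
    n = len(view_a)
    total = 0.0
    for i in range(2 * n):
        positive = (i + n) % (2 * n)   # index of the other view of the same patch
        logits = [cosine(z[i], z[k]) / temperature
                  for k in range(2 * n) if k != i]
        positive_logit = cosine(z[i], z[positive]) / temperature
        # Numerically stable log-sum-exp over all non-self similarities.
        peak = max(logits)
        log_denominator = peak + math.log(sum(math.exp(l - peak) for l in logits))
        total += log_denominator - positive_logit
    return total / (2 * n)


# Toy projections: two patches, two views each.
aligned = nt_xent([[1.0, 0.0], [0.0, 1.0]], [[1.0, 0.0], [0.0, 1.0]])
misaligned = nt_xent([[1.0, 0.0], [0.0, 1.0]], [[0.0, 1.0], [1.0, 0.0]])
```

In this toy case the loss is lower when the two views of each patch agree (`aligned`) than when they are swapped (`misaligned`), which is the behaviour a contrastive pre-training stage relies on. In the second stage described in the abstract, the pre-trained encoder would then be trained further together with a predictor; that part is not sketched here.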

Training Methods of Multi-label Prediction Classifiers for Hyperspectral Remote Sensing Images

Jan 17, 2023

Abstract: With their combined spectral depth and geometric resolution, hyperspectral remote sensing images embed a wealth of complex, non-linear information that challenges traditional computer vision techniques. Deep learning methods, known for their representation learning capabilities, are better suited to handling such complexities. Unlike applications that focus on single-label, pixel-level classification of hyperspectral remote sensing images, we propose a multi-label, patch-level classification method based on a two-component deep-learning network. We use patches of reduced spatial dimension and complete spectral depth extracted from the remote sensing images. Additionally, we investigate three training schemes for our network: Iterative, Joint, and Cascade. Experiments suggest that the Joint scheme performs best; however, applying it requires an expensive search for the best weight combination of the loss constituents. The Iterative scheme enables the two parts of the network to share features at the early stages of training, and it performs better on complex data with multiple labels. Further experiments showed that methods designed with different architectures performed well when trained on patches extracted and labeled according to our sampling method.
