Sachin Konan

Iterated Reasoning with Mutual Information in Cooperative and Byzantine Decentralized Teaming

Jan 20, 2022

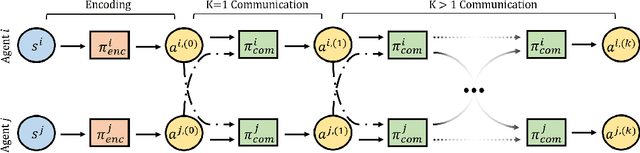

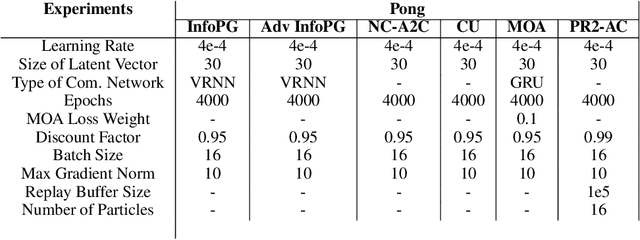

Abstract:Information sharing is key in building team cognition and enables coordination and cooperation. High-performing human teams also benefit from acting strategically with hierarchical levels of iterated communication and rationalizability, meaning a human agent can reason about the actions of their teammates in their decision-making. Yet, the majority of prior work in Multi-Agent Reinforcement Learning (MARL) does not support iterated rationalizability and only encourage inter-agent communication, resulting in a suboptimal equilibrium cooperation strategy. In this work, we show that reformulating an agent's policy to be conditional on the policies of its neighboring teammates inherently maximizes Mutual Information (MI) lower-bound when optimizing under Policy Gradient (PG). Building on the idea of decision-making under bounded rationality and cognitive hierarchy theory, we show that our modified PG approach not only maximizes local agent rewards but also implicitly reasons about MI between agents without the need for any explicit ad-hoc regularization terms. Our approach, InfoPG, outperforms baselines in learning emergent collaborative behaviors and sets the state-of-the-art in decentralized cooperative MARL tasks. Our experiments validate the utility of InfoPG by achieving higher sample efficiency and significantly larger cumulative reward in several complex cooperative multi-agent domains.

Extending One-Stage Detection with Open-World Proposals

Jan 12, 2022

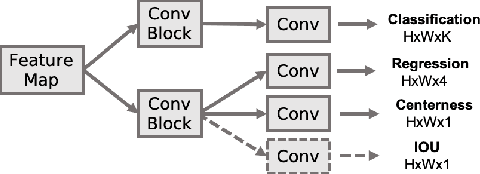

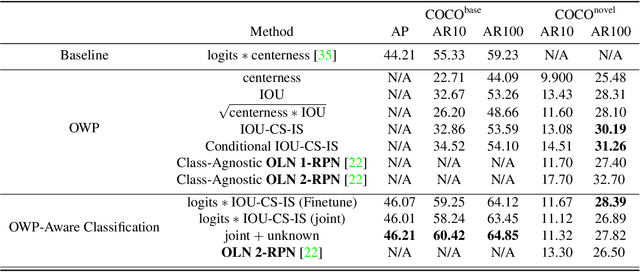

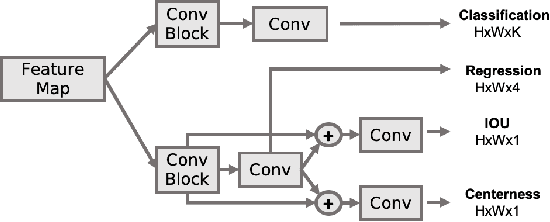

Abstract:In many applications, such as autonomous driving, hand manipulation, or robot navigation, object detection methods must be able to detect objects unseen in the training set. Open World Detection(OWD) seeks to tackle this problem by generalizing detection performance to seen and unseen class categories. Recent works have seen success in the generation of class-agnostic proposals, which we call Open-World Proposals(OWP), but this comes at the cost of a big drop on the classification task when both tasks are considered in the detection model. These works have investigated two-stage Region Proposal Networks (RPN) by taking advantage of objectness scoring cues; however, for its simplicity, run-time, and decoupling of localization and classification, we investigate OWP through the lens of fully convolutional one-stage detection network, such as FCOS. We show that our architectural and sampling optimizations on FCOS can increase OWP performance by as much as 6% in recall on novel classes, marking the first proposal-free one-stage detection network to achieve comparable performance to RPN-based two-stage networks. Furthermore, we show that the inherent, decoupled architecture of FCOS has benefits to retaining classification performance. While two-stage methods worsen by 6% in recall on novel classes, we show that FCOS only drops 2% when jointly optimizing for OWP and classification.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge