S. Sangeetha

Want to Identify, Extract and Normalize Adverse Drug Reactions in Tweets? Use RoBERTa

Jun 29, 2020

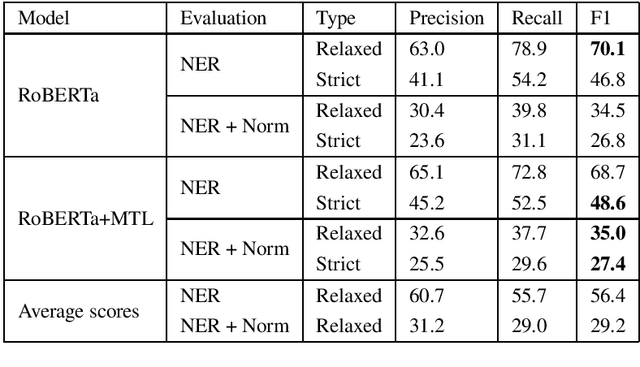

Abstract: This paper presents our approach to task 2 and task 3 of the Social Media Mining for Health (SMM4H) 2020 shared tasks. Task 2 requires differentiating adverse drug reaction (ADR) tweets from non-ADR tweets, which we treat as binary classification. Task 3 involves extracting ADR mentions and then mapping them to MedDRA codes. We treat ADR mention extraction as sequence labeling and ADR mention normalization as multi-class classification. Our system is based on the pre-trained language model RoBERTa and achieves a) an F1-score of 58% in task 2, which is 12% more than the average score, and b) a relaxed F1-score of 70.1% in ADR extraction of task 3, which is 13.7% more than the average score, and a relaxed F1-score of 35% in ADR extraction + normalization of task 3, which is 5.8% more than the average score. Overall, our models achieve promising results in both tasks, with significant improvements over the average scores.
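To make the sequence-labeling framing concrete, the sketch below shows how per-token tags could be turned back into ADR mention strings. The abstract does not state which tagging scheme is used, so the BIO labels (`B-ADR`, `I-ADR`, `O`), the function name `extract_spans`, and the example tweet are all assumptions made for illustration; in the described system the tags would come from a RoBERTa-based tagger rather than being written by hand.

```python
def extract_spans(tokens, tags):
    """Collect ADR mentions from token-level BIO tags (assumed scheme)."""
    spans = []
    current = []  # tokens of the mention currently being built
    for token, tag in zip(tokens, tags):
        if tag == "B-ADR":
            # A new mention starts; close any mention still open.
            if current:
                spans.append(" ".join(current))
            current = [token]
        elif tag == "I-ADR" and current:
            # Continue the open mention.
            current.append(token)
        else:
            # "O" tag, or a stray "I-ADR" with no open mention.
            if current:
                spans.append(" ".join(current))
            current = []
    if current:
        spans.append(" ".join(current))
    return spans


# Hypothetical tweet and hand-written tags, for illustration only.
tokens = ["this", "drug", "gave", "me", "a", "bad", "headache", "and", "nausea"]
tags = ["O", "O", "O", "O", "O", "B-ADR", "I-ADR", "O", "B-ADR"]
mentions = extract_spans(tokens, tags)
```

Each extracted mention would then be passed to the normalization step, which assigns it a MedDRA code via multi-class classification.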

Medical Concept Normalization in User Generated Texts by Learning Target Concept Embeddings

Jun 07, 2020

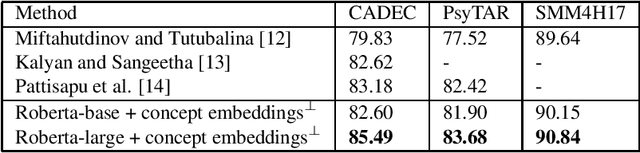

Abstract: Medical concept normalization helps in discovering standard concepts in free-form text, i.e., it maps health-related mentions to standard concepts in a vocabulary. It goes well beyond simple string matching and requires a deep semantic understanding of concept mentions. Recent research approaches concept normalization as either text classification or text matching. Each existing approach has a main drawback: a) text classification approaches ignore valuable target concept information when learning the representation of the input concept mention, and b) text matching approaches need to generate target concept embeddings separately, which is time and resource consuming. Our proposed model overcomes these drawbacks by jointly learning the representations of the input concept mention and the target concepts. First, it learns the input concept mention representation using RoBERTa. Second, it computes the cosine similarity between the embedding of the input concept mention and the embeddings of all the target concepts. Here, the embeddings of the target concepts are randomly initialized and then updated during training. Finally, the target concept with the maximum cosine similarity is assigned to the input concept mention. Our model surpasses all existing methods across three standard datasets, improving accuracy by up to 2.31%.
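The inference step described above (score the mention against every target concept by cosine similarity, then pick the maximum) can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the authors' implementation: the mention vector is a stand-in for what a RoBERTa encoder would produce, the concept identifiers `C1` to `C3` are hypothetical placeholders, and the training loop that updates the randomly initialized concept embeddings is omitted.

```python
import math
import random


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def init_concept_embeddings(concept_ids, dim, seed=0):
    """Randomly initialize one embedding per target concept.

    In the described model these would be updated during training;
    this sketch leaves them at their initial values.
    """
    rng = random.Random(seed)
    return {cid: [rng.gauss(0.0, 1.0) for _ in range(dim)] for cid in concept_ids}


def normalize_mention(mention_vec, concept_embeddings):
    """Return the concept with maximum cosine similarity, plus all scores."""
    scores = {
        cid: cosine(mention_vec, vec) for cid, vec in concept_embeddings.items()
    }
    best = max(scores, key=scores.get)
    return best, scores


# Hypothetical concept IDs; a real vocabulary would supply its own codes.
concepts = init_concept_embeddings(["C1", "C2", "C3"], dim=8, seed=0)

# Stand-in for a RoBERTa mention representation: a scaled copy of C2's
# embedding, so the expected nearest concept is known in advance.
mention_vec = [2.0 * x for x in concepts["C2"]]
best, scores = normalize_mention(mention_vec, concepts)
```

Because cosine similarity ignores vector length, the scaled copy still scores 1.0 against `C2`, which is why this measure suits comparing a mention representation with concept embeddings of differing norms.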
