S. Joshua Swamidass

Fair-Net: A Network Architecture For Reducing Performance Disparity Between Identifiable Sub-Populations

Jun 01, 2021

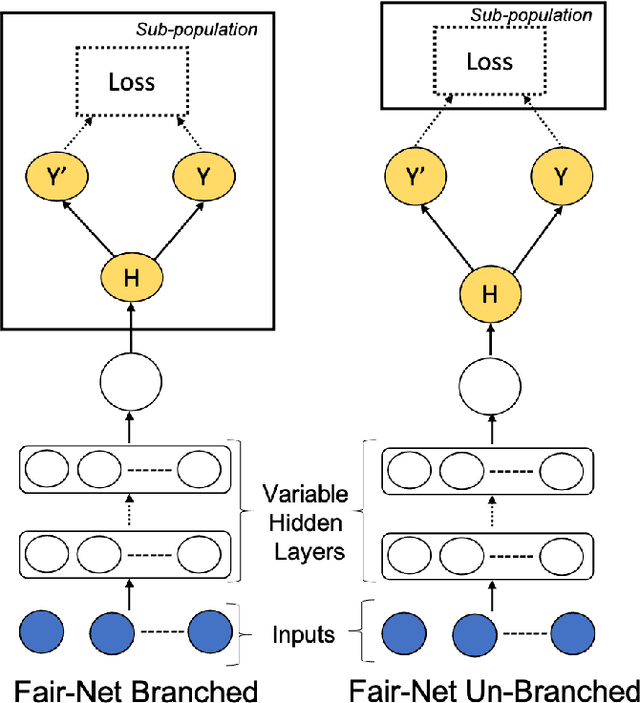

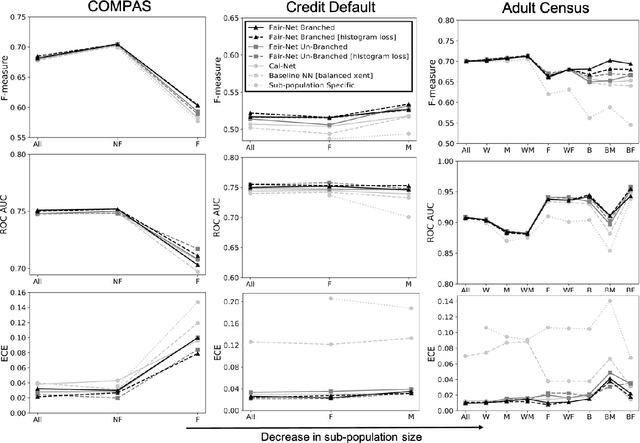

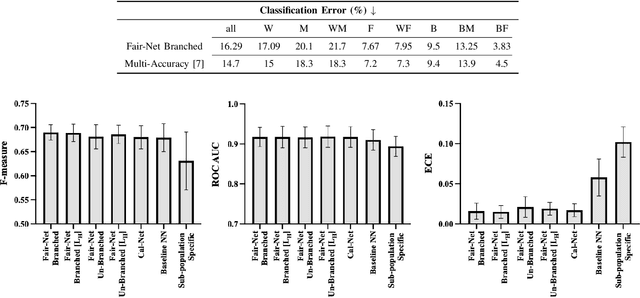

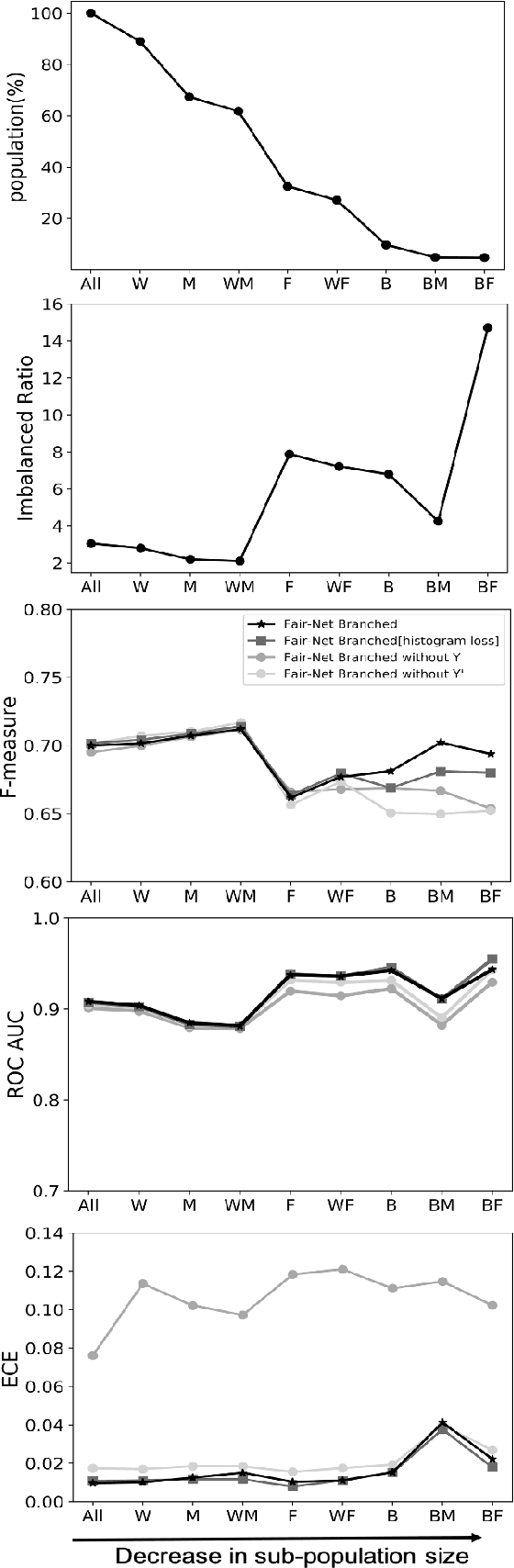

Abstract: In real-world datasets, particular groups are under-represented, much rarer than others, and machine learning classifiers will often perform worse on under-represented populations. This problem is aggravated across many domains where datasets are class-imbalanced, with a minority class far rarer than the majority class. Naive approaches to handling under-representation and class imbalance include training sub-population-specific classifiers that handle class imbalance, or training a global classifier that overlooks sub-population disparities and aims to achieve high overall accuracy by handling class imbalance. In this study, we find that these approaches are vulnerable in class-imbalanced datasets with minority sub-populations. We introduce Fair-Net, a branched multitask neural network architecture that improves both classification accuracy and probability calibration across identifiable sub-populations in class-imbalanced datasets. Fair-Net is a straightforward extension to the output layer and error function of a network, so it can be incorporated into far more complex architectures. Empirical studies with three real-world benchmark datasets demonstrate that Fair-Net improves classification and calibration performance, substantially reducing performance disparity between gender and racial sub-populations.
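
To make "probability calibration across identifiable sub-populations" concrete, the sketch below computes a binned expected calibration error (ECE) separately for each group. It is only an illustration: the abstract does not say which calibration metric the paper uses, and the function names, the choice of ECE, and the default of 10 bins are all assumptions made here. It does not implement Fair-Net itself.

```python
from collections import defaultdict


def expected_calibration_error(probs, labels, n_bins=10):
    """Binned ECE: size-weighted mean of |observed positive rate - mean predicted probability|.

    Assumption: ECE is used here as a generic calibration measure,
    not necessarily the metric reported in the paper.
    """
    bins = defaultdict(list)
    for p, y in zip(probs, labels):
        # Clamp so that p == 1.0 falls in the last bin.
        idx = min(int(p * n_bins), n_bins - 1)
        bins[idx].append((p, y))

    total = len(probs)
    ece = 0.0
    for members in bins.values():
        mean_p = sum(p for p, _ in members) / len(members)
        pos_rate = sum(y for _, y in members) / len(members)
        ece += (len(members) / total) * abs(pos_rate - mean_p)
    return ece


def calibration_by_group(probs, labels, groups, n_bins=10):
    """Return {group: ECE} so calibration disparity between groups can be compared."""
    by_group = defaultdict(lambda: ([], []))
    for p, y, g in zip(probs, labels, groups):
        by_group[g][0].append(p)
        by_group[g][1].append(y)
    return {
        g: expected_calibration_error(ps, ys, n_bins)
        for g, (ps, ys) in by_group.items()
    }
```

A large gap between the per-group values would indicate the kind of calibration disparity the abstract describes, whereas a single pooled ECE could hide it.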

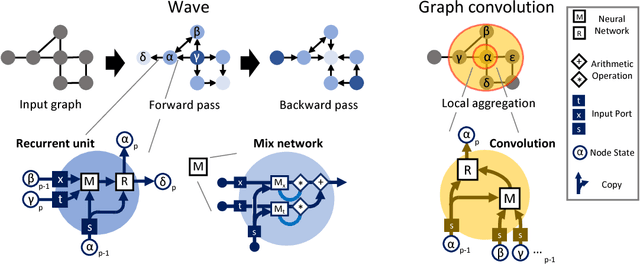

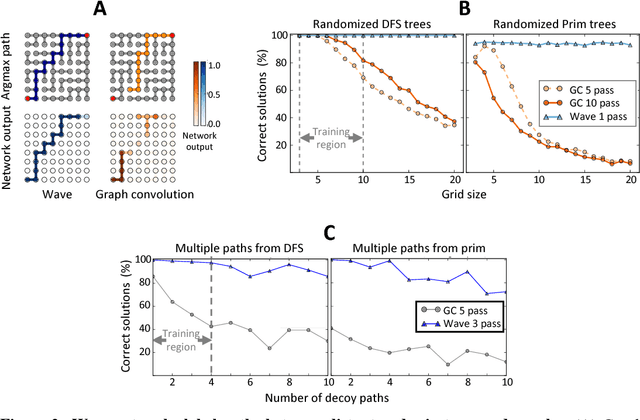

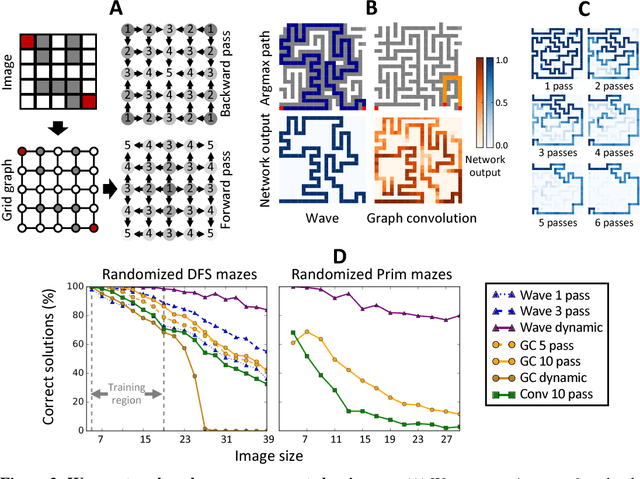

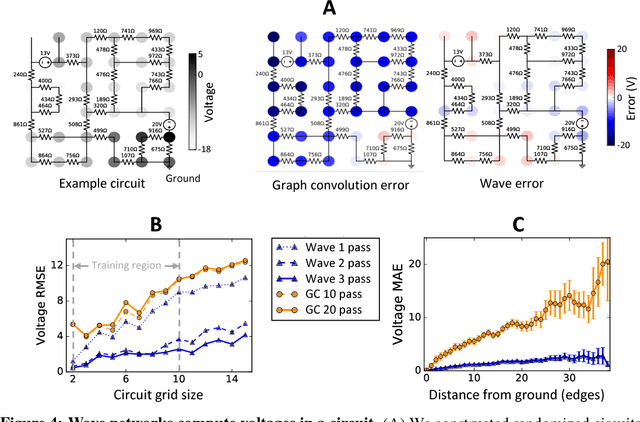

Deep learning long-range information in undirected graphs with wave networks

Oct 29, 2018

Abstract: Graph algorithms are key tools in many fields of science and technology. Some of these algorithms depend on propagating information between distant nodes in a graph. Recently, a number of deep learning architectures have been proposed to learn on undirected graphs. However, most of these architectures aggregate information in the local neighborhood of a node, and therefore they may not be capable of efficiently propagating long-range information. To solve this problem, we examine a recently proposed architecture, wave, which propagates information back and forth across an undirected graph in waves of nonlinear computation. We compare wave to graph convolution, an architecture based on local aggregation, and find that wave learns three different graph-based tasks with greater efficiency and accuracy. These three tasks are (1) labeling a path connecting two nodes in a graph, (2) solving a maze presented as an image, and (3) computing voltages in a circuit. These tasks range from trivial to very difficult, but wave can extrapolate from small training examples to much larger testing examples. These results show that wave may be able to efficiently solve a wide range of problems that require long-range information propagation across undirected graphs. An implementation of the wave network and example code for the maze problem are included in the tflon deep learning toolkit (https://bitbucket.org/mkmatlock/tflon).
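
The limitation of local aggregation mentioned above can be illustrated without any learning at all. The sketch below counts how many rounds of one-hop neighbor propagation are needed before a target node can be influenced by a source node. It is neither the wave network nor graph convolution; the helper names `rounds_to_reach` and `path_graph` are hypothetical and exist only for this illustration.

```python
def rounds_to_reach(adjacency, source, target):
    """Count rounds of one-hop propagation until `target` has seen `source`.

    Each round models one layer of purely local aggregation: every node
    that already carries the signal passes it to its direct neighbors.
    Returns None if the target is unreachable.
    """
    reached = {source}
    rounds = 0
    while target not in reached:
        frontier = {nbr for node in reached for nbr in adjacency[node]}
        if frontier <= reached:
            return None  # no new nodes: target is disconnected from source
        reached |= frontier
        rounds += 1
    return rounds


def path_graph(n):
    """Undirected path 0 - 1 - ... - (n-1) as an adjacency dict."""
    return {i: [j for j in (i - 1, i + 1) if 0 <= j < n] for i in range(n)}


if __name__ == "__main__":
    for n in (5, 50, 500):
        print(n, rounds_to_reach(path_graph(n), 0, n - 1))
```

On a path graph, the number of rounds grows linearly with the distance between the two end nodes, so a model with a fixed number of local-aggregation layers cannot relate nodes that are farther apart than its depth. This is the long-range propagation problem the abstract describes.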
