Rudolf Jagdhuber

Feature Selection Methods for Cost-Constrained Classification in Random Forests

Aug 17, 2020

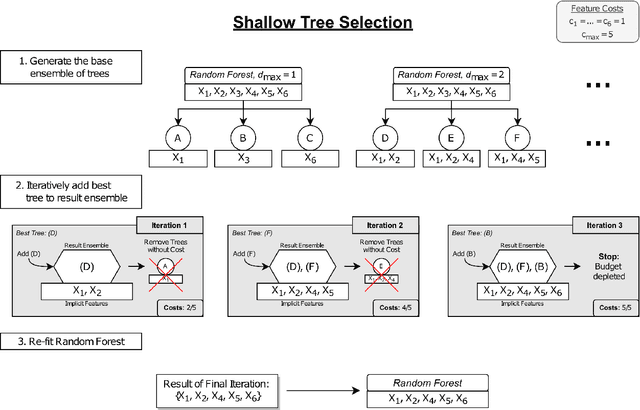

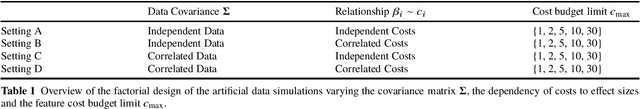

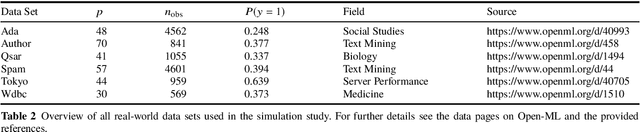

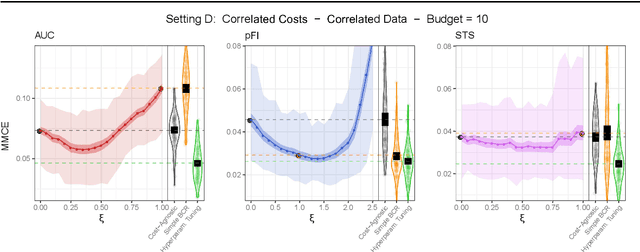

Abstract: Cost-sensitive feature selection describes a feature selection problem in which each feature incurs an individual cost for inclusion in a model. These costs make it possible to incorporate undesirable aspects of features, e.g. the failure rate of a measuring device or patient harm, into the model selection process. Random Forests pose a particularly challenging problem for feature selection, as features are generally entangled in an ensemble of multiple trees, which makes a post hoc removal of features infeasible. Feature selection methods therefore often either focus on simple pre-filtering methods or require many Random Forest evaluations along their optimization path, which drastically increases the computational complexity. To solve both issues, we propose Shallow Tree Selection, a novel, fast, and multivariate feature selection method that selects features from small tree structures. Additionally, we adapt three standard feature selection algorithms for cost-sensitive learning by introducing a hyperparameter-controlled benefit-cost ratio (BCR) criterion for each method. In an extensive simulation study, we assess this criterion and compare the proposed methods to multiple performance-based baseline alternatives on four artificial data settings and seven real-world data settings. We show that all methods using a hyperparameterized BCR criterion outperform the baseline alternatives. In a direct comparison between the proposed methods, each method shows strengths in certain settings, but no one-size-fits-all solution exists. On a global average, we could identify preferable choices among our BCR-based methods. Nevertheless, we conclude that a practical analysis should never rely on a single method only, but always compare different approaches to obtain the best results.

Implications on Feature Detection when using the Benefit-Cost Ratio

Aug 15, 2020

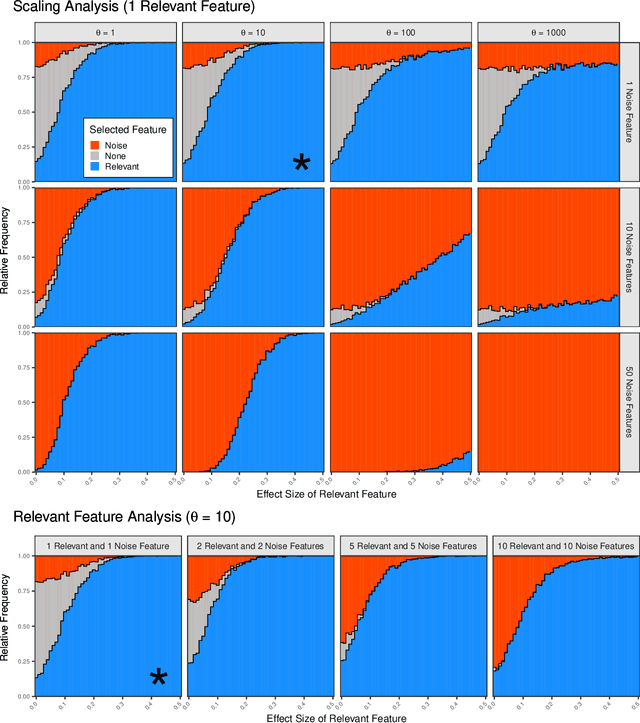

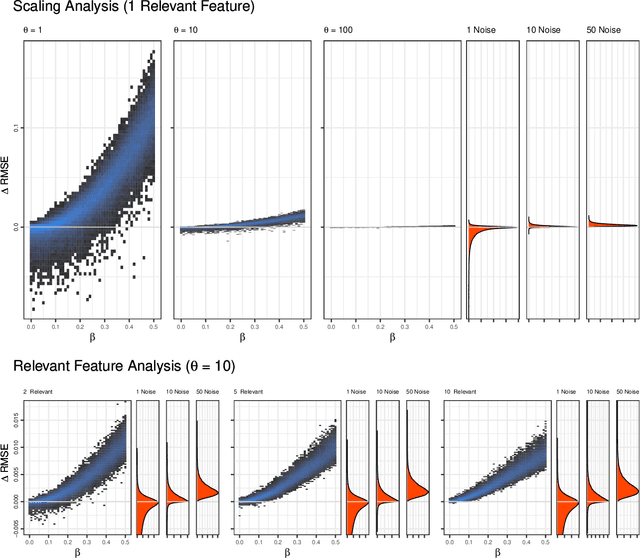

Abstract: In many practical machine learning applications, there are two objectives: one is to maximize predictive accuracy and the other is to minimize the costs of the resulting model. The costs of individual features may be financial, but can also refer to other aspects, such as evaluation time. Feature selection addresses both objectives, as it reduces the number of features and can improve the generalization ability of the model. If costs differ between features, the feature selection needs to trade off the individual benefit and cost of each feature. A popular trade-off choice is the ratio of the two, the benefit-cost ratio (BCR). In this paper we analyze the implications of using this measure, with a special focus on its ability to distinguish relevant features from noise. We perform a simulation study for different cost and data settings and obtain detection rates of relevant features and empirical distributions of the trade-off ratio. Our simulation study exposed a clear impact of the cost setting on the detection rate. In situations with large cost differences and small effect sizes, the BCR missed relevant features and preferred cheap noise features. We conclude that a trade-off between predictive performance and costs without a controlling hyperparameter can easily overemphasize very cheap noise features. While the simple benefit-cost ratio offers an easy solution to incorporate costs, it is important to be aware of its risks. Avoiding costs close to 0, rescaling large cost differences, or using a hyperparameter trade-off are ways to counteract the adverse effects exposed in this paper.
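
The effect described in this abstract can be illustrated with a small sketch. It is not taken from the paper: the feature names, benefit values, and costs are made up, and the hyperparameterized form `benefit / cost ** xi` with the exponent `xi` is an assumed way of realizing a "hyperparameter trade-off", not necessarily the authors' criterion. With `xi = 1` the score is the plain benefit-cost ratio; smaller values of `xi` reduce the influence of the costs.

```python
def bcr_scores(benefits, costs, xi=1.0):
    """Return benefit / cost**xi per feature.

    xi is a hypothetical trade-off hyperparameter (an assumption of this
    sketch): xi=1 gives the plain benefit-cost ratio, xi=0 ignores costs.
    """
    if any(c <= 0 for c in costs):
        raise ValueError("costs must be strictly positive")
    return [b / (c ** xi) for b, c in zip(benefits, costs)]


def rank_features(names, benefits, costs, xi=1.0):
    """Rank feature names by descending score."""
    scores = bcr_scores(benefits, costs, xi)
    ranked = sorted(zip(scores, names), key=lambda pair: pair[0], reverse=True)
    return [name for _, name in ranked]


# Illustrative values only: a relevant but expensive feature
# and a very cheap noise feature with a small spurious benefit.
names = ["relevant_expensive", "noise_cheap"]
benefits = [0.30, 0.01]
costs = [100.0, 0.5]

plain = rank_features(names, benefits, costs, xi=1.0)
damped = rank_features(names, benefits, costs, xi=0.25)

print("plain BCR ranking: ", plain)
print("damped BCR ranking:", damped)
```

In this toy setting the plain ratio ranks the cheap noise feature first, because its near-zero cost inflates the ratio, while the damped variant ranks the relevant feature first. How `xi` should be chosen in practice is not specified here; it would have to be tuned like any other hyperparameter.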
