Rodrigo Alves Lima

Challenges and Opportunities in Rapid Epidemic Information Propagation with Live Knowledge Aggregation from Social Media

Nov 09, 2020

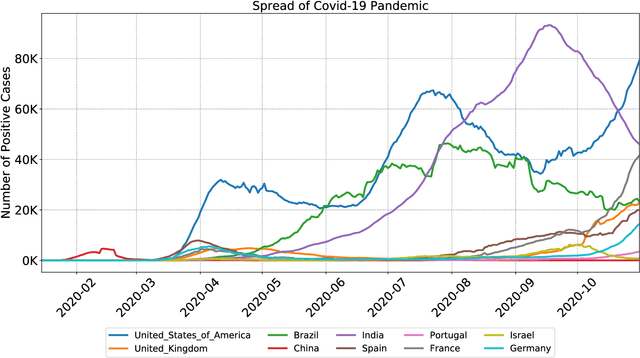

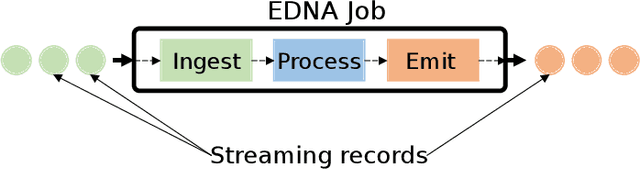

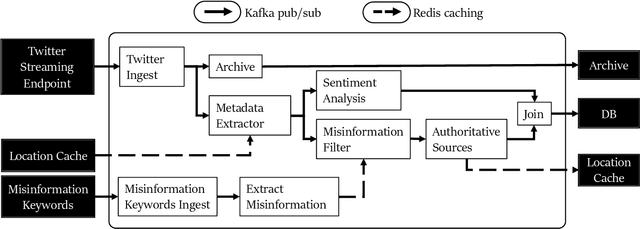

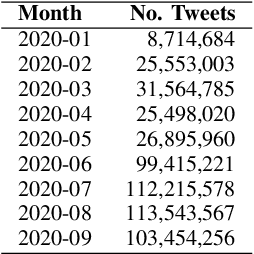

Abstract: A rapidly evolving situation such as the COVID-19 pandemic is a significant challenge for AI/ML models because of its unpredictability. The most reliable indicator of the pandemic spreading has been the number of test positive cases. However, the tests are both incomplete (due to untested asymptomatic cases) and late (due to the lag from the initial contact event, worsening symptoms, and test results). Social media can complement physical test data due to faster and higher coverage, but they present a different challenge: significant amounts of noise, misinformation, and disinformation. We believe that social media can become a good indicator of the pandemic, provided two conditions are met. The first (True Novelty) is the capture of new, previously unknown information from unpredictably evolving situations. The second (Fact vs. Fiction) is the distinction of verifiable facts from misinformation and disinformation. Social media information that satisfies those two conditions is called live knowledge. We apply an evidence-based knowledge acquisition (EBKA) approach to collect, filter, and update live knowledge through the integration of social media sources with authoritative sources. Although limited in quantity, the reliable training data from authoritative sources enable the filtering of misinformation as well as the capture of truly new information. We describe the EDNA/LITMUS tools that implement EBKA, integrating social media such as Twitter and Facebook with authoritative sources such as WHO and CDC, creating and updating live knowledge on the COVID-19 pandemic.

Robust, Extensible, and Fast: Teamed Classifiers for Vehicle Tracking and Vehicle Re-ID in Multi-Camera Networks

Jan 07, 2020

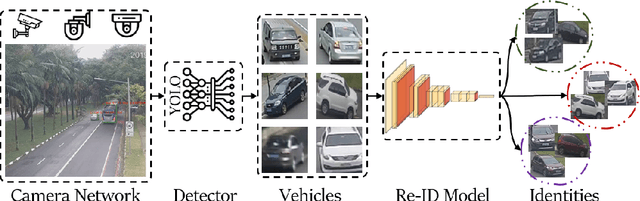

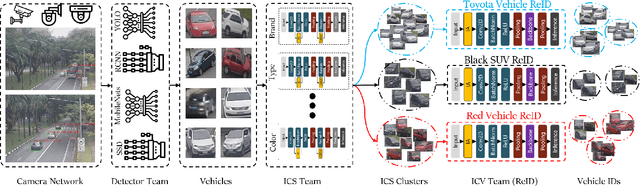

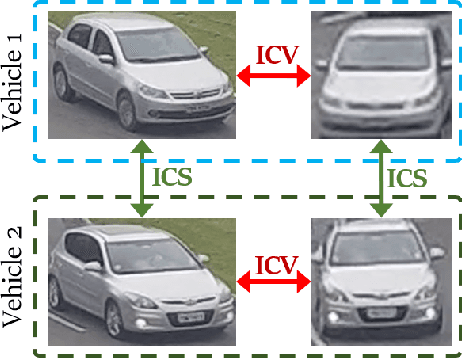

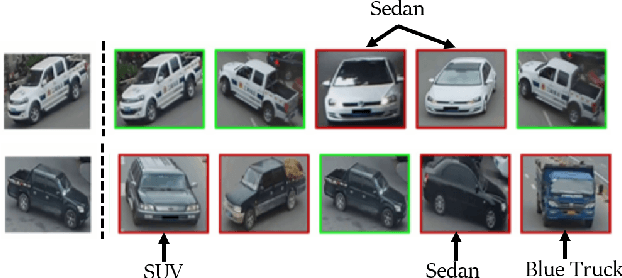

Abstract: As camera networks have become more ubiquitous over the past decade, the research interest in video management has shifted to analytics on multi-camera networks. This includes performing tasks such as object detection, attribute identification, and vehicle/person tracking across different cameras without overlap. Current frameworks for management are designed for multi-camera networks in a closed dataset environment where there is limited variability in cameras and characteristics of the surveillance environment are well known. Furthermore, current frameworks are designed for offline analytics with guidance from human operators for forensic applications. This paper presents a teamed classifier framework for video analytics in heterogeneous many-camera networks with adversarial conditions such as multi-scale, multi-resolution cameras capturing the environment with varying occlusion, blur, and orientations. We describe an implementation for vehicle tracking and vehicle re-identification (re-id), where we implement a zero-shot learning (ZSL) system that performs automated tracking of all vehicles all the time. Our evaluations on VeRi-776 and Cars196 show the teamed classifier framework is robust to adversarial conditions, extensible to changing video characteristics such as new vehicle types/brands and new cameras, and offers real-time performance compared to current offline video analytics approaches.
