Rod Grupen

Associating Grasp Configurations with Hierarchical Features in Convolutional Neural Networks

Jul 26, 2017

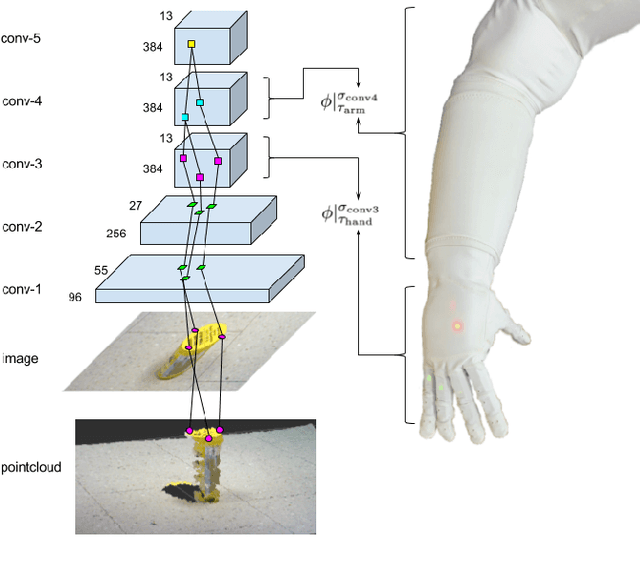

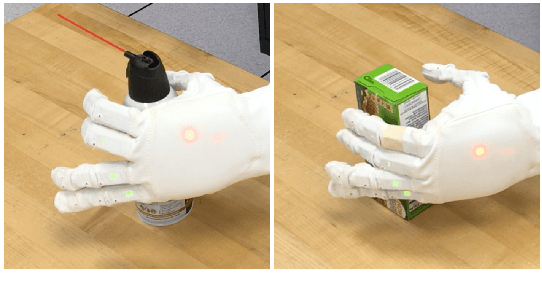

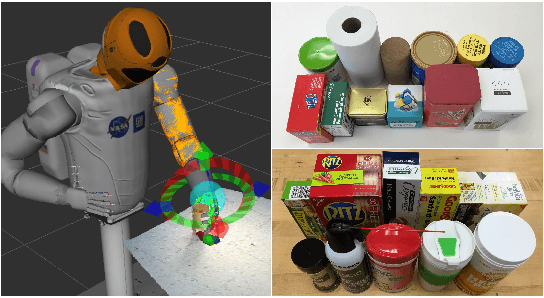

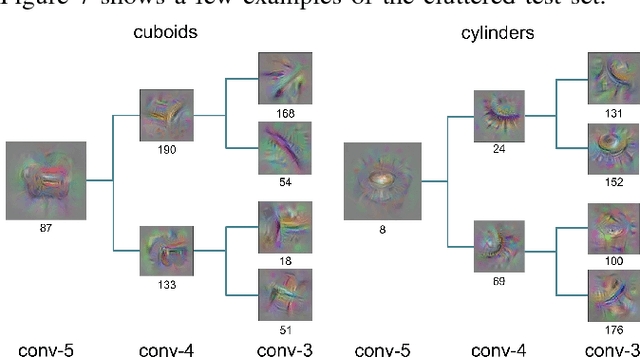

Abstract: In this work, we provide a solution for posturing the anthropomorphic Robonaut-2 hand and arm for grasping based on visual information. We learn a mapping from visual features extracted from a convolutional neural network (CNN) to grasp points. We demonstrate that a CNN pre-trained for image classification can be applied to a grasping task based on a small set of grasping examples. Our approach takes advantage of the hierarchical nature of the CNN by identifying features that capture the hierarchical support relations between filters in different CNN layers and locating their 3D positions by tracing activations backwards in the CNN. When this backward trace terminates in the RGB-D image, important manipulable structures are thereby localized. These features, which reside in different layers of the CNN, are then associated with controllers that engage different kinematic subchains in the hand/arm system for grasping. A grasping dataset is collected using demonstrated hand/object relationships for Robonaut-2 to evaluate the proposed approach in terms of the precision of the resulting preshape postures. We demonstrate that this approach outperforms baseline approaches in cluttered scenarios on the grasping dataset, and that it outperforms a point-cloud-based approach on a grasping task using Robonaut-2.
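The backward trace described in the abstract can be illustrated with a toy sketch. This is not the authors' implementation: it assumes a single stride-1, unpadded convolutional (cross-correlation) layer with no pooling, picks the lower-layer filter response that contributes most to a chosen upper-layer activation, and then back-projects the resulting pixel to 3D with a pinhole camera model. The function names `trace_back` and `pixel_to_3d` and the intrinsics `fx`, `fy`, `cx`, `cy` are hypothetical and chosen only for illustration.

```python
import numpy as np

def trace_back(lower_act, weights, filt, pos):
    """Trace one upper-layer activation back to its strongest lower-layer support.

    lower_act: (C, H, W) activations of the lower layer.
    weights:   (F, C, k, k) filters of the upper layer (assumed stride 1, no padding).
    filt:      index of the upper-layer filter being traced.
    pos:       (y, x) location of the activation in the upper-layer map.
    Returns (lower_channel, (y, x)) in lower-layer coordinates.
    """
    y, x = pos
    k = weights.shape[-1]
    # Receptive field of the upper-layer unit inside the lower layer.
    window = lower_act[:, y:y + k, x:x + k]
    # Per-element contribution to the upper-layer pre-activation.
    contrib = weights[filt] * window
    c, dy, dx = np.unravel_index(np.argmax(contrib), contrib.shape)
    return int(c), (int(y + dy), int(x + dx))

def pixel_to_3d(pixel, depth, fx, fy, cx, cy):
    """Back-project a (row, col) pixel to camera-frame XYZ (assumed pinhole model)."""
    v, u = pixel
    z = float(depth[v, u])
    return np.array([(u - cx) * z / fx, (v - cy) * z / fy, z])

# Toy data: one strong response in lower-layer channel 1 at pixel (3, 2).
lower_act = np.zeros((2, 5, 5))
lower_act[1, 3, 2] = 5.0
weights = np.ones((1, 2, 3, 3))
depth = np.full((5, 5), 2.0)  # constant depth, arbitrary units

channel, pixel = trace_back(lower_act, weights, filt=0, pos=(1, 1))
point = pixel_to_3d(pixel, depth, fx=1.0, fy=1.0, cx=0.0, cy=0.0)
```

In a deeper network, the same step would be repeated layer by layer until the trace reaches the input image, with additional bookkeeping for strides and pooling that this sketch omits.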
