Robert Kaufman

Improving Human-Autonomous Vehicle Interaction in Complex Systems

Apr 24, 2025

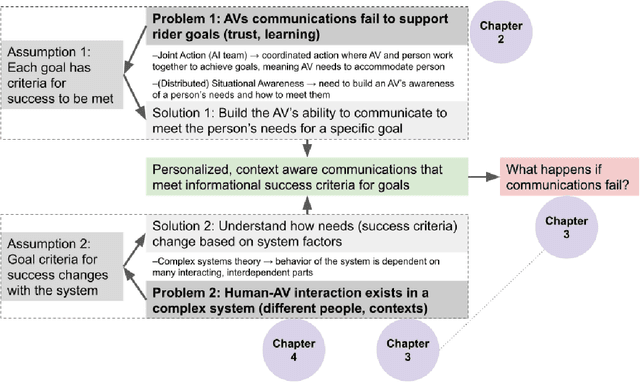

Abstract:Unresolved questions about how autonomous vehicles (AVs) should meet the informational needs of riders hinder real-world adoption. Complicating our ability to satisfy rider needs is that different people, goals, and driving contexts have different criteria for what constitutes interaction success. Unfortunately, most human-AV research and design today treats all people and situations uniformly. It is crucial to understand how an AV should communicate to meet rider needs, and how communications should change when the human-AV complex system changes. I argue that understanding the relationships between different aspects of the human-AV system can help us build improved and adaptable AV communications. I support this argument using three empirical studies. First, I identify optimal communication strategies that enhance driving performance, confidence, and trust for learning in extreme driving environments. Findings highlight the need for task-sensitive, modality-appropriate communications tuned to learner cognitive limits and goals. Next, I highlight the consequences of deploying faulty communication systems and demonstrate the need for context-sensitive communications. Third, I use machine learning (ML) to illuminate personal factors predicting trust in AVs, emphasizing the importance of tailoring designs to individual traits and concerns. Together, this dissertation supports the necessity of transparent, adaptable, and personalized AV systems that cater to individual needs, goals, and contextual demands. By considering the complex system within which human-AV interactions occur, we can deliver valuable insights for designers, researchers, and policymakers. This dissertation also provides a concrete domain to study theories of human-machine joint action and situational awareness, and can be used to guide future human-AI interaction research. [shortened for arxiv]

Predicting Trust In Autonomous Vehicles: Modeling Young Adult Psychosocial Traits, Risk-Benefit Attitudes, And Driving Factors With Machine Learning

Sep 13, 2024Abstract:Low trust remains a significant barrier to Autonomous Vehicle (AV) adoption. To design trustworthy AVs, we need to better understand the individual traits, attitudes, and experiences that impact people's trust judgements. We use machine learning to understand the most important factors that contribute to young adult trust based on a comprehensive set of personal factors gathered via survey (n = 1457). Factors ranged from psychosocial and cognitive attributes to driving style, experiences, and perceived AV risks and benefits. Using the explainable AI technique SHAP, we found that perceptions of AV risks and benefits, attitudes toward feasibility and usability, institutional trust, prior experience, and a person's mental model are the most important predictors. Surprisingly, psychosocial and many technology- and driving-specific factors were not strong predictors. Results highlight the importance of individual differences for designing trustworthy AVs for diverse groups and lead to key implications for future design and research.

What Did My Car Say? Impact of Autonomous Vehicle Explanation Errors and Driving Context On Comfort, Reliance, Satisfaction, and Driving Confidence

Sep 10, 2024

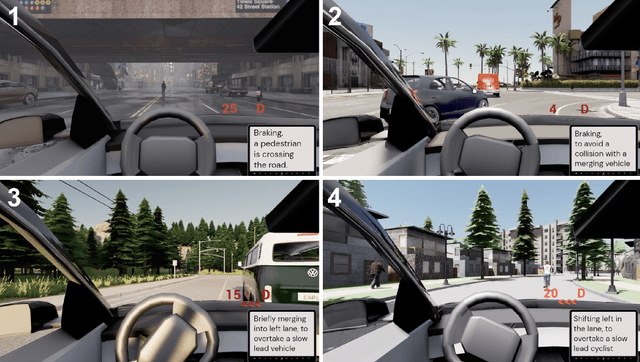

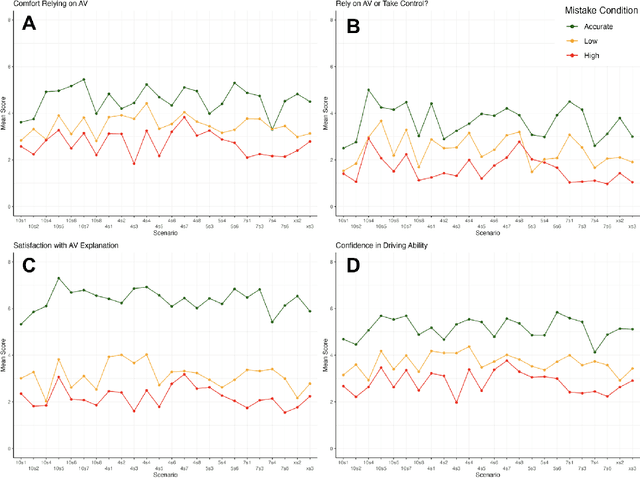

Abstract:Explanations for autonomous vehicle (AV) decisions may build trust, however, explanations can contain errors. In a simulated driving study (n = 232), we tested how AV explanation errors, driving context characteristics (perceived harm and driving difficulty), and personal traits (prior trust and expertise) affected a passenger's comfort in relying on an AV, preference for control, confidence in the AV's ability, and explanation satisfaction. Errors negatively affected all outcomes. Surprisingly, despite identical driving, explanation errors reduced ratings of the AV's driving ability. Severity and potential harm amplified the negative impact of errors. Contextual harm and driving difficulty directly impacted outcome ratings and influenced the relationship between errors and outcomes. Prior trust and expertise were positively associated with outcome ratings. Results emphasize the need for accurate, contextually adaptive, and personalized AV explanations to foster trust, reliance, satisfaction, and confidence. We conclude with design, research, and deployment recommendations for trustworthy AV explanation systems.

Developing Situational Awareness for Joint Action with Autonomous Vehicles

Apr 17, 2024

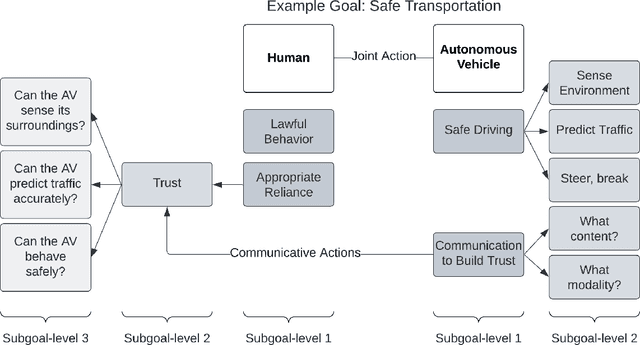

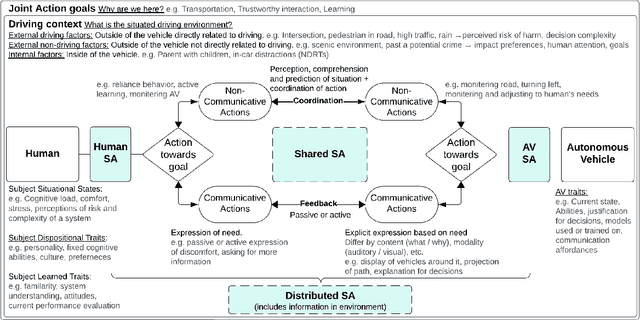

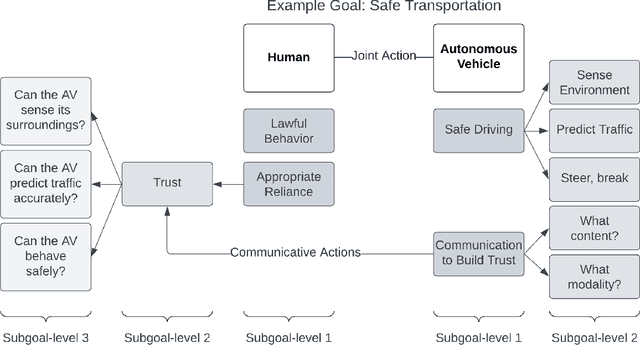

Abstract:Unanswered questions about how human-AV interaction designers can support rider's informational needs hinders Autonomous Vehicles (AV) adoption. To achieve joint human-AV action goals - such as safe transportation, trust, or learning from an AV - sufficient situational awareness must be held by the human, AV, and human-AV system collectively. We present a systems-level framework that integrates cognitive theories of joint action and situational awareness as a means to tailor communications that meet the criteria necessary for goal success. This framework is based on four components of the shared situation: AV traits, action goals, subject-specific traits and states, and the situated driving context. AV communications should be tailored to these factors and be sensitive when they change. This framework can be useful for understanding individual, shared, and distributed human-AV situational awareness and designing for future AV communications that meet the informational needs and goals of diverse groups and in diverse driving contexts.

Learning Racing From an AI Coach: Effects of Multimodal Autonomous Driving Explanations on Driving Performance, Cognitive Load, Expertise, and Trust

Jan 10, 2024Abstract:In a pre-post experiment (n = 41), we test the impact of an AI Coach's explanatory communications modeled after the instructions of human driving experts. Participants were divided into four (4) groups to assess two (2) dimensions of the AI coach's explanations: information type ('what' and 'why'-type explanations) and presentation modality (auditory and visual). We directly compare how AI Coaching sessions employing these techniques impact driving performance, cognitive load, confidence, expertise, and trust in an observation learning context. Through interviews, we delineate the learning process of our participants. Results show that an AI driving coach can be useful for teaching performance driving skills to novices. Comparing between groups, we find the type and modality of information influences performance outcomes. We attribute differences to how information directed attention, mitigated uncertainty, and influenced overload experienced by participants. These, in turn, affected how successfully participants were able to learn. Results suggest efficient, modality-appropriate explanations should be opted for when designing effective HMI communications that can instruct without overwhelming. Further, they support the need to align communications with human learning and cognitive processes. Results are synthesized into eight design implications for future autonomous vehicle HMI and AI coach design.

Explainable AI And Visual Reasoning: Insights From Radiology

Apr 06, 2023

Abstract:Why do explainable AI (XAI) explanations in radiology, despite their promise of transparency, still fail to gain human trust? Current XAI approaches provide justification for predictions, however, these do not meet practitioners' needs. These XAI explanations lack intuitive coverage of the evidentiary basis for a given classification, posing a significant barrier to adoption. We posit that XAI explanations that mirror human processes of reasoning and justification with evidence may be more useful and trustworthy than traditional visual explanations like heat maps. Using a radiology case study, we demonstrate how radiology practitioners get other practitioners to see a diagnostic conclusion's validity. Machine-learned classifications lack this evidentiary grounding and consequently fail to elicit trust and adoption by potential users. Insights from this study may generalize to guiding principles for human-centered explanation design based on human reasoning and justification of evidence.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge