Rhea Chowers

Control+Shift: Generating Controllable Distribution Shifts

Sep 12, 2024

Abstract: We propose a new method for generating realistic datasets with distribution shifts using any decoder-based generative model. Our approach systematically creates datasets with varying intensities of distribution shifts, facilitating a comprehensive analysis of model performance degradation. We then use these generated datasets to evaluate the performance of various commonly used networks and observe a consistent decline in performance with increasing shift intensity, even when the effect is almost perceptually unnoticeable to the human eye. We see this degradation even when using data augmentations. We also find that enlarging the training dataset beyond a certain point has no effect on the robustness and that stronger inductive biases increase robustness.

Why do CNNs Learn Consistent Representations in their First Layer Independent of Labels and Architecture?

Jun 06, 2022

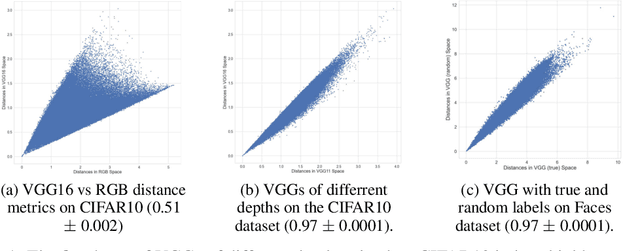

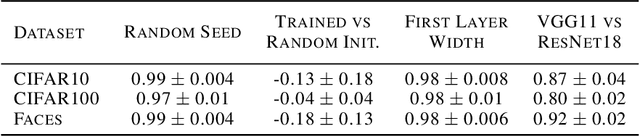

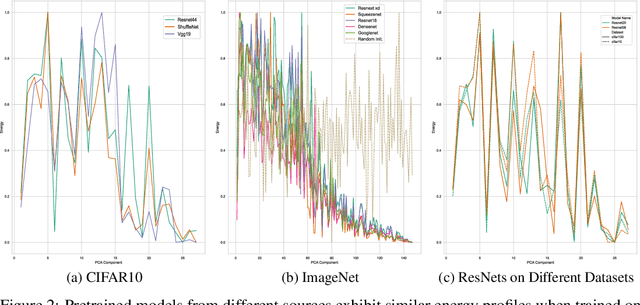

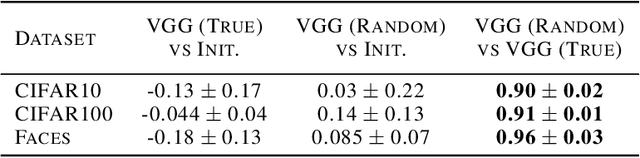

Abstract: It has previously been observed that the filters learned in the first layer of a CNN are qualitatively similar for different networks and tasks. We extend this finding and show a high quantitative similarity between filters learned by different networks. We consider the CNN filters as a filter bank and measure the sensitivity of the filter bank to different frequencies. We show that the sensitivity profiles of different networks are almost identical, yet far from the profile at initialization. Remarkably, we show that the profile remains the same even when the network is trained with random labels. To understand this effect, we derive an analytic formula for the sensitivity of the filters in the first layer of a linear CNN. We prove that when the average patch in images of the two classes is identical, the sensitivity profile of the filters in the first layer will be identical in expectation when using the true labels or random labels and will only depend on the second-order statistics of image patches. We empirically demonstrate that the average patch assumption holds for realistic datasets. Finally, we show that the energy profile of filters in nonlinear CNNs is highly correlated with the energy profile of linear CNNs and that our analysis of linear networks allows us to predict when representations learned by state-of-the-art networks trained on benchmark classification tasks will depend on the labels.
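The abstract does not spell out how the sensitivity of a filter bank to each frequency is computed. As a rough illustration only, the sketch below assumes one plausible definition: the total squared magnitude of the filters' 2D Fourier responses at each frequency, summed over the bank. The function name `filter_bank_sensitivity`, the `fft_size` parameter, and the random example bank are hypothetical and are not taken from the paper.

```python
import numpy as np

def filter_bank_sensitivity(filters, fft_size=16):
    """Energy of a filter bank at each 2D spatial frequency.

    filters: array of shape (n_filters, k, k), e.g. first-layer CNN kernels
             for a single input channel.
    Returns an (fft_size, fft_size) array with the zero frequency at the center.

    Assumption: "sensitivity" is taken to be the summed squared magnitude of
    the zero-padded 2D DFT of each filter. The paper may define it differently.
    """
    filters = np.asarray(filters, dtype=float)
    # Zero-pad each k x k filter to fft_size x fft_size and take its 2D DFT.
    spectra = np.fft.fft2(filters, s=(fft_size, fft_size), axes=(-2, -1))
    # Sum the energy over all filters in the bank.
    energy = np.sum(np.abs(spectra) ** 2, axis=0)
    # Move the zero frequency to the center for easier inspection.
    return np.fft.fftshift(energy)

# Example: a bank of random 3x3 filters, standing in for an untrained layer.
rng = np.random.default_rng(0)
random_bank = rng.standard_normal((64, 3, 3))
profile = filter_bank_sensitivity(random_bank)
```

Under this assumed definition, two networks could be compared by computing one profile per network and correlating the two arrays, for instance after flattening them.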
