Raz Birman

Video QoE Metrics from Encrypted Traffic: Application-agnostic Methodology

Apr 20, 2025

Abstract:Instant Messaging-Based Video Call Applications (IMVCAs) and Video Conferencing Applications (VCAs) have become integral to modern communication. Ensuring a high Quality of Experience (QoE) for users in this context is critical for network operators, as network conditions significantly impact user QoE. However, network operators lack access to end-device QoE metrics due to encrypted traffic. Existing solutions estimate QoE metrics from encrypted traffic traversing the network, with the most advanced approaches leveraging machine learning models. Subsequently, the need for ground truth QoE metrics for training and validation poses a challenge, as not all video applications provide these metrics. To address this challenge, we propose an application-agnostic approach for objective QoE estimation from encrypted traffic. Independent of the video application, we obtained key video QoE metrics, enabling broad applicability to various proprietary IMVCAs and VCAs. To validate our solution, we created a diverse dataset from WhatsApp video sessions under various network conditions, comprising 25,680 seconds of traffic data and QoE metrics. Our evaluation shows high performance across the entire dataset, with 85.2% accuracy for FPS predictions within an error margin of two FPS, and 90.2% accuracy for PIQE-based quality rating classification.

WARLearn: Weather-Adaptive Representation Learning

Nov 21, 2024

Abstract:This paper introduces WARLearn, a novel framework designed for adaptive representation learning in challenging and adversarial weather conditions. Leveraging the in-variance principal used in Barlow Twins, we demonstrate the capability to port the existing models initially trained on clear weather data to effectively handle adverse weather conditions. With minimal additional training, our method exhibits remarkable performance gains in scenarios characterized by fog and low-light conditions. This adaptive framework extends its applicability beyond adverse weather settings, offering a versatile solution for domains exhibiting variations in data distributions. Furthermore, WARLearn is invaluable in scenarios where data distributions undergo significant shifts over time, enabling models to remain updated and accurate. Our experimental findings reveal a remarkable performance, with a mean average precision (mAP) of 52.6% on unseen real-world foggy dataset (RTTS). Similarly, in low light conditions, our framework achieves a mAP of 55.7% on unseen real-world low light dataset (ExDark). Notably, WARLearn surpasses the performance of state-of-the-art frameworks including FeatEnHancer, Image Adaptive YOLO, DENet, C2PNet, PairLIE and ZeroDCE, by a substantial margin in adverse weather, improving the baseline performance in both foggy and low light conditions. The WARLearn code is available at https://github.com/ShubhamAgarwal12/WARLearn

Real-Time Weather Image Classification with SVM

Sep 01, 2024

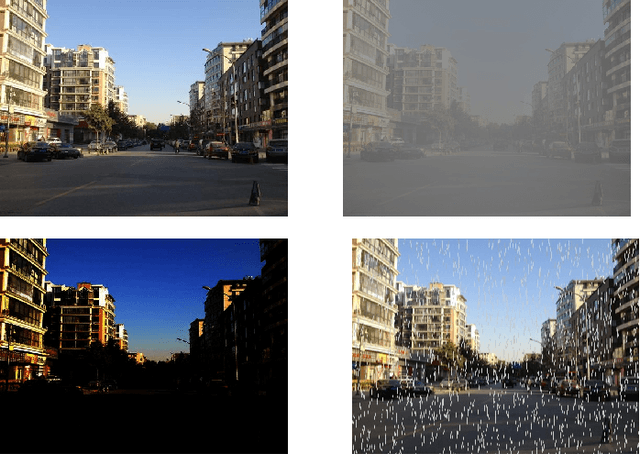

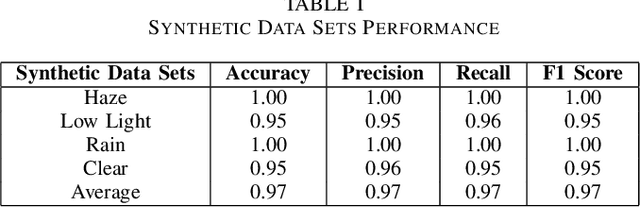

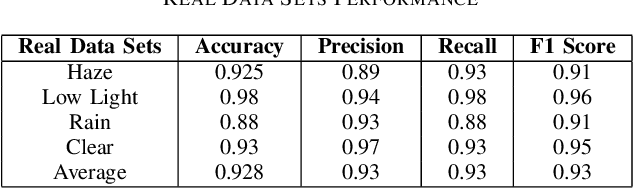

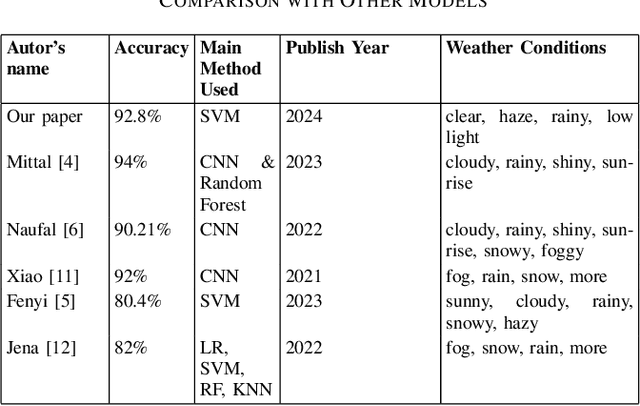

Abstract:Accurate classification of weather conditions in images is essential for enhancing the performance of object detection and classification models under varying weather conditions. This paper presents a comprehensive study on classifying weather conditions in images into four categories: rainy, low light, haze, and clear. The motivation for this work stems from the need to improve the reliability and efficiency of automated systems, such as autonomous vehicles and surveillance, which must operate under diverse weather conditions. Misclassification of weather conditions can lead to significant performance degradation in these systems, making robust weather classification crucial. Utilizing the Support Vector Machine (SVM) algorithm, our approach leverages a robust set of features, including brightness, saturation, noise level, blur metric, edge strength, motion blur, Local Binary Patterns (LBP) mean and variance for radii 1, 2, and 3, edges mean and variance, and color histogram mean and variance for blue, green, and red channels. Our SVM-based method achieved a notable accuracy of 92.8%, surpassing typical benchmarks in the literature, which range from 80% to 90% for classical machine learning methods. While deep learning methods can achieve up to 94% accuracy, our approach offers a competitive advantage in terms of computational efficiency and real-time classification capabilities. Detailed analysis of each feature's contribution highlights the effectiveness of texture, color, and edge-related features in capturing the unique characteristics of different weather conditions. This research advances the state-of-the-art in weather image classification and provides insights into the critical features necessary for accurate weather condition differentiation, underscoring the potential of SVMs in practical applications where accuracy is paramount.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge