Randy Price

A Note on Dimensionality Reduction in Deep Neural Networks using Empirical Interpolation Method

May 16, 2023

Abstract: The empirical interpolation method (EIM) is a well-known technique for efficiently approximating parameterized functions. This paper proposes using the EIM algorithm to reduce the dimension of the training data in supervised machine learning; the resulting approach is termed DNN-EIM. Applications in data science (e.g., MNIST) and in parameterized (and time-dependent) partial differential equations (PDEs) are considered. For classification, the proposed DNNs are trained in parallel, one for each class. This approach is sequential, i.e., new classes can be added without having to retrain the network. For PDEs, a DNN is designed for each EIM point, and these networks can again be trained in parallel. In all cases, the parallel networks require fewer than ten times the number of training weights. Significant gains in training time are observed, without sacrificing accuracy.
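The abstract does not give implementation details, so the following is only a minimal sketch of how a discrete greedy EIM could select interpolation points from a snapshot matrix and how those points might be used to shrink the input dimension. The function name `eim_points`, the tolerance `tol`, and the test family `exp(-mu * x)` are illustrative assumptions, not taken from the paper.

```python
import numpy as np

def eim_points(snapshots, n_points, tol=1e-12):
    """Greedy discrete EIM on a snapshot matrix of shape (n_grid, n_snapshots).

    Hypothetical helper for illustration; returns the selected grid indices
    and the corresponding EIM basis (one column per selected point).
    """
    n_grid = snapshots.shape[0]
    basis = np.zeros((n_grid, 0))
    points = []
    for _ in range(n_points):
        if points:
            # Interpolate every snapshot at the current points, then take residuals.
            coeffs = np.linalg.solve(basis[points, :], snapshots[points, :])
            residuals = snapshots - basis @ coeffs
        else:
            residuals = snapshots
        # Pick the snapshot that is approximated worst in the max norm.
        worst = int(np.argmax(np.max(np.abs(residuals), axis=0)))
        r = residuals[:, worst]
        # The new interpolation point is where that residual is largest.
        idx = int(np.argmax(np.abs(r)))
        if abs(r[idx]) < tol:
            break  # snapshots are already reproduced to within tol
        points.append(idx)
        # Normalize so the new basis function equals 1 at the new point.
        basis = np.column_stack([basis, r / r[idx]])
    return np.array(points), basis

# Assumed example: snapshots of exp(-mu * x) for several parameter values mu.
x = np.linspace(0.0, 1.0, 200)
mus = np.linspace(1.0, 10.0, 50)
snapshots = np.exp(-np.outer(x, mus))      # shape (200, 50)

points, basis = eim_points(snapshots, n_points=5)
reduced = snapshots[points, :]             # shape (5, 50): inputs sampled at EIM points
```

In this sketch each 200-entry snapshot is replaced by its 5 values at the selected points, which is one plausible reading of "reducing the dimension of the training data"; how the paper assigns networks to classes or to EIM points is not shown here.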

NINNs: Nudging Induced Neural Networks

Mar 15, 2022

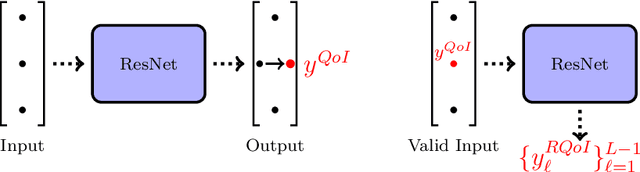

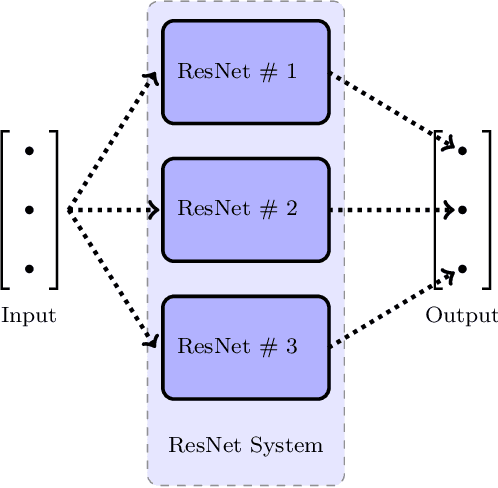

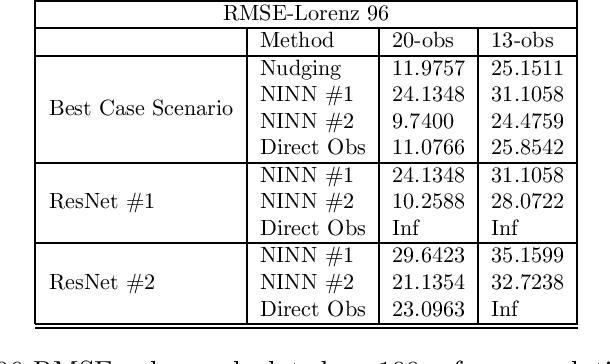

Abstract: New algorithms called nudging induced neural networks (NINNs) are introduced to control and improve the accuracy of deep neural networks (DNNs). The NINNs framework can be applied to almost all pre-existing DNNs with forward propagation, at a cost comparable to that of existing DNNs. NINNs work by adding a feedback control term to the forward propagation of the network; this term nudges the neural network towards a desired quantity of interest. NINNs offer multiple advantages; for instance, they lead to higher accuracy than existing data assimilation algorithms such as nudging. A rigorous convergence analysis is established for NINNs. The algorithmic and theoretical findings are illustrated on examples from data assimilation and chemically reacting flows.
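As a rough illustration of what "adding a feedback control term to the forward propagation" could look like, the sketch below adds a nudging term to a residual-style layer update. The update rule, the function name `forward`, and the parameters `tau` (step size) and `mu` (nudging strength) are assumptions made for this example; the abstract does not state the exact form used in the paper.

```python
import numpy as np

def forward(y0, weights, biases, tau, mu=0.0, target=None):
    """Residual-style forward propagation with an optional nudging term.

    Assumed update per layer (illustrative, not taken from the paper):
        y <- y + tau * (tanh(W y + b) + mu * (target - y))
    With mu = 0 or target = None this is a plain residual network.
    """
    y = np.array(y0, dtype=float)
    for W, b in zip(weights, biases):
        step = np.tanh(W @ y + b)
        if target is not None:
            # Feedback term: pushes the state towards the quantity of interest.
            step = step + mu * (target - y)
        y = y + tau * step
    return y

# Assumed example: 10 layers acting on a 4-dimensional state.
rng = np.random.default_rng(0)
n_layers, dim = 10, 4
weights = [0.1 * rng.standard_normal((dim, dim)) for _ in range(n_layers)]
biases = [np.zeros(dim) for _ in range(n_layers)]
y0 = rng.standard_normal(dim)
target = np.ones(dim)

plain = forward(y0, weights, biases, tau=0.1)
nudged = forward(y0, weights, biases, tau=0.1, mu=5.0, target=target)
```

In this toy form the feedback term costs one extra vector subtraction and scaling per layer. If the layer nonlinearity contributes nothing (all weights and biases zero), the distance to `target` shrinks by a factor of `1 - tau * mu` per layer.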
