Raffaele Tavarone

Small footprint Text-Independent Speaker Verification for Embedded Systems

Nov 03, 2020

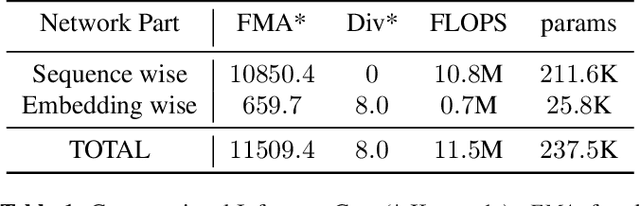

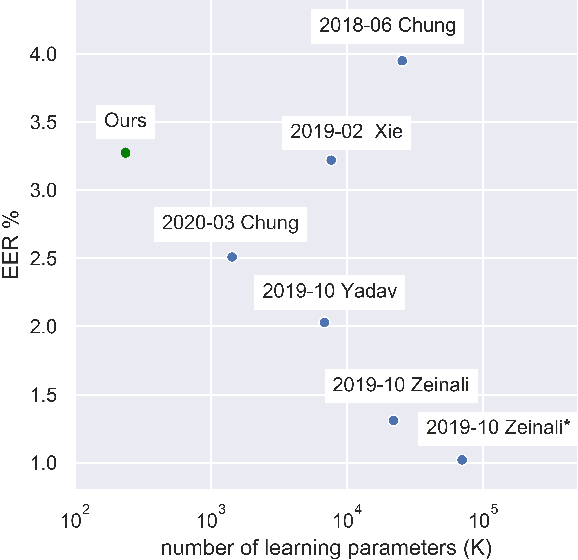

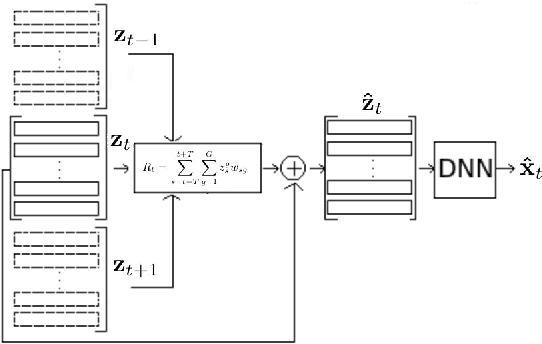

Abstract: Deep neural network approaches to speaker verification have proven successful, but the typical computational requirements of state-of-the-art (SOTA) systems make them unsuited for embedded applications. In this work, we present a two-stage model architecture orders of magnitude smaller than common solutions (237.5K learning parameters, 11.5 MFLOPS) that reaches a competitive 3.31% Equal Error Rate (EER) on the well-established VoxCeleb1 verification test set. We demonstrate that our solution can run on small devices typical of IoT systems, such as the Raspberry Pi 3B, with a latency below 200 ms on a 5 s utterance. Additionally, we evaluate our model on the acoustically challenging VOiCES corpus. We report a limited increase in EER of 2.6 percentage points with respect to the best-scoring model of the 2019 VOiCES from a Distance Challenge, against a 25.6-fold reduction in the number of learning parameters.
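For readers unfamiliar with the metric, the Equal Error Rate is the operating point at which the false acceptance rate equals the false rejection rate. The following is a minimal sketch of how it could be estimated from verification trial scores; it is not the authors' evaluation code. The function name `equal_error_rate`, the threshold sweep over observed scores, and the toy inputs are all illustrative assumptions.

```python
import numpy as np


def equal_error_rate(scores, labels):
    """Estimate the EER from trial scores (hypothetical helper, not from the paper).

    scores: similarity score per trial, higher means "same speaker".
    labels: 1 for target (same-speaker) trials, 0 for impostor trials.
    """
    scores = np.asarray(scores, dtype=float)
    labels = np.asarray(labels, dtype=bool)

    best_gap, eer = np.inf, 1.0
    # Sweep every observed score as a candidate decision threshold.
    for threshold in np.unique(scores):
        accepted = scores >= threshold
        far = np.mean(accepted[~labels])   # impostors wrongly accepted
        frr = np.mean(~accepted[labels])   # targets wrongly rejected
        gap = abs(far - frr)
        if gap < best_gap:
            # Keep the threshold where the two error rates are closest.
            best_gap, eer = gap, (far + frr) / 2.0
    return float(eer)


# Toy trials: one impostor scores above one target, so errors are unavoidable.
toy_scores = [0.9, 0.4, 0.6, 0.1]
toy_labels = [1, 1, 0, 0]
toy_eer = equal_error_rate(toy_scores, toy_labels)
```

With real trial lists the sweep is usually done on a sorted score array or with an ROC routine; the loop above trades speed for readability.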

Improving generalization of vocal tract feature reconstruction: from augmented acoustic inversion to articulatory feature reconstruction without articulatory data

Sep 04, 2018

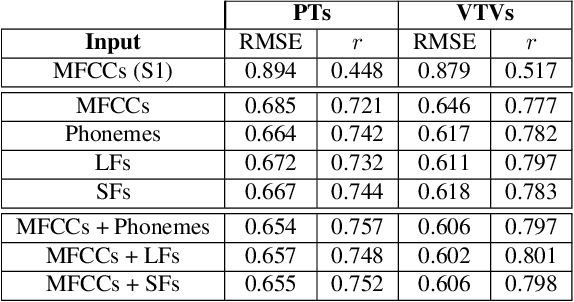

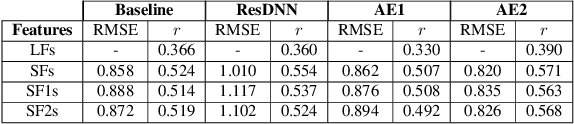

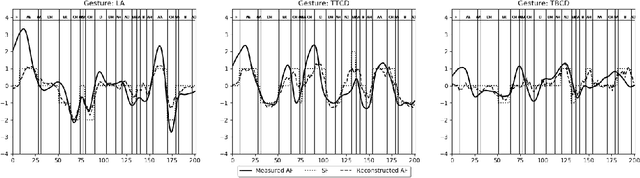

Abstract: We address the problem of reconstructing articulatory movements, given audio and/or phonetic labels. The scarce availability of multi-speaker articulatory data makes it difficult to learn a reconstruction that generalizes to new speakers and across datasets. We first consider the XRMB dataset, where audio, articulatory measurements and phonetic transcriptions are available. We show that phonetic labels, used as input to deep recurrent neural networks that reconstruct articulatory features, are in general more helpful than acoustic features in both matched and mismatched training-testing conditions. In a second experiment, we test a novel approach that attempts to build articulatory features from prior articulatory information extracted from phonetic labels. This approach recovers vocal tract movements directly from an acoustic-only dataset without using any articulatory measurements. Results show that articulatory features generated by this approach reach a Pearson product-moment correlation of up to 0.59 with measured articulatory features.
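The Pearson product-moment correlation used as the evaluation measure is the covariance of two signals divided by the product of their standard deviations. Below is a minimal sketch of the computation for a single articulatory trajectory; the function name `pearson_r` and the toy trajectories are illustrative assumptions, not data or code from the paper.

```python
import numpy as np


def pearson_r(predicted, measured):
    """Pearson correlation between two equal-length 1-D trajectories (hypothetical helper)."""
    x = np.asarray(predicted, dtype=float)
    y = np.asarray(measured, dtype=float)
    # Center both signals so the dot products below are (unnormalized) covariances.
    xc = x - x.mean()
    yc = y - y.mean()
    return float(np.dot(xc, yc) / np.sqrt(np.dot(xc, xc) * np.dot(yc, yc)))


# Toy example: a reconstructed trajectory that is a scaled copy of the measured one
# correlates perfectly, since the measure ignores scale and offset.
measured_toy = [1.0, 2.0, 3.0, 4.0]
reconstructed_toy = [2.0, 4.0, 6.0, 8.0]
r_toy = pearson_r(reconstructed_toy, measured_toy)
```

Because the measure is invariant to scale and offset, it rewards reconstructions that follow the shape of the measured movement even when their absolute amplitude differs.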
