Qiuyue Sun

Navigation by Imitation in a Pedestrian-Rich Environment

Nov 01, 2018

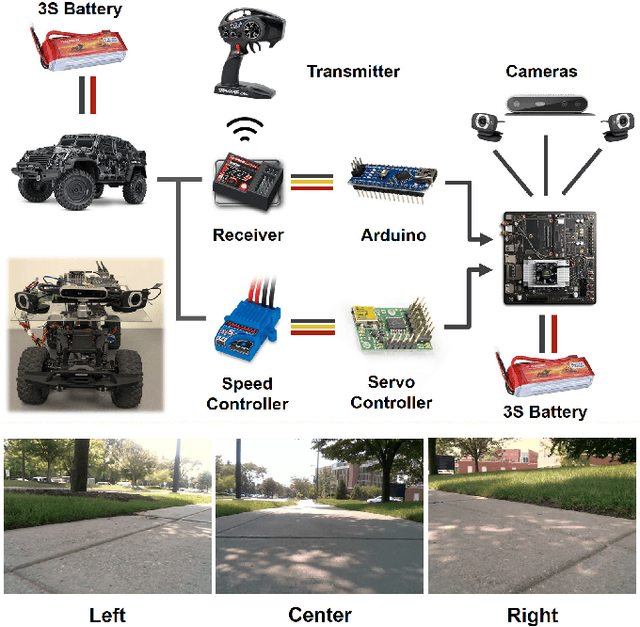

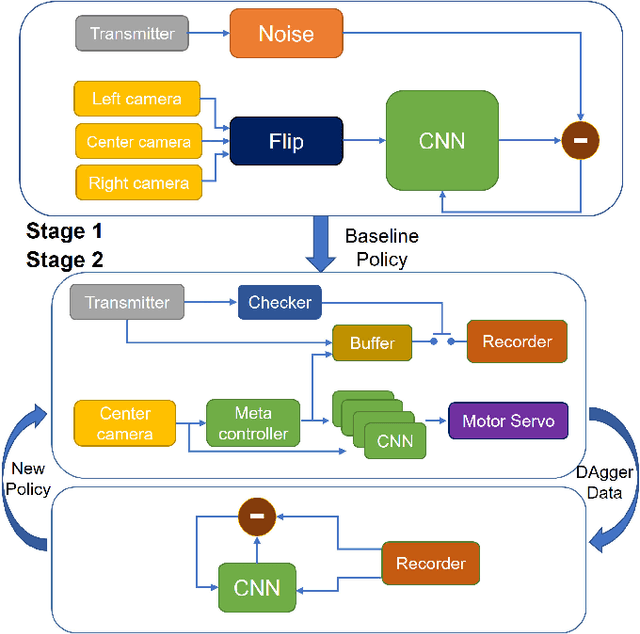

Abstract: Deep neural networks trained on demonstrations of human actions give robots the ability to drive autonomously on the road. However, navigation in a pedestrian-rich environment, such as a campus, remains challenging: a human expert must intervene frequently and take control of the robot from the early steps that lead to a mistake. This places a heavy burden on the design of the learning framework and on data acquisition. In this paper, we propose a new learning-from-intervention Dataset Aggregation (DAgger) algorithm to overcome the limitations of applying imitation learning to navigation in pedestrian-rich environments. Our new learning algorithm implements an error backtrack function that learns effectively from expert interventions. Combining this algorithm with deep convolutional neural networks and a hierarchically nested policy-selection mechanism, we show that our robot can map pixels directly to control commands and navigate successfully in the real world without explicitly modeling pedestrian behavior or the world.
