Puqian Wang

ARO: A New Lens On Matrix Optimization For Large Models

Feb 09, 2026

Abstract: Matrix-based optimizers have attracted growing interest for improving LLM training efficiency, with significant progress centered on orthogonalization/whitening-based methods. While yielding substantial performance gains, a fundamental question arises: can we develop new paradigms beyond orthogonalization, pushing the efficiency frontier further? We present \textbf{Adaptively Rotated Optimization (ARO)}, a new matrix optimization framework that treats gradient rotation as a first-class design principle. ARO accelerates LLM training by performing normed steepest descent in a rotated coordinate system, where the rotation is determined by a novel norm-informed policy. This perspective yields update rules that go beyond existing orthogonalization and whitening optimizers, improving sample efficiency in practice. To make comparisons reliable, we propose a rigorously controlled benchmarking protocol that reduces confounding and bias. Under this protocol, ARO consistently outperforms AdamW (by $1.3\sim1.35\times$) and orthogonalization methods (by $1.1\sim1.15\times$) in LLM pretraining at up to 8B activated parameters, and up to $8\times$ overtrain budget, without evidence of diminishing returns. Finally, we discuss how ARO can be reformulated as a symmetry-aware optimizer grounded in rotational symmetries of residual streams, motivating advanced designs that enable computationally efficient exploitation of cross-layer/cross-module couplings.
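As a rough illustration of the phrase "normed steepest descent in a rotated coordinate system", the sketch below rotates a gradient matrix, takes a steepest-descent direction under a chosen norm, and rotates back. It is not the ARO update rule: the abstract does not specify the rotation policy, so the orthogonal matrix `R` is simply passed in, and the helper names (`rotated_steepest_descent`, `random_orthogonal`) are hypothetical. The two direction maps are the standard steepest-descent directions for the spectral norm (orthogonalization) and the entrywise max norm (sign).

```python
import numpy as np

def orthogonalize(G):
    """Steepest-descent direction under the spectral norm: G = U S V^T -> U V^T."""
    U, _, Vt = np.linalg.svd(G, full_matrices=False)
    return U @ Vt

def sign_direction(G):
    """Steepest-descent direction under the entrywise max norm."""
    return np.sign(G)

def rotated_steepest_descent(G, R, direction_fn):
    """Apply direction_fn in coordinates rotated by R, then rotate back.

    R is an orthogonal matrix supplied by the caller (an assumption here;
    the abstract's norm-informed rotation policy is not reproduced).
    """
    return R @ direction_fn(R.T @ G)

def random_orthogonal(n, seed=0):
    """Random orthogonal matrix via QR, used only for illustration."""
    rng = np.random.default_rng(seed)
    Q, _ = np.linalg.qr(rng.normal(size=(n, n)))
    return Q

rng = np.random.default_rng(1)
G = rng.normal(size=(4, 3))
R = random_orthogonal(4)

# Orthogonalization is unchanged by the rotation; the sign map is not.
same_orth = np.allclose(rotated_steepest_descent(G, R, orthogonalize), orthogonalize(G))
same_sign = np.allclose(rotated_steepest_descent(G, R, sign_direction), sign_direction(G))
```

One observation from this toy setup: orthogonalization is invariant to the choice of `R`, so a rotation only changes the update when the underlying norm is not rotation-invariant.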

Robustly Learning Monotone Single-Index Models

Aug 06, 2025

Abstract: We consider the basic problem of learning Single-Index Models with respect to the square loss under the Gaussian distribution in the presence of adversarial label noise. Our main contribution is the first computationally efficient algorithm for this learning task, achieving a constant factor approximation, that succeeds for the class of {\em all} monotone activations with bounded moment of order $2 + \zeta,$ for $\zeta > 0.$ This class in particular includes all monotone Lipschitz functions and even discontinuous functions like (possibly biased) halfspaces. Prior work for the case of unknown activation either does not attain constant factor approximation or succeeds for a substantially smaller family of activations. The main conceptual novelty of our approach lies in developing an optimization framework that steps outside the boundaries of usual gradient methods and instead identifies a useful vector field to guide the algorithm updates by directly leveraging the problem structure, properties of Gaussian spaces, and regularity of monotone functions.

Robustly Learning Monotone Generalized Linear Models via Data Augmentation

Feb 12, 2025

Abstract: We study the task of learning Generalized Linear Models (GLMs) in the agnostic model under the Gaussian distribution. We give the first polynomial-time algorithm that achieves a constant-factor approximation for \textit{any} monotone Lipschitz activation. Prior constant-factor GLM learners succeed for a substantially smaller class of activations. Our work resolves a well-known open problem by developing a robust counterpart to the classical GLMtron algorithm (Kakade et al., 2011). Our robust learner applies more generally, encompassing all monotone activations with bounded $(2+\zeta)$-moments, for any fixed $\zeta>0$ -- a condition that is essentially necessary. To obtain our results, we leverage a novel data augmentation technique with decreasing Gaussian noise injection and prove a number of structural results that may be useful in other settings.
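For readers unfamiliar with the classical GLMtron iteration that the abstract refers to, a minimal sketch follows. It shows only the classical update with a known monotone link, $\mathbf{w} \leftarrow \mathbf{w} + \frac{1}{m}\sum_i (y_i - u(\mathbf{w}\cdot\mathbf{x}_i))\,\mathbf{x}_i$, on noiseless synthetic Gaussian data. It is not the robust learner or the data augmentation scheme from the paper, and the function names, sample size, dimension, and iteration count are illustrative assumptions.

```python
import numpy as np

def glmtron(X, y, u, n_iters=200):
    """Classical GLMtron iteration for a known monotone link u.

    Each step adds the average of residual * x to the current weights.
    """
    m, d = X.shape
    w = np.zeros(d)
    for _ in range(n_iters):
        residual = y - u(X @ w)
        w = w + (X.T @ residual) / m
    return w

def demo(seed=0, m=5000, d=5):
    """Noiseless ReLU example with standard Gaussian inputs (assumed setup)."""
    rng = np.random.default_rng(seed)
    w_star = rng.normal(size=d)
    w_star /= np.linalg.norm(w_star)  # hidden unit vector
    X = rng.normal(size=(m, d))
    relu = lambda z: np.maximum(z, 0.0)
    y = relu(X @ w_star)              # labels without any noise
    w_hat = glmtron(X, y, relu)
    return w_star, w_hat

w_star, w_hat = demo()
recovery_error = np.linalg.norm(w_hat - w_star)
```

In this realizable setting the iteration recovers the hidden direction; the agnostic setting studied in the abstract, where labels may be adversarially corrupted, is exactly where this plain update is not enough.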

Sample and Computationally Efficient Robust Learning of Gaussian Single-Index Models

Nov 08, 2024

Abstract: A single-index model (SIM) is a function of the form $\sigma(\mathbf{w}^{\ast} \cdot \mathbf{x})$, where $\sigma: \mathbb{R} \to \mathbb{R}$ is a known link function and $\mathbf{w}^{\ast}$ is a hidden unit vector. We study the task of learning SIMs in the agnostic (a.k.a. adversarial label noise) model with respect to the $L^2_2$-loss under the Gaussian distribution. Our main result is a sample and computationally efficient agnostic proper learner that attains $L^2_2$-error of $O(\mathrm{OPT})+\epsilon$, where $\mathrm{OPT}$ is the optimal loss. The sample complexity of our algorithm is $\tilde{O}(d^{\lceil k^{\ast}/2\rceil}+d/\epsilon)$, where $k^{\ast}$ is the information-exponent of $\sigma$ corresponding to the degree of its first non-zero Hermite coefficient. This sample bound nearly matches known CSQ lower bounds, even in the realizable setting. Prior algorithmic work in this setting had focused on learning in the realizable case or in the presence of semi-random noise. Prior computationally efficient robust learners required significantly stronger assumptions on the link function.
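The information exponent $k^{\ast}$ mentioned above can be estimated numerically for a given link function. The sketch below computes normalized probabilists' Hermite coefficients $c_k = \mathbb{E}_{z \sim \mathcal{N}(0,1)}[\sigma(z)\, He_k(z)/\sqrt{k!}]$ by Gauss-Hermite quadrature and returns the smallest degree with a non-zero coefficient. Two assumptions are made that the abstract does not state: the search starts at degree 1 (the constant term is skipped), and "non-zero" is decided by a numerical tolerance. The function names and defaults are illustrative, and the quadrature is only reliable for smooth links.

```python
import math
import numpy as np
from numpy.polynomial.hermite_e import hermegauss, hermeval

def hermite_coefficients(sigma, max_degree=6, n_nodes=80):
    """Normalized Hermite coefficients of sigma under the standard Gaussian."""
    nodes, weights = hermegauss(n_nodes)
    weights = weights / math.sqrt(2.0 * math.pi)  # weights now sum to 1
    values = sigma(nodes)
    coeffs = []
    for k in range(max_degree + 1):
        basis = np.zeros(k + 1)
        basis[k] = 1.0                            # selects He_k
        he_k = hermeval(nodes, basis) / math.sqrt(math.factorial(k))
        coeffs.append(float(np.sum(weights * values * he_k)))
    return np.array(coeffs)

def information_exponent(sigma, max_degree=6, tol=1e-8):
    """Smallest degree k >= 1 whose coefficient exceeds tol, else None."""
    coeffs = hermite_coefficients(sigma, max_degree)
    for k in range(1, max_degree + 1):
        if abs(coeffs[k]) > tol:
            return k
    return None

k_cubic = information_exponent(lambda z: z**3 - 3.0 * z)  # sigma = He_3
k_quad = information_exponent(lambda z: z**2 - 1.0)       # sigma = He_2
k_tanh = information_exponent(np.tanh)
```

With these values, the leading term $d^{\lceil k^{\ast}/2\rceil}$ of the stated sample complexity is $d$ for $k^{\ast} \in \{1, 2\}$ and $d^2$ for $k^{\ast} = 3$.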

Robustly Learning Single-Index Models via Alignment Sharpness

Feb 27, 2024

Abstract: We study the problem of learning Single-Index Models under the $L_2^2$ loss in the agnostic model. We give an efficient learning algorithm, achieving a constant factor approximation to the optimal loss, that succeeds under a range of distributions (including log-concave distributions) and a broad class of monotone and Lipschitz link functions. This is the first efficient constant factor approximate agnostic learner, even for Gaussian data and for any nontrivial class of link functions. Prior work for the case of unknown link function either works in the realizable setting or does not attain constant factor approximation. The main technical ingredient enabling our algorithm and analysis is a novel notion of a local error bound in optimization that we term alignment sharpness and that may be of broader interest.

Near-Optimal Bounds for Learning Gaussian Halfspaces with Random Classification Noise

Jul 13, 2023

Abstract: We study the problem of learning general (i.e., not necessarily homogeneous) halfspaces with Random Classification Noise under the Gaussian distribution. We establish nearly-matching algorithmic and Statistical Query (SQ) lower bound results revealing a surprising information-computation gap for this basic problem. Specifically, the sample complexity of this learning problem is $\widetilde{\Theta}(d/\epsilon)$, where $d$ is the dimension and $\epsilon$ is the excess error. Our positive result is a computationally efficient learning algorithm with sample complexity $\tilde{O}(d/\epsilon + d/(\max\{p, \epsilon\})^2)$, where $p$ quantifies the bias of the target halfspace. On the lower bound side, we show that any efficient SQ algorithm (or low-degree test) for the problem requires sample complexity at least $\Omega(d^{1/2}/(\max\{p, \epsilon\})^2)$. Our lower bound suggests that this quadratic dependence on $1/\epsilon$ is inherent for efficient algorithms.

Information-Computation Tradeoffs for Learning Margin Halfspaces with Random Classification Noise

Jun 28, 2023

Abstract: We study the problem of PAC learning $\gamma$-margin halfspaces with Random Classification Noise. We establish an information-computation tradeoff suggesting an inherent gap between the sample complexity of the problem and the sample complexity of computationally efficient algorithms. Concretely, the sample complexity of the problem is $\widetilde{\Theta}(1/(\gamma^2 \epsilon))$. We start by giving a simple efficient algorithm with sample complexity $\widetilde{O}(1/(\gamma^2 \epsilon^2))$. Our main result is a lower bound for Statistical Query (SQ) algorithms and low-degree polynomial tests suggesting that the quadratic dependence on $1/\epsilon$ in the sample complexity is inherent for computationally efficient algorithms. Specifically, our results imply a lower bound of $\widetilde{\Omega}(1/(\gamma^{1/2} \epsilon^2))$ on the sample complexity of any efficient SQ learner or low-degree test.

Robustly Learning a Single Neuron via Sharpness

Jun 13, 2023

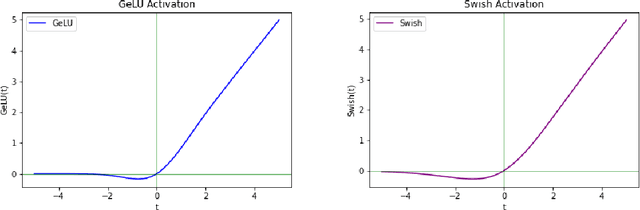

Abstract: We study the problem of learning a single neuron with respect to the $L_2^2$-loss in the presence of adversarial label noise. We give an efficient algorithm that, for a broad family of activations including ReLUs, approximates the optimal $L_2^2$-error within a constant factor. Our algorithm applies under much milder distributional assumptions compared to prior work. The key ingredient enabling our results is a novel connection to local error bounds from optimization theory.

Potential Function-based Framework for Making the Gradients Small in Convex and Min-Max Optimization

Jan 28, 2021

Abstract: Making the gradients small is a fundamental optimization problem that has eluded unifying and simple convergence arguments in first-order optimization, so far primarily reserved for other convergence criteria, such as reducing the optimality gap. We introduce a novel potential function-based framework to study the convergence of standard methods for making the gradients small in smooth convex optimization and convex-concave min-max optimization. Our framework is intuitive and it provides a lens for viewing algorithms that make the gradients small as being driven by a trade-off between reducing either the gradient norm or a certain notion of an optimality gap. On the lower bounds side, we discuss tightness of the obtained convergence results for the convex setup and provide a new lower bound for minimizing the norm of cocoercive operators that allows us to argue about optimality of methods in the min-max setup.
