Pranali Sancheti

Temporal View Synthesis of Dynamic Scenes through 3D Object Motion Estimation with Multi-Plane Images

Aug 19, 2022

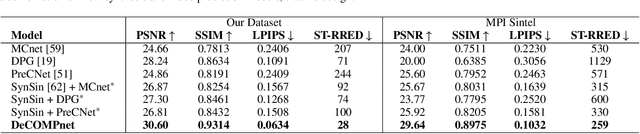

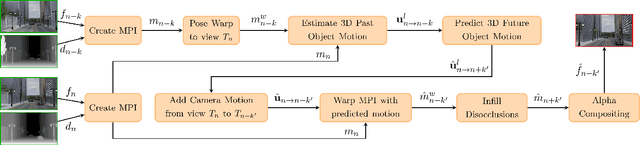

Abstract:The challenge of graphically rendering high frame-rate videos on low compute devices can be addressed through periodic prediction of future frames to enhance the user experience in virtual reality applications. This is studied through the problem of temporal view synthesis (TVS), where the goal is to predict the next frames of a video given the previous frames and the head poses of the previous and the next frames. In this work, we consider the TVS of dynamic scenes in which both the user and objects are moving. We design a framework that decouples the motion into user and object motion to effectively use the available user motion while predicting the next frames. We predict the motion of objects by isolating and estimating the 3D object motion in the past frames and then extrapolating it. We employ multi-plane images (MPI) as a 3D representation of the scenes and model the object motion as the 3D displacement between the corresponding points in the MPI representation. In order to handle the sparsity in MPIs while estimating the motion, we incorporate partial convolutions and masked correlation layers to estimate corresponding points. The predicted object motion is then integrated with the given user or camera motion to generate the next frame. Using a disocclusion infilling module, we synthesize the regions uncovered due to the camera and object motion. We develop a new synthetic dataset for TVS of dynamic scenes consisting of 800 videos at full HD resolution. We show through experiments on our dataset and the MPI Sintel dataset that our model outperforms all the competing methods in the literature.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge