Peter U. Diehl

Learning and Inferring Relations in Cortical Networks

Aug 29, 2016

Abstract: A pressing scientific challenge is to understand how brains work. Of particular interest is the neocortex, the part of the brain that is especially large in humans, capable of handling a wide variety of tasks including visual, auditory, language, motor, and abstract processing. These functionalities are processed in different self-organized regions of the neocortical sheet, and yet the anatomical structure carrying out the processing is relatively uniform across the sheet. We are at a loss to explain, simulate, or understand such a multi-functional homogeneous sheet-like computational structure: we do not have computational models which work in this way. Here we present an important step towards developing such models: we show how uniform modules of excitatory and inhibitory neurons can be connected bidirectionally in a network that, when exposed to input in the form of population codes, learns the input encodings as well as the relationships between the inputs. STDP learning rules lead the modules to self-organize into a relational network, which is able to infer missing inputs, restore noisy signals, decide between conflicting inputs, and combine cues to improve estimates. These networks show that it is possible for a homogeneous network of spiking units to self-organize so as to provide meaningful processing of its inputs. If such networks can be scaled up, they could provide an initial computational model relevant to the large-scale anatomy of the neocortex.

Conversion of Artificial Recurrent Neural Networks to Spiking Neural Networks for Low-power Neuromorphic Hardware

Jan 16, 2016

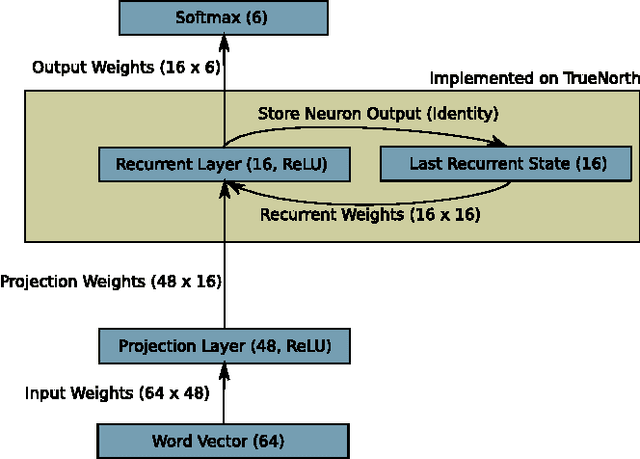

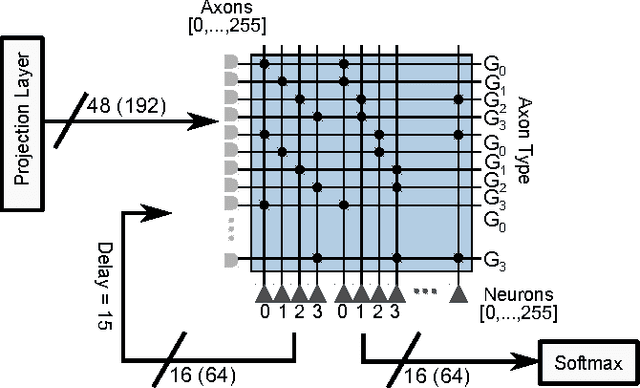

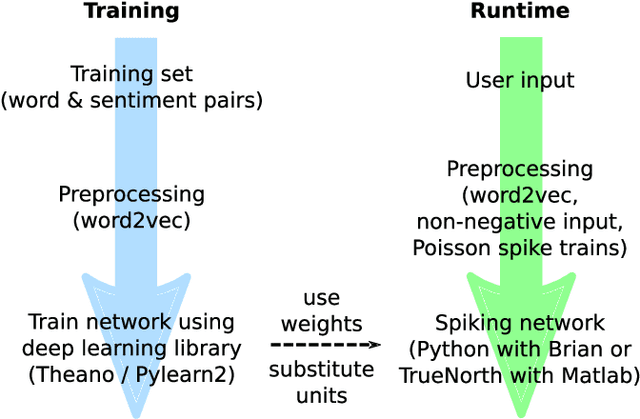

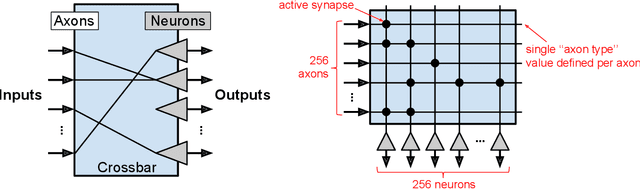

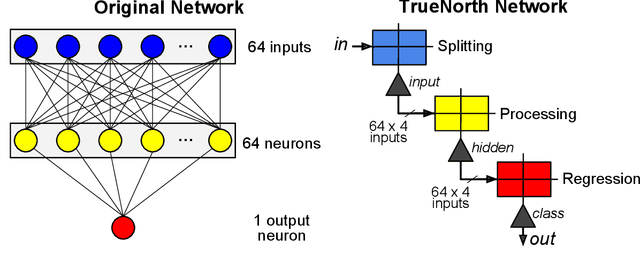

Abstract: In recent years, the field of neuromorphic low-power systems, which consume orders of magnitude less power, has gained significant momentum. However, their wider use is still hindered by the lack of algorithms that can harness the strengths of such architectures. While neuromorphic adaptations of representation learning algorithms are now emerging, efficient processing of temporal sequences or variable-length inputs remains difficult. Recurrent neural networks (RNNs) are widely used in machine learning to solve a variety of sequence learning tasks. In this work we present a train-and-constrain methodology that enables the mapping of machine-learned (Elman) RNNs onto a substrate of spiking neurons, while being compatible with the capabilities of current and near-future neuromorphic systems. This "train-and-constrain" method consists of first training RNNs using backpropagation through time, then discretizing the weights, and finally converting them to spiking RNNs by matching the responses of artificial neurons with those of the spiking neurons. We demonstrate our approach on a natural language processing task (question classification), showing the entire mapping process of the recurrent layer of the network onto IBM's Neurosynaptic System "TrueNorth", a spike-based digital neuromorphic hardware architecture. TrueNorth imposes specific constraints on connectivity and on neural and synaptic parameters. To satisfy these constraints, it was necessary to discretize the synaptic weights and neural activities to 16 levels, and to limit fan-in to 64 inputs. We find that short synaptic delays are sufficient to implement the dynamical (temporal) aspect of the RNN in the question classification task. The hardware-constrained model achieved 74% accuracy in question classification while using less than 0.025% of the cores on one TrueNorth chip, resulting in an estimated power consumption of ~17 µW.
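As a rough illustration of the weight-discretization step mentioned in the abstract, the sketch below snaps a weight matrix to 16 evenly spaced levels. The abstract does not say which quantization scheme was used, so uniform spacing over the observed weight range is an assumption, and `discretize_uniform` is a hypothetical helper name, not code from the paper.

```python
import numpy as np

def discretize_uniform(w, levels=16):
    """Snap each entry of w to the nearest of `levels` evenly spaced values.

    Assumption: the levels span [w.min(), w.max()] uniformly. The paper's
    actual discretization scheme is not described in the abstract.
    """
    w = np.asarray(w, dtype=float)
    lo, hi = w.min(), w.max()
    if hi == lo:
        # All weights are identical, so there is nothing to quantize.
        return w.copy()
    step = (hi - lo) / (levels - 1)
    # Index of the nearest level for every weight, kept inside [0, levels - 1].
    idx = np.clip(np.rint((w - lo) / step), 0, levels - 1)
    return lo + idx * step

# Illustrative random weights standing in for a trained recurrent layer.
rng = np.random.default_rng(0)
weights = rng.normal(size=(8, 8))
quantized = discretize_uniform(weights, levels=16)
max_error = np.abs(quantized - weights).max()
```

With this scheme the rounding error per weight is at most half the spacing between levels, which is one way to reason about how much accuracy the constrained network might lose.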

TrueHappiness: Neuromorphic Emotion Recognition on TrueNorth

Jan 16, 2016

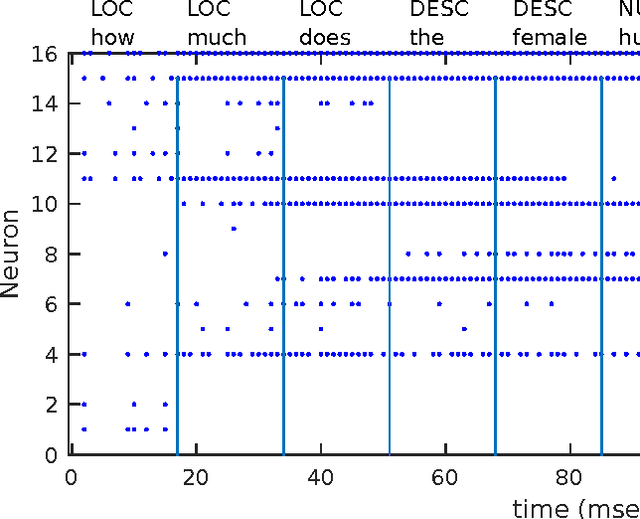

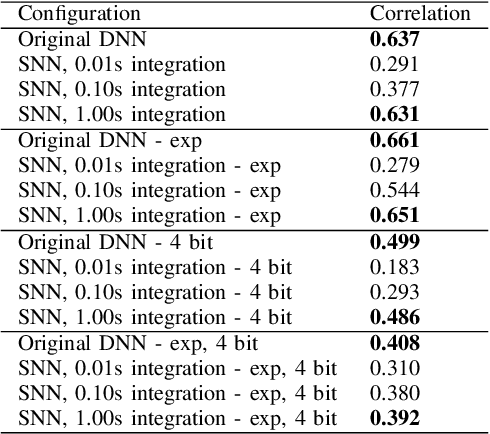

Abstract: We present an approach to constructing a neuromorphic device that responds to language input by producing neuron spikes in proportion to the strength of the appropriate positive or negative emotional response. Specifically, we perform a fine-grained sentiment analysis task with implementations on two different systems: one using conventional spiking neural network (SNN) simulators and the other using IBM's Neurosynaptic System TrueNorth. Input words are projected into a high-dimensional semantic space and processed through a fully-connected neural network (FCNN) containing rectified linear units (ReLUs) trained via backpropagation. After training, this FCNN is converted to an SNN by substituting the ReLUs with integrate-and-fire neurons. We show that there is practically no performance loss due to conversion to a spiking network on a sentiment analysis test set, i.e. correlations between predictions and human annotations differ by less than 0.02 comparing the original DNN and its spiking equivalent. Additionally, we show that the SNN generated with this technique can be mapped to existing neuromorphic hardware -- in our case, the TrueNorth chip. Mapping to the chip involves 4-bit synaptic weight discretization and adjustment of the neuron thresholds. The resulting end-to-end system can take a user input, i.e. a word in a vocabulary of over 300,000 words, and estimate its sentiment on TrueNorth with a power consumption of approximately 50 µW.
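To make the ReLU-to-spiking substitution more concrete, the following minimal sketch shows why the firing rate of an integrate-and-fire neuron can stand in for a ReLU activation. It assumes a constant input drive per time step, a threshold of 1.0, and reset by subtraction; none of these details are given in the abstract, and `if_spike_count` is a hypothetical name.

```python
def relu(x):
    """Rectified linear unit."""
    return max(0.0, x)

def if_spike_count(drive, steps=1000, threshold=1.0):
    """Count spikes of a non-leaky integrate-and-fire neuron.

    Assumptions (not from the abstract): constant input `drive` per step,
    reset by subtracting the threshold, at most one spike per step.
    """
    v = 0.0       # membrane potential
    spikes = 0
    for _ in range(steps):
        v += drive
        if v >= threshold:
            v -= threshold
            spikes += 1
    return spikes

# Compare the firing rate with the ReLU output for a few drives in [-1, 1].
steps = 1000
for drive in (-0.3, 0.0, 0.25, 0.5, 0.8):
    rate = if_spike_count(drive, steps=steps) / steps
    print(f"drive={drive:+.2f}  relu={relu(drive):.3f}  rate={rate:.3f}")
```

In this toy setting the rate tracks the ReLU output for drives between 0 and the threshold and is zero for negative drives. It saturates at one spike per step for larger drives, which is one reason threshold adjustment, as mentioned in the abstract, matters when mapping a trained network.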
