Pengtao Zhang

LongCat-Flash-Thinking-2601 Technical Report

Jan 23, 2026Abstract:We introduce LongCat-Flash-Thinking-2601, a 560-billion-parameter open-source Mixture-of-Experts (MoE) reasoning model with superior agentic reasoning capability. LongCat-Flash-Thinking-2601 achieves state-of-the-art performance among open-source models on a wide range of agentic benchmarks, including agentic search, agentic tool use, and tool-integrated reasoning. Beyond benchmark performance, the model demonstrates strong generalization to complex tool interactions and robust behavior under noisy real-world environments. Its advanced capability stems from a unified training framework that combines domain-parallel expert training with subsequent fusion, together with an end-to-end co-design of data construction, environments, algorithms, and infrastructure spanning from pre-training to post-training. In particular, the model's strong generalization capability in complex tool-use are driven by our in-depth exploration of environment scaling and principled task construction. To optimize long-tailed, skewed generation and multi-turn agentic interactions, and to enable stable training across over 10,000 environments spanning more than 20 domains, we systematically extend our asynchronous reinforcement learning framework, DORA, for stable and efficient large-scale multi-environment training. Furthermore, recognizing that real-world tasks are inherently noisy, we conduct a systematic analysis and decomposition of real-world noise patterns, and design targeted training procedures to explicitly incorporate such imperfections into the training process, resulting in improved robustness for real-world applications. To further enhance performance on complex reasoning tasks, we introduce a Heavy Thinking mode that enables effective test-time scaling by jointly expanding reasoning depth and width through intensive parallel thinking.

MemoNet:Memorizing Representations of All Cross Features Efficiently via Multi-Hash Codebook Network for CTR Prediction

Nov 03, 2022

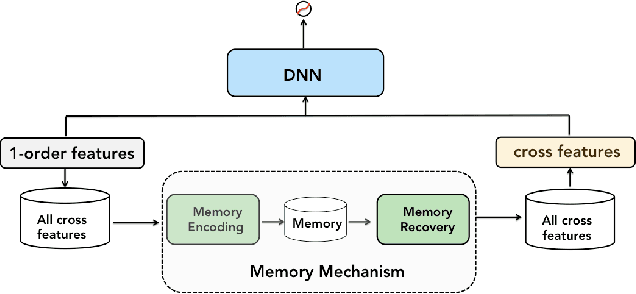

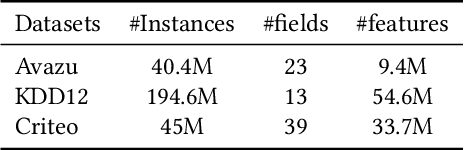

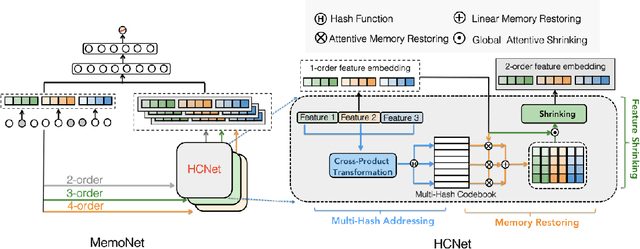

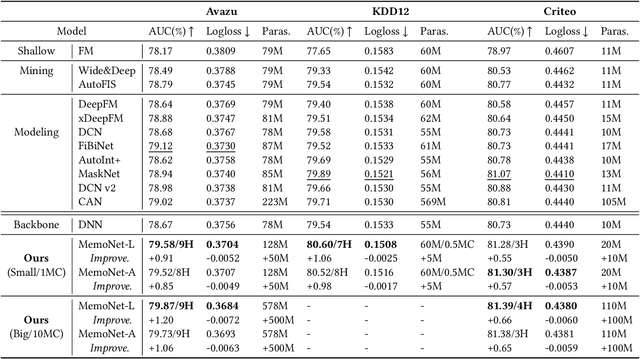

Abstract:New findings in natural language processing(NLP) demonstrate that the strong memorization capability contributes a lot to the success of large language models.This inspires us to explicitly bring an independent memory mechanism into CTR ranking model to learn and memorize all cross features'representations. In this paper,we propose multi-Hash Codebook NETwork(HCNet) as the memory mechanism for efficiently learning and memorizing representations of all cross features in CTR tasks.HCNet uses multi-hash codebook as the main memory place and the whole memory procedure consists of three phases: multi-hash addressing,memory restoring and feature shrinking.HCNet can be regarded as a general module and can be incorporated into any current deep CTR model.We also propose a new CTR model named MemoNet which combines HCNet with a DNN backbone.Extensive experimental results on three public datasets show that MemoNet reaches superior performance over state-of-the-art approaches and validate the effectiveness of HCNet as a strong memory module.Besides, MemoNet shows the prominent feature of big models in NLP,which means we can enlarge the size of codebook in HCNet to sustainably obtain performance gains.Our work demonstrates the importance and feasibility of learning and memorizing representations of all cross features ,which sheds light on a new promising research direction.

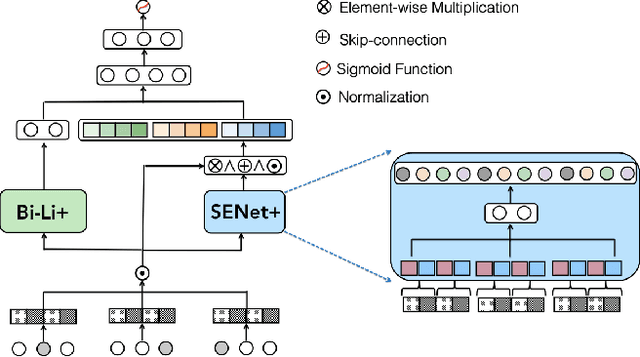

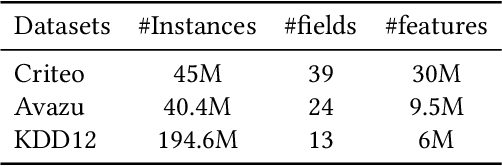

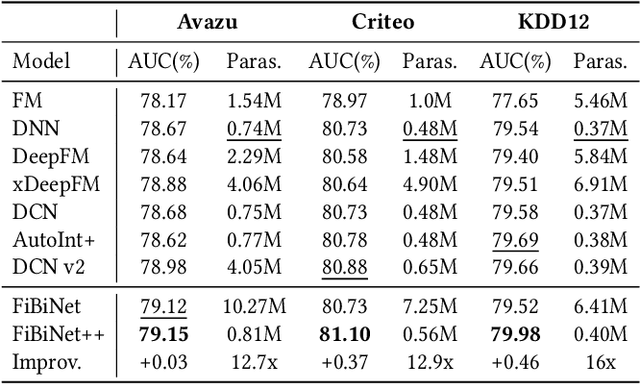

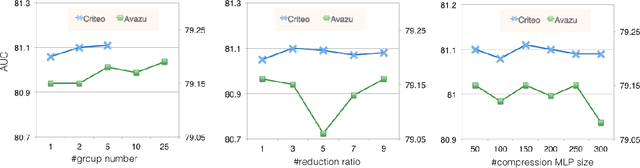

FiBiNet++:Improving FiBiNet by Greatly Reducing Model Size for CTR Prediction

Sep 12, 2022

Abstract:Click-Through Rate(CTR) estimation has become one of the most fundamental tasks in many real-world applications and various deep models have been proposed to resolve this problem. Some research has proved that FiBiNet is one of the best performance models and outperforms all other models on Avazu dataset.However, the large model size of FiBiNet hinders its wider applications.In this paper, we propose a novel FiBiNet++ model to redesign FiBiNet's model structure ,which greatly reducess model size while further improves its performance.Extensive experiments on three public datasets show that FiBiNet++ effectively reduces non-embedding model parameters of FiBiNet by 12x to 16x on three datasets and has comparable model size with DNN model which is the smallest one among deep CTR models.On the other hand, FiBiNet++ leads to significant performance improvements compared to state-of-the-art CTR methods,including FiBiNet.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge