Peiqi Xia

LFMamba: Light Field Image Super-Resolution with State Space Model

Jun 18, 2024

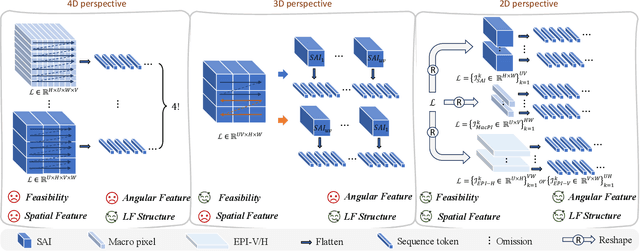

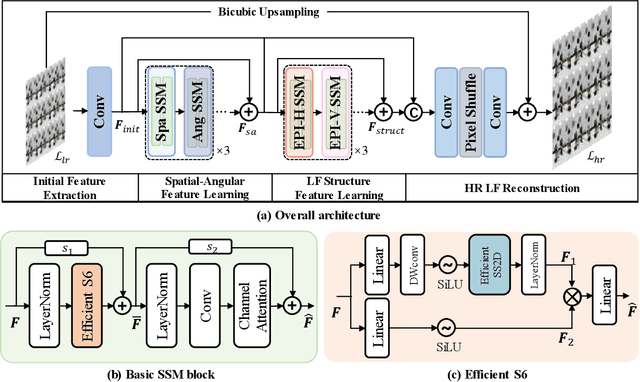

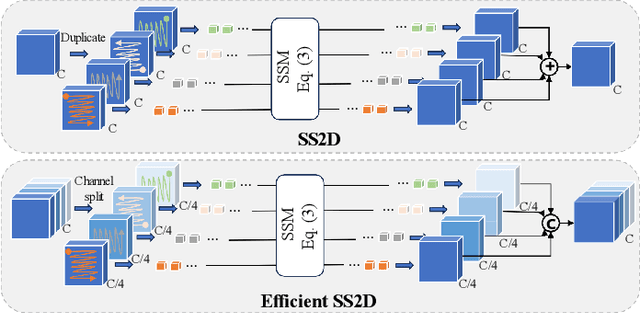

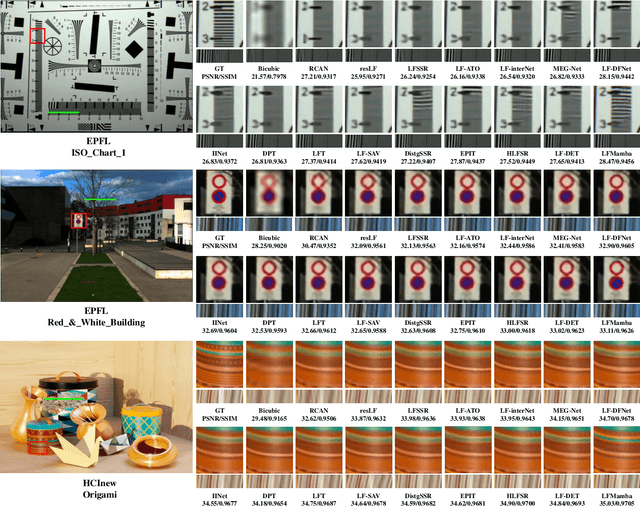

Abstract: Recent years have witnessed significant advances in light field image super-resolution (LFSR), driven by progress in modern neural networks. However, existing methods either struggle to capture long-range dependencies (CNN-based) or incur quadratic computational complexity (Transformer-based), which limits their performance. Recently, the State Space Model (SSM) with a selective scanning mechanism (S6), exemplified by Mamba, has emerged as a superior alternative to traditional CNN- and Transformer-based approaches in various vision tasks, benefiting from its effective long-range sequence modeling capability and linear-time complexity. Integrating S6 into LFSR is therefore compelling, especially given the vast data volume of 4D light fields (LFs). The primary challenge lies in *designing an appropriate scanning method for 4D light fields that effectively models light field features*. To tackle this, we apply SSMs to the informative 2D slices of 4D LFs to fully explore spatial contextual information, complementary angular information, and structure information. To this end, we devise a basic SSM block built on an efficient SS2D mechanism that enables more effective and efficient feature learning on these 2D slices. Building on these two designs, we introduce an SSM-based network for LFSR termed LFMamba. Experimental results on LF benchmarks demonstrate the superior performance of LFMamba, and extensive ablation studies validate the efficacy and generalization ability of the proposed method. We expect LFMamba to shed light on effective representation learning of LFs with state space models.
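To make the idea of "2D slices of a 4D light field" concrete, here is a minimal NumPy sketch. It is an illustration under stated assumptions, not the authors' implementation. The abstract does not say which slices are used, so the sketch assumes the common choice of spatial slices (sub-aperture images), angular slices (macro-pixels), and epipolar-plane images (EPIs). The `(U, V, H, W)` layout, the function names `lf_slices` and `scan_orders`, and the four scan orders (row-major, column-major, and their reversals) are likewise hypothetical stand-ins for how an SS2D-style scan could flatten a 2D slice into 1D sequences.

```python
import numpy as np

def lf_slices(lf):
    """Split a 4D light field of assumed shape (U, V, H, W) into stacks of 2D slices.

    U, V are angular dimensions; H, W are spatial dimensions (an assumed layout).
    """
    U, V, H, W = lf.shape
    # Spatial slices: one H x W sub-aperture image per view (u, v).
    spatial = lf.reshape(U * V, H, W)
    # Angular slices: one U x V macro-pixel per spatial location (h, w).
    angular = lf.transpose(2, 3, 0, 1).reshape(H * W, U, V)
    # Horizontal EPIs: fix (u, h), keep the V x W plane.
    epi_h = lf.transpose(0, 2, 1, 3).reshape(U * H, V, W)
    # Vertical EPIs: fix (v, w), keep the U x H plane.
    epi_v = lf.transpose(1, 3, 0, 2).reshape(V * W, U, H)
    return spatial, angular, epi_h, epi_v

def scan_orders(slice_2d):
    """Flatten one 2D slice into four 1D sequences (assumed SS2D-style scan paths)."""
    row_major = slice_2d.reshape(-1)
    col_major = slice_2d.T.reshape(-1)
    # Forward and reversed traversals along rows and columns.
    return np.stack([row_major, col_major, row_major[::-1], col_major[::-1]])

# Toy 5x5-view light field with 32x32 pixels per view.
lf = np.random.rand(5, 5, 32, 32)
spatial, angular, epi_h, epi_v = lf_slices(lf)
sequences = scan_orders(spatial[0])  # shape (4, 32 * 32)
```

In a real model each 1D sequence would be fed to a selective SSM and the outputs merged back onto the 2D grid; that step is omitted here because it depends on architectural details the abstract does not give.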
