Pawel Romanczuk

Fundamental Visual Navigation Algorithms: Indirect Sequential, Biased Diffusive, & Direct Pathing

Jul 18, 2024

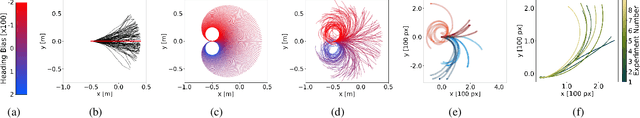

Abstract: Effective foraging in a predictable local environment requires coordinating movement with observable spatial context: in a word, navigation. Distinct from search, navigating to specific areas known to be valuable entails its own particularities. How space is understood through vision and parsed for navigation is often examined experimentally, with limited ability to manipulate sensory inputs and probe into the algorithmic level of decision-making. As a generalizable, minimal alternative to empirical means, we evolve and study embodied neural networks to explore information processing algorithms an organism may use for visual spatial navigation. Surprisingly, three distinct classes of algorithms emerged, each with its own set of rules and tradeoffs, and each appears to be highly relevant to observable biological navigation behaviors.

Purely vision-based collective movement of robots

Jun 24, 2024

Abstract: Collective movement inspired by animal groups promises inherited benefits for robot swarms, such as enhanced sensing and efficiency. However, while animals move in groups using only their local senses, robots often obey central control or use direct communication, introducing systemic weaknesses to the swarm. In the hope of addressing such vulnerabilities, developing bio-inspired decentralized swarms has been a major focus in recent decades. Yet, creating robots that move efficiently together using only local sensory information remains an extraordinary challenge. In this work, we present a decentralized, purely vision-based swarm of terrestrial robots. Within this novel framework robots achieve collisionless, polarized motion exclusively through minimal visual interactions, computing everything on board based on their individual camera streams, making central processing or direct communication obsolete. With agent-based simulations, we further show that using this model, even with a strictly limited field of view and within confined spaces, ordered group motion can emerge, while also highlighting key limitations. Our results offer a multitude of practical applications from hybrid societies coordinating collective movement without any common communication protocol, to advanced, decentralized vision-based robot swarms capable of diverse tasks in ever-changing environments.

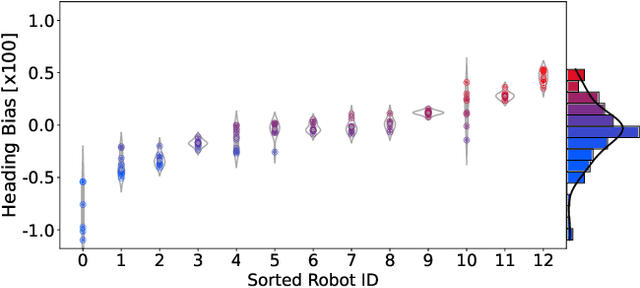

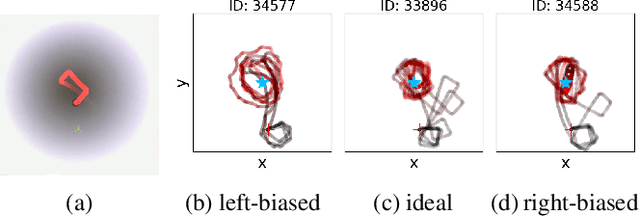

Individuality in Swarm Robots with the Case Study of Kilobots: Noise, Bug, or Feature?

May 25, 2023

Abstract: Inter-individual differences are studied in natural systems, such as fish, bees, and humans, as they contribute to the complexity of both individual and collective behaviors. However, individuality in artificial systems, such as robotic swarms, is undervalued or even overlooked. Agent-specific deviations from the norm in swarm robotics are usually understood as mere noise that can be minimized, for example, by calibration. We observe that robots have consistent deviations and argue that awareness and knowledge of these can be exploited to serve a task. We measure heterogeneity in robot swarms caused by individual differences in how robots act, sense, and oscillate. Our use case is Kilobots and we provide example behaviors where the performance of robots varies depending on individual differences. We show a non-intuitive example of phototaxis with Kilobots where the non-calibrated Kilobots show better performance than the calibrated, supposedly "ideal" one. We measure the inter-individual variations for heterogeneity in sensing and oscillation, too. We briefly discuss how these variations can enhance the complexity of collective behaviors. We suggest that by recognizing and exploring this new perspective on individuality, and hence diversity, in robotic swarms, we can gain a deeper understanding of these systems and potentially unlock new possibilities for their design and implementation of applications.

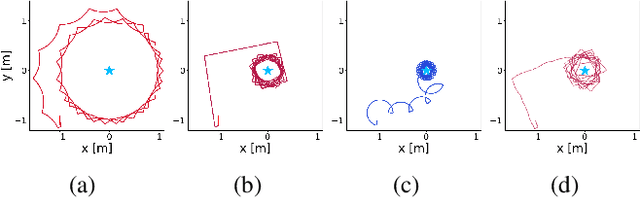

Estimation of continuous environments by robot swarms: Correlated networks and decision-making

Mar 15, 2023

Abstract: Collective decision-making is an essential capability of large-scale multi-robot systems to establish autonomy on the swarm level. A large portion of literature on collective decision-making in swarm robotics focuses on discrete decisions selecting from a limited number of options. Here we assign a decentralized robot system the task of exploring an unbounded environment, finding consensus on the mean of a measurable environmental feature, and aggregating at areas where that value is measured (e.g., a contour line). A unique quality of this task is a causal loop between the robots' dynamic network topology and their decision-making. For example, the network's mean node degree influences time to convergence, while the currently agreed-on mean value influences the swarm's aggregation location and, hence, also the network structure as well as the precision error. We propose a control algorithm and study it in real-world robot swarm experiments in different environments. We show that our approach is effective and achieves higher precision than a control experiment. We anticipate applications, for example, in containing pollution with surface vehicles.
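The consensus part of this task, agreeing on the mean of a measured feature over a proximity network, is commonly modeled with linear averaging consensus. The sketch below illustrates that generic technique under simplifying assumptions (static robot positions, synchronous rounds, a hypothetical communication radius); it is not the control algorithm proposed in the paper, and the names `proximity_graph`, `consensus_step` and `run_consensus` are hypothetical.

```python
import math


def proximity_graph(positions, radius):
    """Neighbor lists: robot j is a neighbor of i if within `radius` (hypothetical comm range)."""
    n = len(positions)
    nbrs = [[] for _ in range(n)]
    for i in range(n):
        for j in range(i + 1, n):
            if math.dist(positions[i], positions[j]) <= radius:
                nbrs[i].append(j)
                nbrs[j].append(i)
    return nbrs


def consensus_step(estimates, nbrs, eps):
    """One synchronous round of linear averaging: x_i += eps * sum_j (x_j - x_i)."""
    return [
        x + eps * sum(estimates[j] - x for j in nbrs[i])
        for i, x in enumerate(estimates)
    ]


def run_consensus(readings, positions, radius, steps=500):
    """Iterate averaging from each robot's own reading; assumes the network stays fixed."""
    nbrs = proximity_graph(positions, radius)
    max_deg = max((len(n) for n in nbrs), default=0)
    # A step size below 1/max_degree keeps the iteration stable; because links are
    # symmetric, the sum (and hence the mean) of the estimates is preserved each round.
    eps = 1.0 / (max_deg + 1)
    x = list(readings)
    for _ in range(steps):
        x = consensus_step(x, nbrs, eps)
    return x


if __name__ == "__main__":
    positions = [(float(i), 0.0) for i in range(5)]  # robots 1 unit apart on a line
    readings = [1.0, 2.0, 3.0, 4.0, 10.0]  # local measurements, mean 4.0
    est = run_consensus(readings, positions, radius=1.5)
    print([round(v, 6) for v in est])  # every robot converges to the mean, 4.0
```

If the network is connected, all estimates approach the global mean of the initial readings. A denser network (higher mean node degree) typically shortens the number of rounds needed, in line with the abstract's remark on convergence time; in the real task the positions, and thus the graph, change as the robots move.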
