Pavel Kral

On the Effects of Using word2vec Representations in Neural Networks for Dialogue Act Recognition

Oct 22, 2020

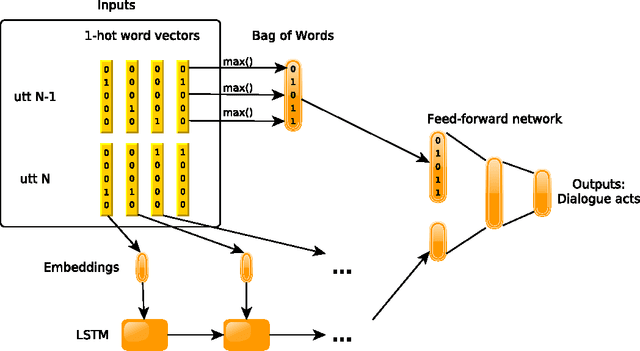

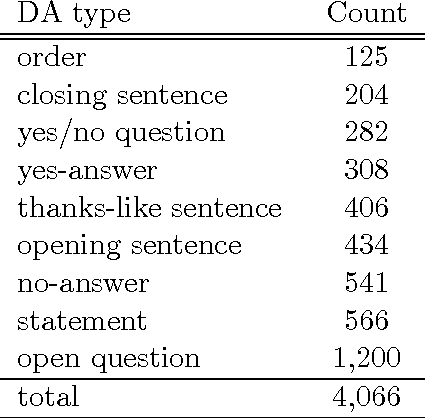

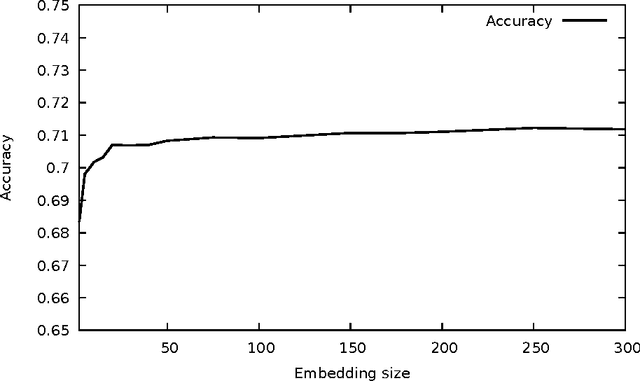

Abstract:Dialogue act recognition is an important component of a large number of natural language processing pipelines. Many research works have been carried out in this area, but relatively few investigate deep neural networks and word embeddings. This is surprising, given that both of these techniques have proven exceptionally good in most other language-related domains. We propose in this work a new deep neural network that explores recurrent models to capture word sequences within sentences, and further study the impact of pretrained word embeddings. We validate this model on three languages: English, French and Czech. The performance of the proposed approach is consistent across these languages and it is comparable to the state-of-the-art results in English. More importantly, we confirm that deep neural networks indeed outperform a Maximum Entropy classifier, which was expected. However , and this is more surprising, we also found that standard word2vec em-beddings do not seem to bring valuable information for this task and the proposed model, whatever the size of the training corpus is. We thus further analyse the resulting embeddings and conclude that a possible explanation may be related to the mismatch between the type of lexical-semantic information captured by the word2vec embeddings, and the kind of relations between words that is the most useful for the dialogue act recognition task.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge