
Paul Barry

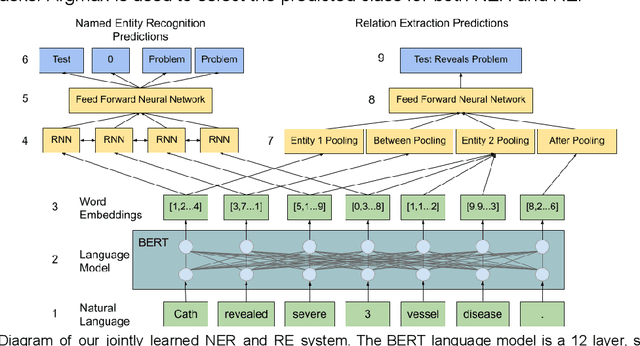

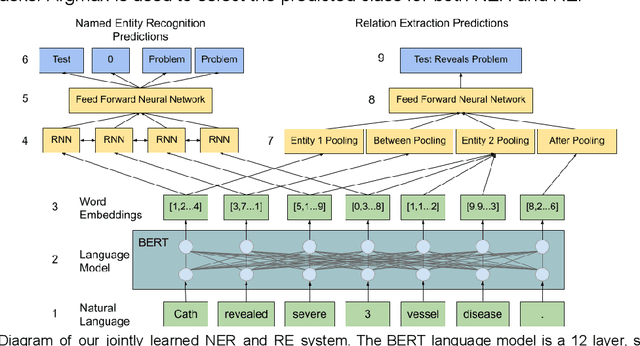

Jointly Learning Clinical Entities and Relations with Contextual Language Models and Explicit Context

Feb 17, 2021

Abstract: We hypothesize that explicit integration of contextual information into a Multi-task Learning framework would emphasize the significance of context for boosting performance in jointly learning Named Entity Recognition (NER) and Relation Extraction (RE). Our work proves this hypothesis by segmenting entities from their surrounding context and by building contextual representations from each independent segment. This relation representation allows for a joint NER/RE system that achieves near state-of-the-art (SOTA) performance on both NER and RE tasks while beating the SOTA RE system at end-to-end NER & RE with a 49.07 F1.
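The segmentation idea in the abstract can be pictured with a small sketch. This is only an illustration under stated assumptions, not the paper's implementation: the helper name `segment_around_entities`, the five-segment layout, and the example sentence are all hypothetical, and the abstract does not say how segments are encoded afterwards.

```python
from typing import Dict, List, Tuple

Span = Tuple[int, int]  # (start, end) token indices, end exclusive


def segment_around_entities(
    tokens: List[str], e1: Span, e2: Span
) -> Dict[str, List[str]]:
    """Split a token list into two entity segments and three context segments.

    Hypothetical helper for illustration; assumes the two spans do not overlap.
    """
    first, second = sorted([e1, e2])
    if first[1] > second[0]:
        raise ValueError("entity spans must not overlap")
    return {
        "left_context": tokens[: first[0]],
        "first_entity": tokens[first[0] : first[1]],
        "middle_context": tokens[first[1] : second[0]],
        "second_entity": tokens[second[0] : second[1]],
        "right_context": tokens[second[1] :],
    }


# Made-up clinical sentence with two candidate entities.
tokens = "The patient was given aspirin for chest pain yesterday".split()
segments = segment_around_entities(tokens, (4, 5), (6, 8))
for name, segment in segments.items():
    print(name, segment)
```

In a joint NER/RE system, each segment would presumably be passed through a contextual language model and the resulting vectors combined into a relation representation; that step is left out here because the abstract gives no details about it.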
