Patrizio Dazzi

High-Performance Serverless Computing: A Systematic Literature Review on Serverless for HPC, AI, and Big Data

Jan 14, 2026

Abstract:The widespread deployment of large-scale, compute-intensive applications such as high-performance computing, artificial intelligence, and big data is leading to convergence between cloud and high-performance computing infrastructures. Cloud providers are increasingly integrating high-performance computing capabilities in their infrastructures, such as hardware accelerators and high-speed interconnects, while researchers in the high-performance computing community are starting to explore cloud-native paradigms to improve scalability, elasticity, and resource utilization. In this context, serverless computing emerges as a promising execution model to efficiently handle highly dynamic, parallel, and distributed workloads. This paper presents a comprehensive systematic literature review of 122 research articles published between 2018 and early 2025, exploring the use of the serverless paradigm to develop, deploy, and orchestrate compute-intensive applications across cloud, high-performance computing, and hybrid environments. From these, a taxonomy comprising eight primary research directions and nine targeted use case domains is proposed, alongside an analysis of recent publication trends and collaboration networks among authors, highlighting the growing interest and interconnections within this emerging research field. Overall, this work aims to offer a valuable foundation for both new researchers and experienced practitioners, guiding the development of next-generation serverless solutions for parallel compute-intensive applications.

Double Deep Q-Learning-based Path Selection and Service Placement for Latency-Sensitive Beyond 5G Applications

Sep 18, 2023

Abstract:Nowadays, as the need for capacity continues to grow, entirely novel services are emerging. A solid cloud-network integrated infrastructure is necessary to supply these services in a real-time, responsive, and scalable way. Due to their diverse characteristics and limited capacity, communication and computing resources must be collaboratively managed to unleash their full potential. Although several innovative methods have been proposed to orchestrate the resources, most ignored network resources or relaxed the network as a simple graph, focusing only on cloud resources. This paper fills the gap by studying the joint problem of communication and computing resource allocation, dubbed CCRA, including function placement and assignment, traffic prioritization, and path selection considering capacity constraints and quality requirements, to minimize total cost. We formulate the problem as a non-linear programming model and propose two approaches, dubbed B&B-CCRA and WF-CCRA, based on the Branch & Bound and Water-Filling algorithms to solve it when the system is fully known. Then, for partially known systems, a Double Deep Q-Learning (DDQL) architecture is designed. Numerical simulations show that B&B-CCRA optimally solves the problem, whereas WF-CCRA delivers near-optimal solutions in a substantially shorter time. Furthermore, it is demonstrated that DDQL-CCRA obtains near-optimal solutions in the absence of request-specific information.

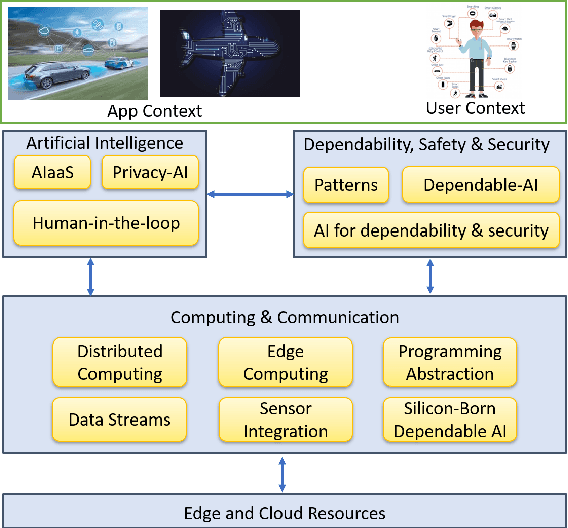

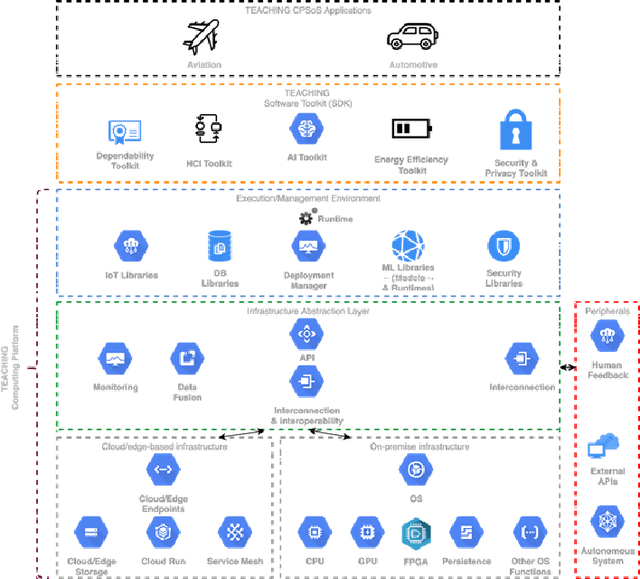

TEACHING -- Trustworthy autonomous cyber-physical applications through human-centred intelligence

Jul 14, 2021

Abstract:This paper discusses the perspective of the H2020 TEACHING project on the next generation of autonomous applications running in a distributed and highly heterogeneous environment comprising both virtual and physical resources spanning the edge-cloud continuum. TEACHING puts forward a human-centred vision leveraging the physiological, emotional, and cognitive state of the users as a driver for the adaptation and optimization of the autonomous applications. It does so by building a distributed, embedded and federated learning system complemented by methods and tools to enforce its dependability, security and privacy preservation. The paper discusses the main concepts of the TEACHING approach and singles out the main AI-related research challenges associated with it. Further, we provide a discussion of the design choices for the TEACHING system to tackle the aforementioned challenges.
