Paolo Tirotta

Multilevel Sentence Embeddings for Personality Prediction

May 09, 2023

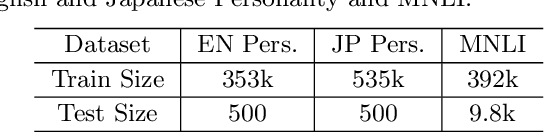

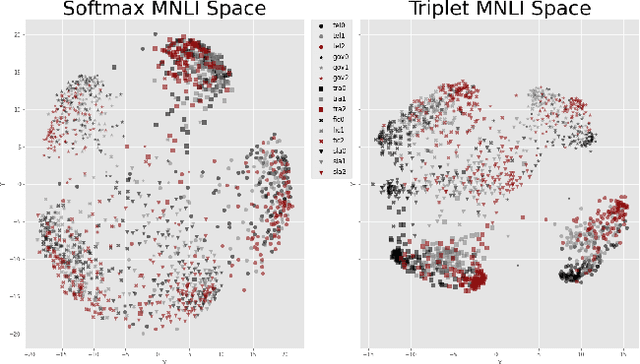

Abstract:Representing text into a multidimensional space can be done with sentence embedding models such as Sentence-BERT (SBERT). However, training these models when the data has a complex multilevel structure requires individually trained class-specific models, which increases time and computing costs. We propose a two step approach which enables us to map sentences according to their hierarchical memberships and polarity. At first we teach the upper level sentence space through an AdaCos loss function and then finetune with a novel loss function mainly based on the cosine similarity of intra-level pairs. We apply this method to three different datasets: two weakly supervised Big Five personality dataset obtained from English and Japanese Twitter data and the benchmark MNLI dataset. We show that our single model approach performs better than multiple class-specific classification models.

* Copyright is owned by JSAI

OptAGAN: Entropy-based finetuning on text VAE-GAN

Sep 01, 2021

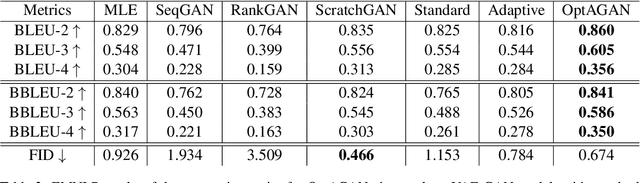

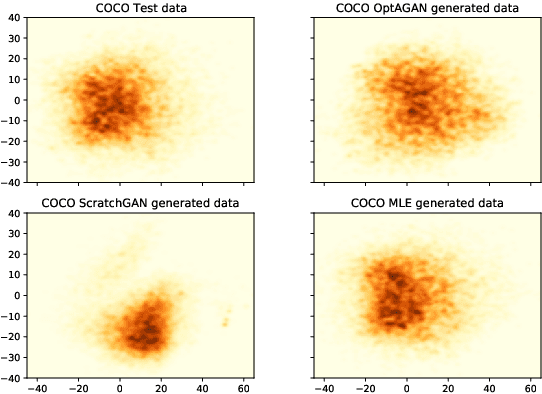

Abstract:Transfer learning through large pre-trained models has changed the landscape of current applications in natural language processing (NLP). Recently Optimus, a variational autoencoder (VAE) which combines two pre-trained models, BERT and GPT-2, has been released, and its combination with generative adversial networks (GANs) has been shown to produce novel, yet very human-looking text. The Optimus and GANs combination avoids the troublesome application of GANs to the discrete domain of text, and prevents the exposure bias of standard maximum likelihood methods. We combine the training of GANs in the latent space, with the finetuning of the decoder of Optimus for single word generation. This approach lets us model both the high-level features of the sentences, and the low-level word-by-word generation. We finetune using reinforcement learning (RL) by exploiting the structure of GPT-2 and by adding entropy-based intrinsically motivated rewards to balance between quality and diversity. We benchmark the results of the VAE-GAN model, and show the improvements brought by our RL finetuning on three widely used datasets for text generation, with results that greatly surpass the current state-of-the-art for the quality of the generated texts.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge