Panneer Selvam Santhalingam

FineHand: Learning Hand Shapes for American Sign Language Recognition

Mar 04, 2020

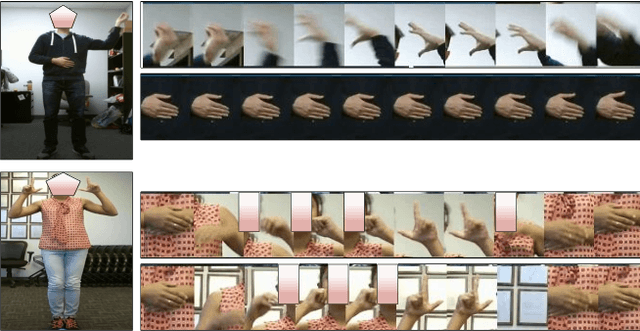

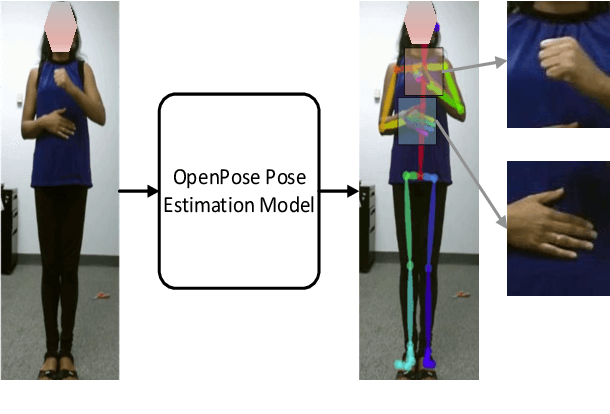

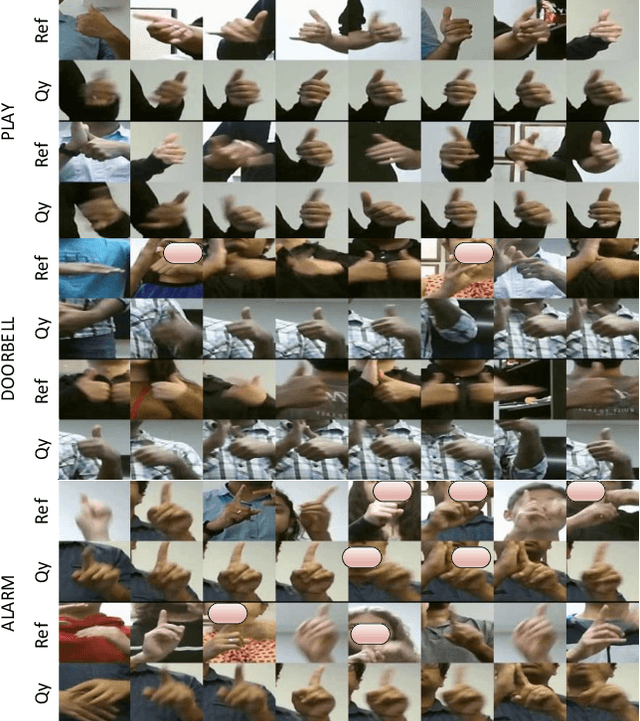

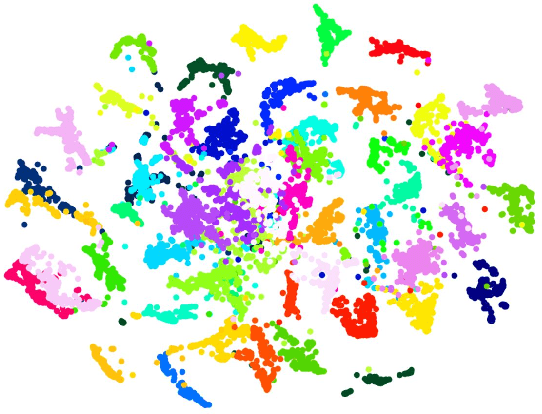

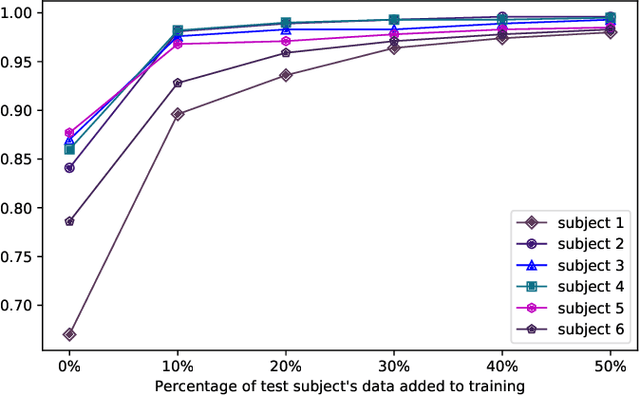

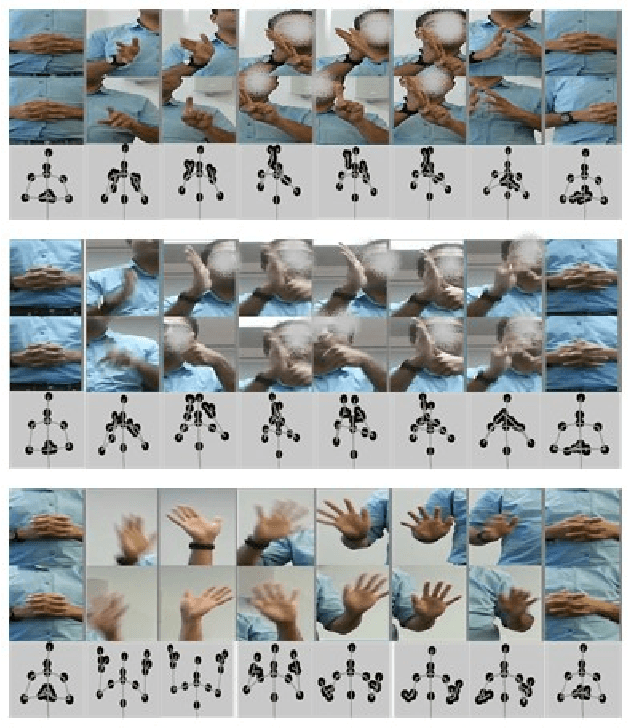

Abstract:American Sign Language recognition is a difficult gesture recognition problem, characterized by fast, highly articulate gestures. These are comprised of arm movements with different hand shapes, facial expression and head movements. Among these components, hand shape is the vital, often the most discriminative part of a gesture. In this work, we present an approach for effective learning of hand shape embeddings, which are discriminative for ASL gestures. For hand shape recognition our method uses a mix of manually labelled hand shapes and high confidence predictions to train deep convolutional neural network (CNN). The sequential gesture component is captured by recursive neural network (RNN) trained on the embeddings learned in the first stage. We will demonstrate that higher quality hand shape models can significantly improve the accuracy of final video gesture classification in challenging conditions with variety of speakers, different illumination and significant motion blurr. We compare our model to alternative approaches exploiting different modalities and representations of the data and show improved video gesture recognition accuracy on GMU-ASL51 benchmark dataset

Sign Language Recognition Analysis using Multimodal Data

Sep 24, 2019

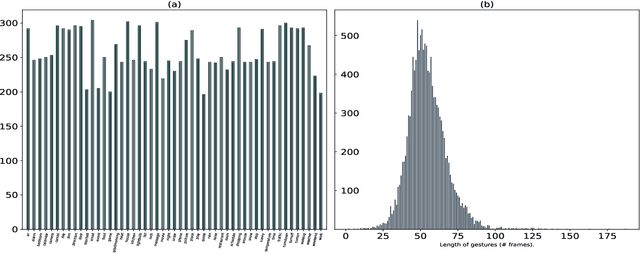

Abstract:Voice-controlled personal and home assistants (such as the Amazon Echo and Apple Siri) are becoming increasingly popular for a variety of applications. However, the benefits of these technologies are not readily accessible to Deaf or Hard-ofHearing (DHH) users. The objective of this study is to develop and evaluate a sign recognition system using multiple modalities that can be used by DHH signers to interact with voice-controlled devices. With the advancement of depth sensors, skeletal data is used for applications like video analysis and activity recognition. Despite having similarity with the well-studied human activity recognition, the use of 3D skeleton data in sign language recognition is rare. This is because unlike activity recognition, sign language is mostly dependent on hand shape pattern. In this work, we investigate the feasibility of using skeletal and RGB video data for sign language recognition using a combination of different deep learning architectures. We validate our results on a large-scale American Sign Language (ASL) dataset of 12 users and 13107 samples across 51 signs. It is named as GMUASL51. We collected the dataset over 6 months and it will be publicly released in the hope of spurring further machine learning research towards providing improved accessibility for digital assistants.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge