Othón González-Chávez

Are metrics measuring what they should? An evaluation of image captioning task metrics

Jul 04, 2022

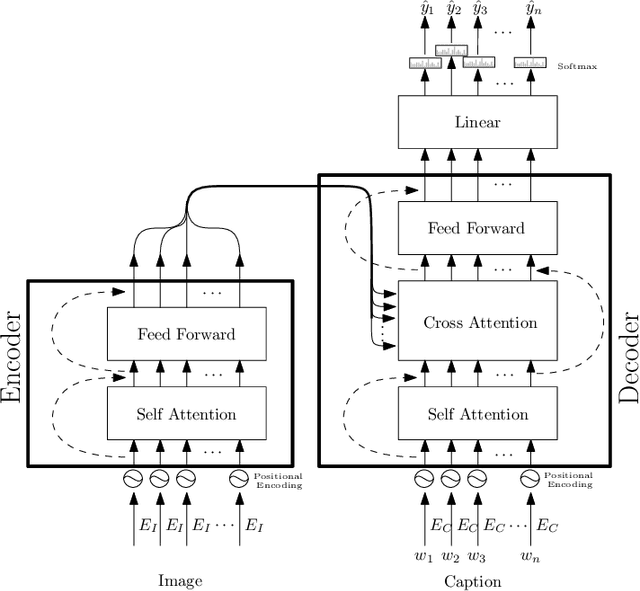

Abstract: Image Captioning is an active research task that aims to describe the content of an image in terms of the objects in the scene and their relationships. Tackling it draws on two important research areas: computer vision and natural language processing. In Image Captioning, as in any computational intelligence task, performance metrics are crucial for knowing how well (or badly) a method performs. In recent years, it has been observed that classical metrics based on n-grams are insufficient to capture the semantics and the critical meaning needed to describe the content of an image. To assess how well the current and more recent metrics are doing, in this manuscript we present an evaluation of several kinds of Image Captioning metrics and a comparison between them using the well-known MS COCO dataset. For this, we designed two scenarios: 1) a set of artificially built captions of varying quality, and 2) a comparison of some state-of-the-art Image Captioning methods. We tried to answer the questions: Are the current metrics helping to produce high-quality captions? How do the current metrics compare to each other? What are the metrics really measuring?

Video Captioning: a comparative review of where we are and which could be the route

Apr 13, 2022

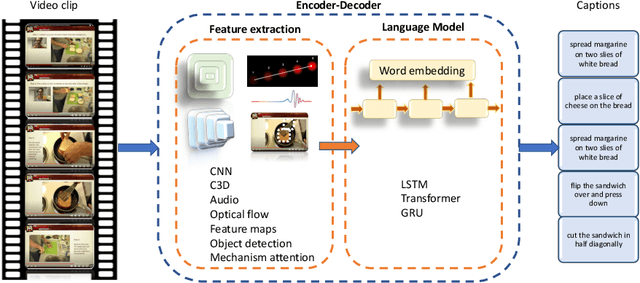

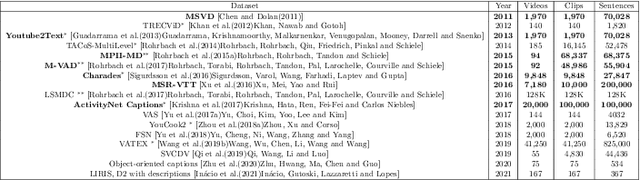

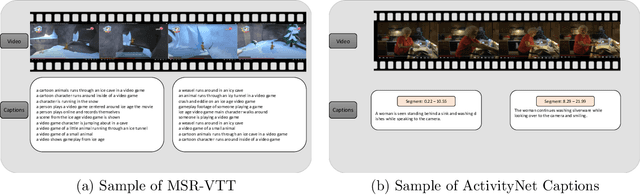

Abstract: Video captioning is the process of describing the content of a sequence of images, capturing its semantic relationships and meanings. Dealing with this task for a single image is arduous, let alone for a video (or image sequence). The applications of video captioning are numerous and relevant, for example handling the large volume of recordings in video surveillance or assisting visually impaired people. To analyze where our community's efforts to solve the video captioning task stand, as well as which route could be better to follow, this manuscript presents an extensive review of more than 105 papers from the period 2016 to 2021. As a result, the most-used datasets and metrics are identified, along with the main approaches used and the best-performing ones. We compute a set of rankings based on several performance metrics to identify the method with the best results on the video captioning task. Finally, we draw some insights about possible next steps and opportunity areas for improving on this complex task.
