Omid Aramoon

Don't Forget to Sign the Gradients!

Mar 05, 2021

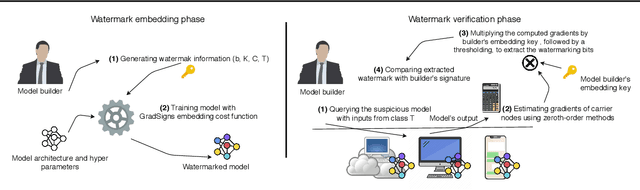

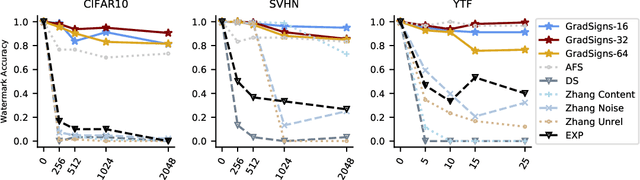

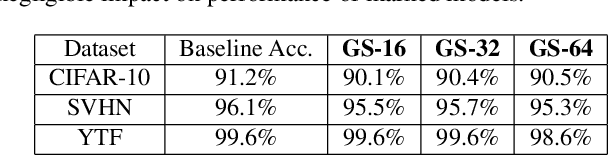

Abstract:Engineering a top-notch deep learning model is an expensive procedure that involves collecting data, hiring human resources with expertise in machine learning, and providing high computational resources. For that reason, deep learning models are considered as valuable Intellectual Properties (IPs) of the model vendors. To ensure reliable commercialization of deep learning models, it is crucial to develop techniques to protect model vendors against IP infringements. One of such techniques that recently has shown great promise is digital watermarking. However, current watermarking approaches can embed very limited amount of information and are vulnerable against watermark removal attacks. In this paper, we present GradSigns, a novel watermarking framework for deep neural networks (DNNs). GradSigns embeds the owner's signature into the gradient of the cross-entropy cost function with respect to inputs to the model. Our approach has a negligible impact on the performance of the protected model and it allows model vendors to remotely verify the watermark through prediction APIs. We evaluate GradSigns on DNNs trained for different image classification tasks using CIFAR-10, SVHN, and YTF datasets. Experimental results show that GradSigns is robust against all known counter-watermark attacks and can embed a large amount of information into DNNs.

Meta Federated Learning

Feb 10, 2021

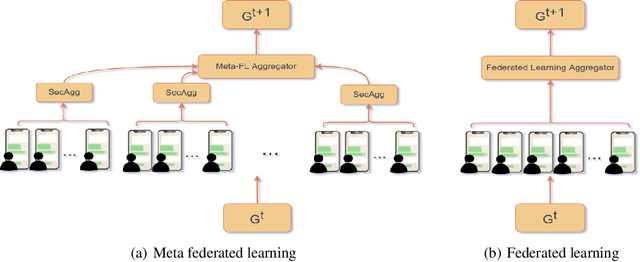

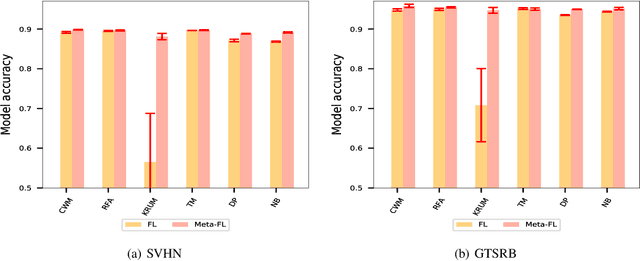

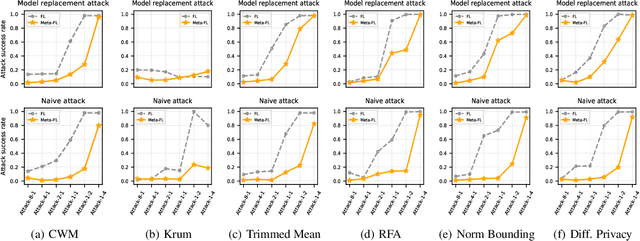

Abstract:Due to its distributed methodology alongside its privacy-preserving features, Federated Learning (FL) is vulnerable to training time adversarial attacks. In this study, our focus is on backdoor attacks in which the adversary's goal is to cause targeted misclassifications for inputs embedded with an adversarial trigger while maintaining an acceptable performance on the main learning task at hand. Contemporary defenses against backdoor attacks in federated learning require direct access to each individual client's update which is not feasible in recent FL settings where Secure Aggregation is deployed. In this study, we seek to answer the following question, Is it possible to defend against backdoor attacks when secure aggregation is in place?, a question that has not been addressed by prior arts. To this end, we propose Meta Federated Learning (Meta-FL), a novel variant of federated learning which not only is compatible with secure aggregation protocol but also facilitates defense against backdoor attacks. We perform a systematic evaluation of Meta-FL on two classification datasets: SVHN and GTSRB. The results show that Meta-FL not only achieves better utility than classic FL, but also enhances the performance of contemporary defenses in terms of robustness against adversarial attacks.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge