Niko Tsakalakis

Explainability-by-Design: A Methodology to Support Explanations in Decision-Making Systems

Jun 13, 2022

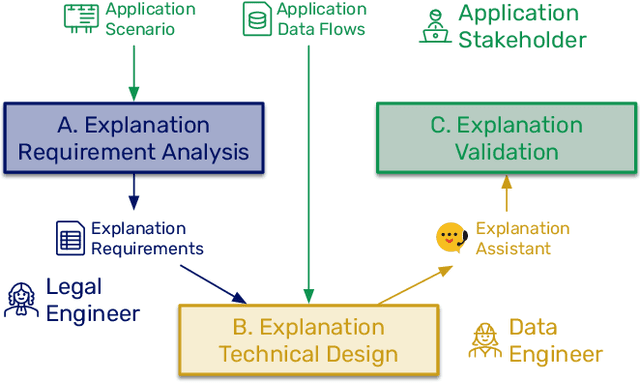

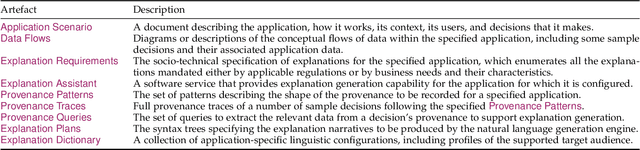

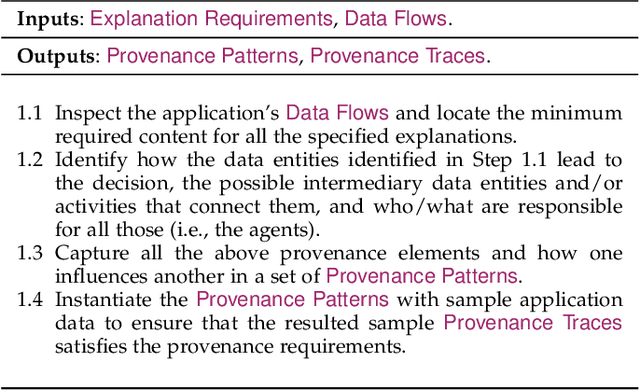

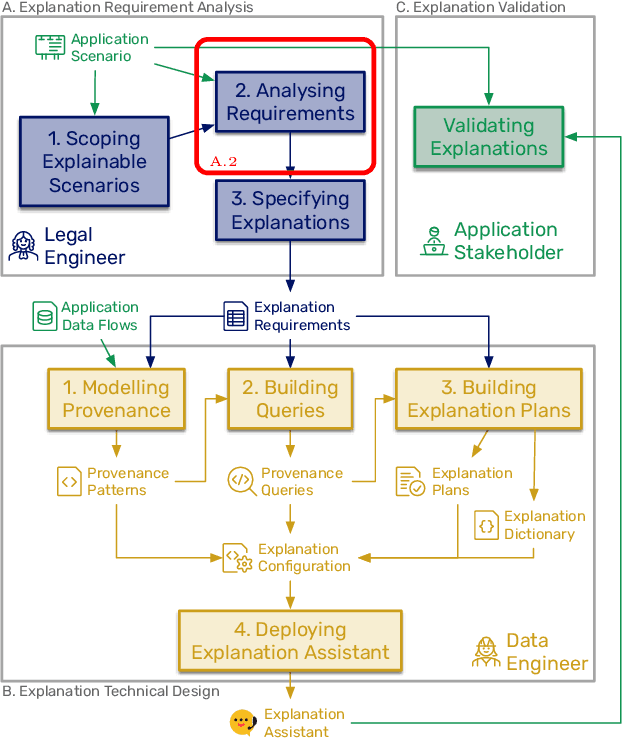

Abstract: Algorithms now play a key role in many technological systems that control or affect various aspects of our lives. As a result, providing explanations to address the needs of users and organisations is increasingly expected by laws and regulations, codes of conduct, and the public. However, as laws and regulations do not prescribe how to meet such expectations, organisations are often left to devise their own approaches to explainability, inevitably increasing the cost of compliance and good governance. Hence, we put forth "Explainability by Design", a holistic methodology characterised by proactive measures to include explanation capability in the design of decision-making systems. This paper describes the technical steps of the Explainability-by-Design methodology in a software engineering workflow to implement explanation capability from requirements elicited by domain experts for a specific application context. Outputs of the Explainability-by-Design methodology are a set of configurations, allowing a reusable service, called the Explanation Assistant, to exploit logs provided by applications and create provenance traces that can be queried to extract relevant data points, which in turn can be used in explanation plans to construct explanations personalised to their consumers. Following those steps, organisations will be able to design their decision-making systems to produce explanations that meet the specified requirements, be it from laws, regulations, or business needs. We apply the methodology to two applications, resulting in a deployment of the Explanation Assistant demonstrating explanation capabilities. Finally, the associated development costs are measured, showing that the approach to constructing explanations is tractable in terms of development time, which can be as low as two hours per explanation sentence.

A taxonomy of explanations to support Explainability-by-Design

Jun 09, 2022

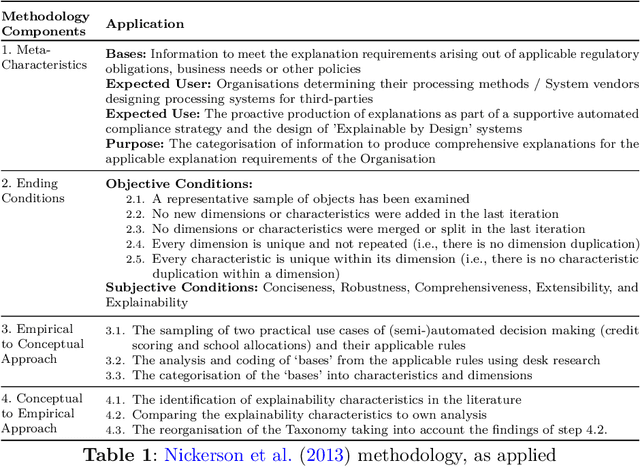

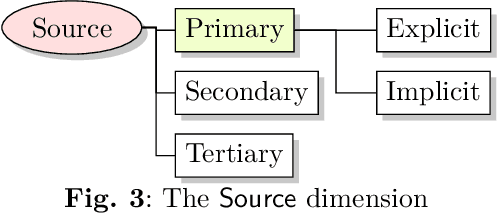

Abstract: As automated decision-making solutions are increasingly applied to all aspects of everyday life, capabilities to generate meaningful explanations for a variety of stakeholders (i.e., decision-makers, recipients of decisions, auditors, regulators...) become crucial. In this paper, we present a taxonomy of explanations that was developed as part of a holistic 'Explainability-by-Design' approach for the purposes of the PLEAD project. The taxonomy was built with a view to producing explanations for a wide range of requirements stemming from a variety of regulatory frameworks or policies set at the organizational level, either to translate high-level compliance requirements or to meet business needs. The taxonomy comprises nine dimensions. It is used as a stand-alone classifier of explanations conceived as detective controls, in order to aid supportive automated compliance strategies. A machine-readable format of the taxonomy is provided in the form of a light ontology, and the benefits of starting the Explainability-by-Design journey with such a taxonomy are demonstrated through a series of examples.
