A taxonomy of explanations to support Explainability-by-Design


Jun 09, 2022

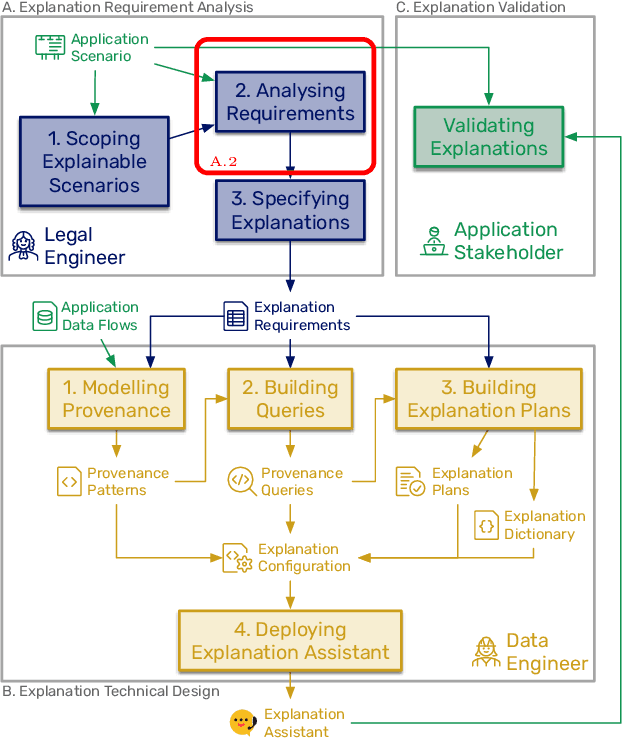

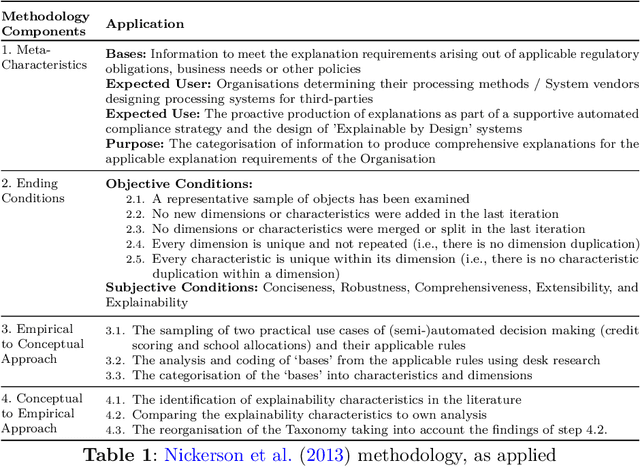

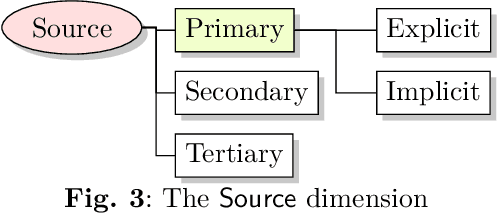

As automated decision-making solutions are increasingly applied to all aspects of everyday life, the capability to generate meaningful explanations for a variety of stakeholders (e.g., decision-makers, recipients of decisions, auditors, regulators) becomes crucial. In this paper, we present a taxonomy of explanations that was developed as part of a holistic 'Explainability-by-Design' approach for the purposes of the PLEAD project. The taxonomy was built with a view to producing explanations for a wide range of requirements stemming from a variety of regulatory frameworks or from policies set at the organizational level, either to translate high-level compliance requirements or to meet business needs. The taxonomy comprises nine dimensions. It is used as a stand-alone classifier of explanations conceived as detective controls, in order to support automated compliance strategies. A machine-readable format of the taxonomy is provided in the form of a light ontology, and the benefits of starting the Explainability-by-Design journey with such a taxonomy are demonstrated through a series of examples.
