Nattapong Thammasan

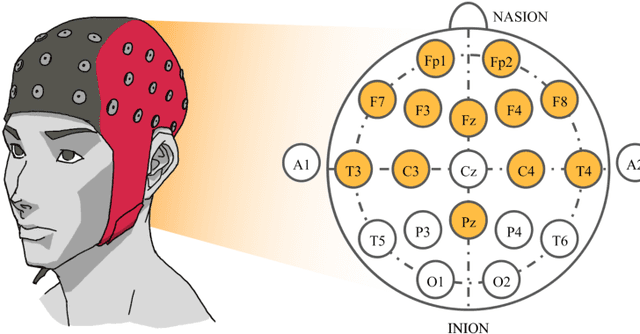

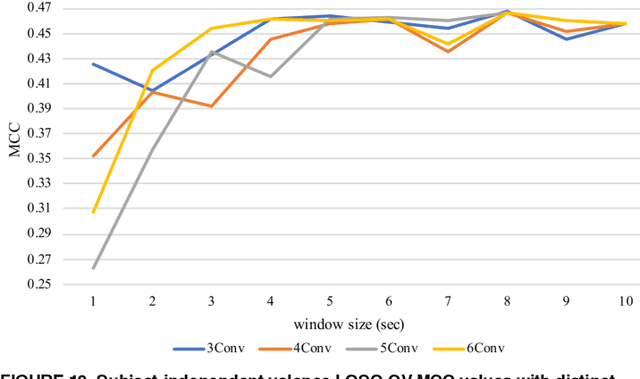

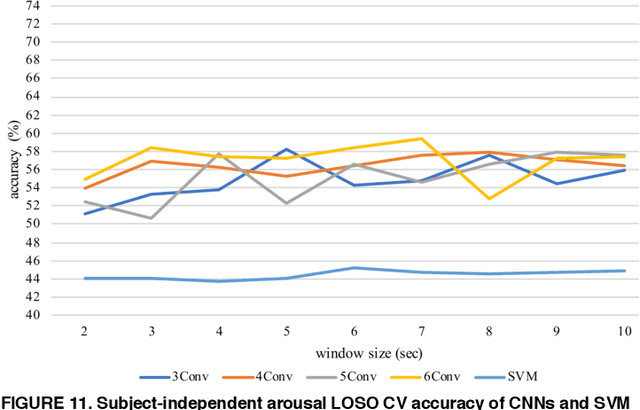

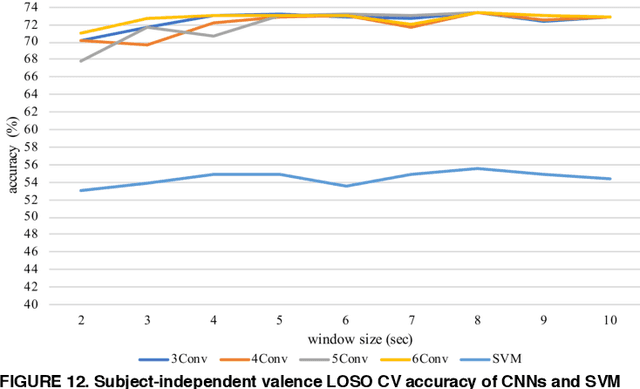

Spatiotemporal Emotion Recognition using Deep CNN Based on EEG during Music Listening

Oct 22, 2019

Abstract:Emotion recognition based on EEG has become an active research area. As one of the machine learning models, CNN has been utilized to solve diverse problems including issues in this domain. In this work, a study of CNN and its spatiotemporal feature extraction has been conducted in order to explore capabilities of the model in varied window sizes and electrode orders. Our investigation was conducted in subject-independent fashion. Results have shown that temporal information in distinct window sizes significantly affects recognition performance in both 10-fold and leave-one-subject-out cross validation. Spatial information from varying electrode order has modicum effect on classification. SVM classifier depending on spatiotemporal knowledge on the same dataset was previously employed and compared to these empirical results. Even though CNN and SVM have a homologous trend in window size effect, CNN outperformed SVM using leave-one-subject-out cross validation. This could be caused by different extracted features in the elicitation process.

Fusion of EEG and Musical Features in Continuous Music-emotion Recognition

Nov 30, 2016

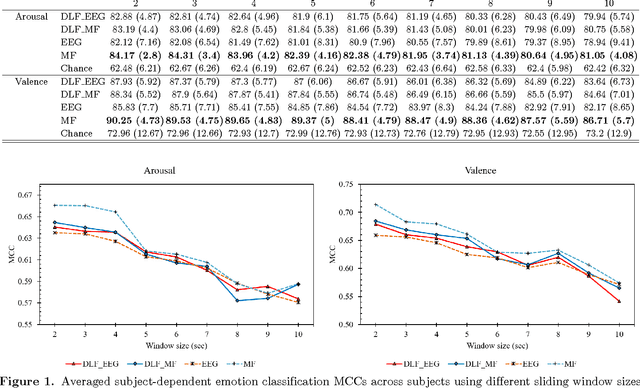

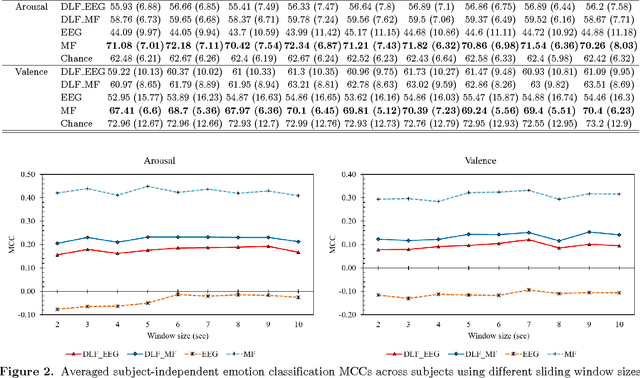

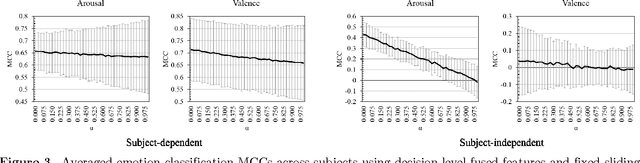

Abstract:Emotion estimation in music listening is confronting challenges to capture the emotion variation of listeners. Recent years have witnessed attempts to exploit multimodality fusing information from musical contents and physiological signals captured from listeners to improve the performance of emotion recognition. In this paper, we present a study of fusion of signals of electroencephalogram (EEG), a tool to capture brainwaves at a high-temporal resolution, and musical features at decision level in recognizing the time-varying binary classes of arousal and valence. Our empirical results showed that the fusion could outperform the performance of emotion recognition using only EEG modality that was suffered from inter-subject variability, and this suggested the promise of multimodal fusion in improving the accuracy of music-emotion recognition.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge