Nathan Vance

SiNC+: Adaptive Camera-Based Vitals with Unsupervised Learning of Periodic Signals

Apr 20, 2024

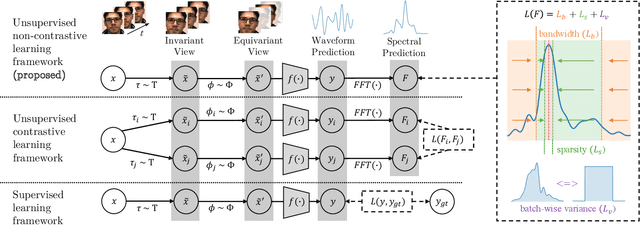

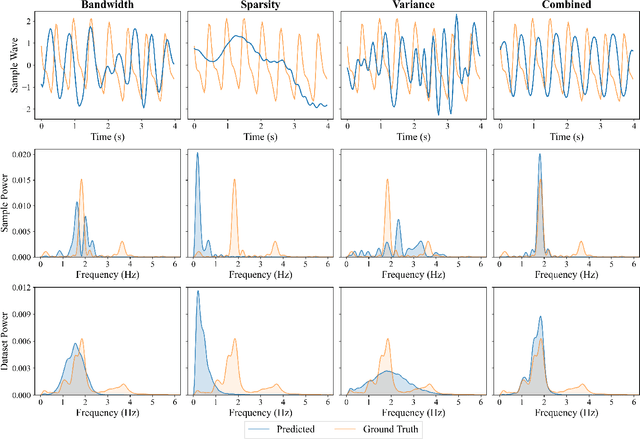

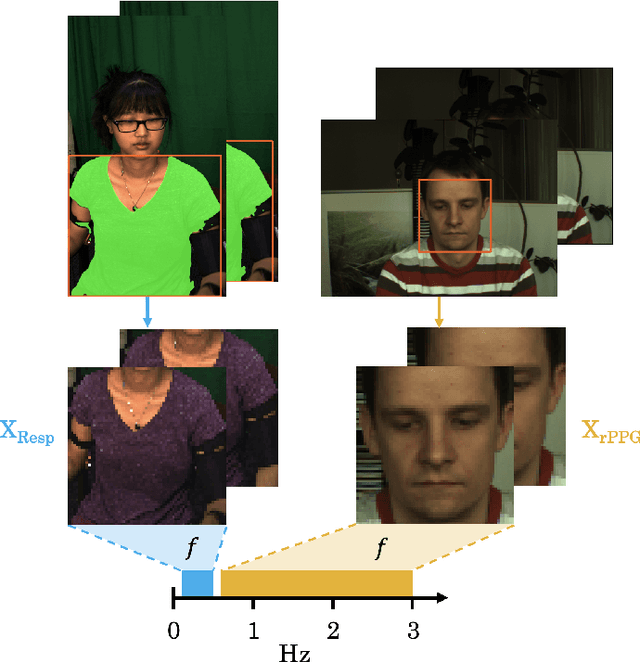

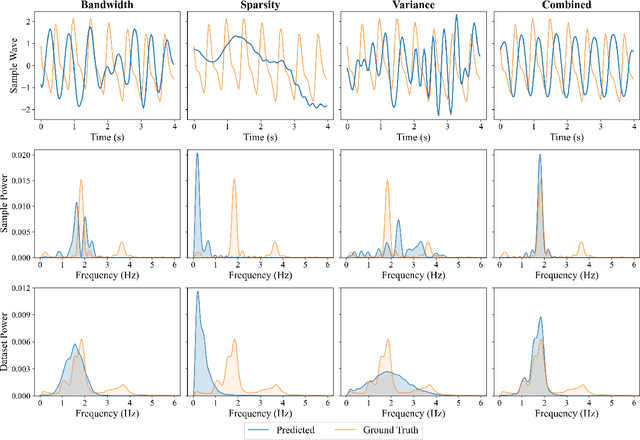

Abstract:Subtle periodic signals, such as blood volume pulse and respiration, can be extracted from RGB video, enabling noncontact health monitoring at low cost. Advancements in remote pulse estimation -- or remote photoplethysmography (rPPG) -- are currently driven by deep learning solutions. However, modern approaches are trained and evaluated on benchmark datasets with ground truth from contact-PPG sensors. We present the first non-contrastive unsupervised learning framework for signal regression to mitigate the need for labelled video data. With minimal assumptions of periodicity and finite bandwidth, our approach discovers the blood volume pulse directly from unlabelled videos. We find that encouraging sparse power spectra within normal physiological bandlimits and variance over batches of power spectra is sufficient for learning visual features of periodic signals. We perform the first experiments utilizing unlabelled video data not specifically created for rPPG to train robust pulse rate estimators. Given the limited inductive biases, we successfully applied the same approach to camera-based respiration by changing the bandlimits of the target signal. This shows that the approach is general enough for unsupervised learning of bandlimited quasi-periodic signals from different domains. Furthermore, we show that the framework is effective for finetuning models on unlabelled video from a single subject, allowing for personalized and adaptive signal regressors.

Measuring Domain Shifts using Deep Learning Remote Photoplethysmography Model Similarity

Apr 12, 2024

Abstract:Domain shift differences between training data for deep learning models and the deployment context can result in severe performance issues for models which fail to generalize. We study the domain shift problem under the context of remote photoplethysmography (rPPG), a technique for video-based heart rate inference. We propose metrics based on model similarity which may be used as a measure of domain shift, and we demonstrate high correlation between these metrics and empirical performance. One of the proposed metrics with viable correlations, DS-diff, does not assume access to the ground truth of the target domain, i.e. it may be applied to in-the-wild data. To that end, we investigate a model selection problem in which ground truth results for the evaluation domain is not known, demonstrating a 13.9% performance improvement over the average case baseline.

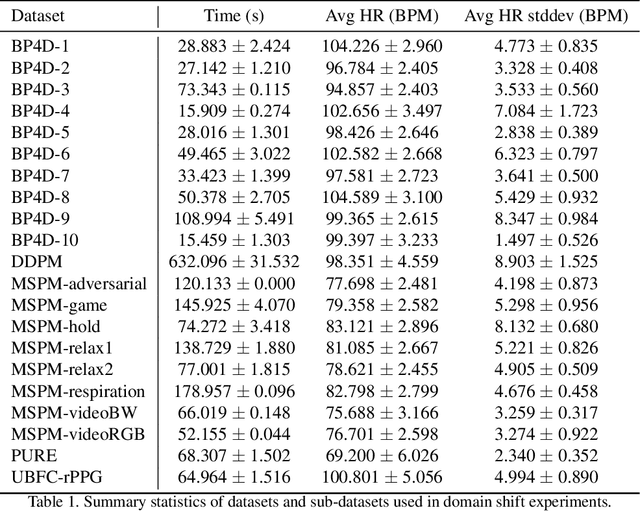

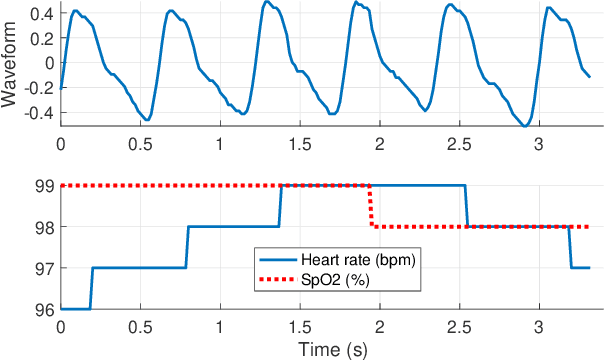

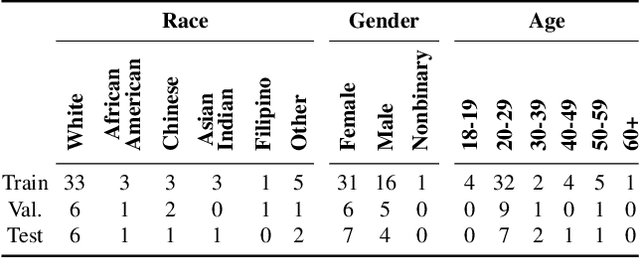

MSPM: A Multi-Site Physiological Monitoring Dataset for Remote Pulse, Respiration, and Blood Pressure Estimation

Feb 03, 2024Abstract:Visible-light cameras can capture subtle physiological biomarkers without physical contact with the subject. We present the Multi-Site Physiological Monitoring (MSPM) dataset, which is the first dataset collected to support the study of simultaneous camera-based vital signs estimation from multiple locations on the body. MSPM enables research on remote photoplethysmography (rPPG), respiration rate, and pulse transit time (PTT); it contains ground-truth measurements of pulse oximetry (at multiple body locations) and blood pressure using contacting sensors. We provide thorough experiments demonstrating the suitability of MSPM to support research on rPPG, respiration rate, and PTT. Cross-dataset rPPG experiments reveal that MSPM is a challenging yet high quality dataset, with intra-dataset pulse rate mean absolute error (MAE) below 4 beats per minute (BPM), and cross-dataset pulse rate MAE below 2 BPM in certain cases. Respiration experiments find a MAE of 1.09 breaths per minute by extracting motion features from the chest. PTT experiments find that across the pairs of different body sites, there is high correlation between remote PTT and contact-measured PTT, which facilitates the possibility for future camera-based PTT research.

Refining Remote Photoplethysmography Architectures using CKA and Empirical Methods

Jan 09, 2024

Abstract:Model architecture refinement is a challenging task in deep learning research fields such as remote photoplethysmography (rPPG). One architectural consideration, the depth of the model, can have significant consequences on the resulting performance. In rPPG models that are overprovisioned with more layers than necessary, redundancies exist, the removal of which can result in faster training and reduced computational load at inference time. With too few layers the models may exhibit sub-optimal error rates. We apply Centered Kernel Alignment (CKA) to an array of rPPG architectures of differing depths, demonstrating that shallower models do not learn the same representations as deeper models, and that after a certain depth, redundant layers are added without significantly increased functionality. An empirical study confirms these findings and shows how this method could be used to refine rPPG architectures.

Promoting Generalization in Cross-Dataset Remote Photoplethysmography

May 24, 2023

Abstract:Remote Photoplethysmography (rPPG), or the remote monitoring of a subject's heart rate using a camera, has seen a shift from handcrafted techniques to deep learning models. While current solutions offer substantial performance gains, we show that these models tend to learn a bias to pulse wave features inherent to the training dataset. We develop augmentations to mitigate this learned bias by expanding both the range and variability of heart rates that the model sees while training, resulting in improved model convergence when training and cross-dataset generalization at test time. Through a 3-way cross dataset analysis we demonstrate a reduction in mean absolute error from over 13 beats per minute to below 3 beats per minute. We compare our method with other recent rPPG systems, finding similar performance under a variety of evaluation parameters.

Full-Body Cardiovascular Sensing with Remote Photoplethysmography

Mar 16, 2023

Abstract:Remote photoplethysmography (rPPG) allows for noncontact monitoring of blood volume changes from a camera by detecting minor fluctuations in reflected light. Prior applications of rPPG focused on face videos. In this paper we explored the feasibility of rPPG from non-face body regions such as the arms, legs, and hands. We collected a new dataset titled Multi-Site Physiological Monitoring (MSPM), which will be released with this paper. The dataset consists of 90 frames per second video of exposed arms, legs, and face, along with 10 synchronized PPG recordings. We performed baseline heart rate estimation experiments from non-face regions with several state-of-the-art rPPG approaches, including chrominance-based (CHROM), plane-orthogonal-to-skin (POS) and RemotePulseNet (RPNet). To our knowledge, this is the first evaluation of the fidelity of rPPG signals simultaneously obtained from multiple regions of a human body. Our experiments showed that skin pixels from arms, legs, and hands are all potential sources of the blood volume pulse. The best-performing approach, POS, achieved a mean absolute error peaking at 7.11 beats per minute from non-facial body parts compared to 1.38 beats per minute from the face. Additionally, we performed experiments on pulse transit time (PTT) from both the contact PPG and rPPG signals. We found that remote PTT is possible with moderately high frame rate video when distal locations on the body are visible. These findings and the supporting dataset should facilitate new research on non-face rPPG and monitoring blood flow dynamics over the whole body with a camera.

Non-Contrastive Unsupervised Learning of Physiological Signals from Video

Mar 14, 2023

Abstract:Subtle periodic signals such as blood volume pulse and respiration can be extracted from RGB video, enabling remote health monitoring at low cost. Advancements in remote pulse estimation -- or remote photoplethysmography (rPPG) -- are currently driven by deep learning solutions. However, modern approaches are trained and evaluated on benchmark datasets with associated ground truth from contact-PPG sensors. We present the first non-contrastive unsupervised learning framework for signal regression to break free from the constraints of labelled video data. With minimal assumptions of periodicity and finite bandwidth, our approach is capable of discovering the blood volume pulse directly from unlabelled videos. We find that encouraging sparse power spectra within normal physiological bandlimits and variance over batches of power spectra is sufficient for learning visual features of periodic signals. We perform the first experiments utilizing unlabelled video data not specifically created for rPPG to train robust pulse rate estimators. Given the limited inductive biases and impressive empirical results, the approach is theoretically capable of discovering other periodic signals from video, enabling multiple physiological measurements without the need for ground truth signals. Codes to fully reproduce the experiments are made available along with the paper.

Hallucinated Heartbeats: Anomaly-Aware Remote Pulse Estimation

Mar 11, 2023

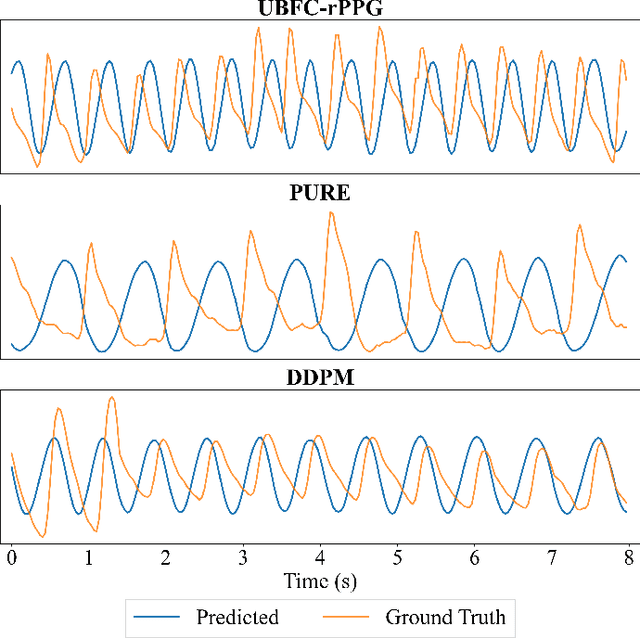

Abstract:Camera-based physiological monitoring, especially remote photoplethysmography (rPPG), is a promising tool for health diagnostics, and state-of-the-art pulse estimators have shown impressive performance on benchmark datasets. We argue that evaluations of modern solutions may be incomplete, as we uncover failure cases for videos without a live person, or in the presence of severe noise. We demonstrate that spatiotemporal deep learning models trained only with live samples "hallucinate" a genuine-shaped pulse on anomalous and noisy videos, which may have negative consequences when rPPG models are used by medical personnel. To address this, we offer: (a) An anomaly detection model, built on top of the predicted waveforms. We compare models trained in open-set (unknown abnormal predictions) and closed-set (abnormal predictions known when training) settings; (b) An anomaly-aware training regime that penalizes the model for predicting periodic signals from anomalous videos. Extensive experimentation with eight research datasets (rPPG-specific: DDPM, CDDPM, PURE, UBFC, ARPM; deep fakes: DFDC; face presentation attack detection: HKBU-MARs; rPPG outlier: KITTI) show better accuracy of anomaly detection for deep learning models incorporating the proposed training (75.8%), compared to models trained regularly (73.7%) and to hand-crafted rPPG methods (52-62%).

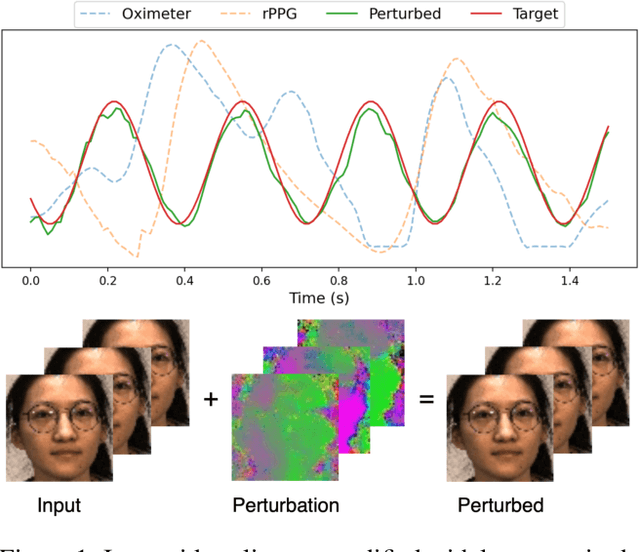

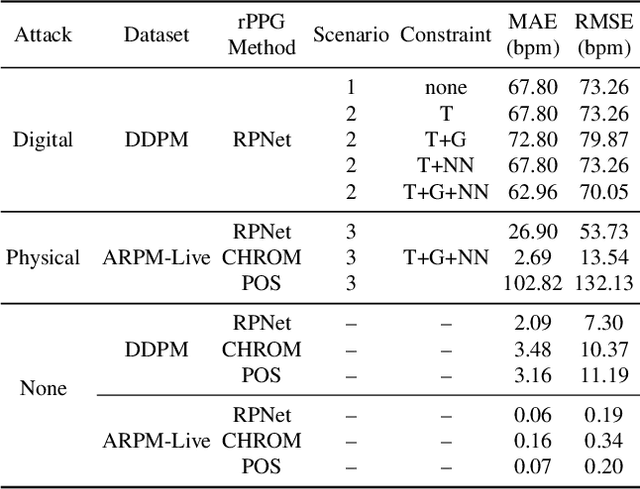

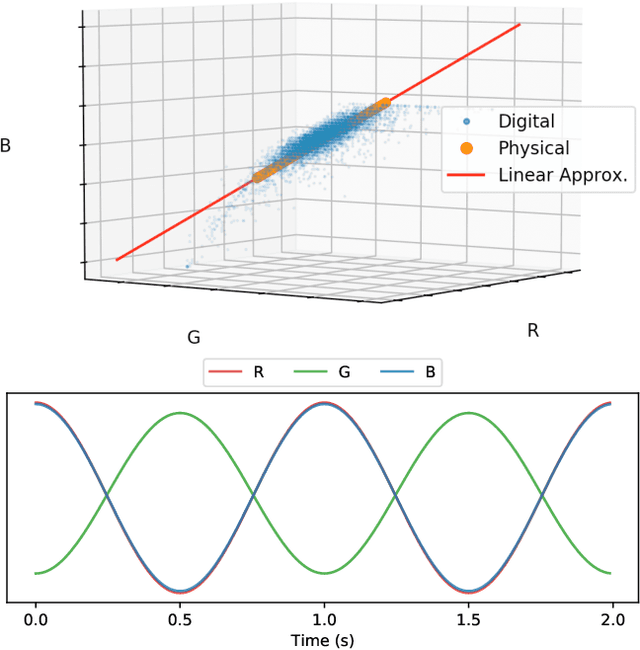

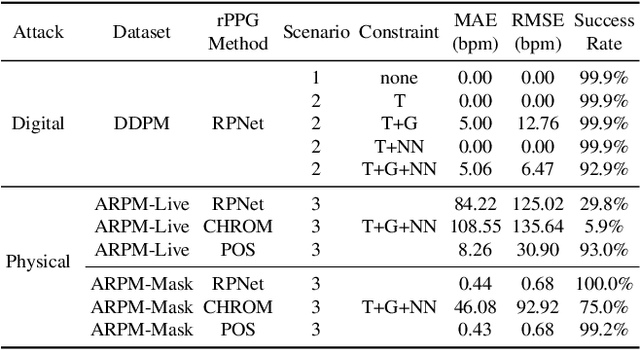

Digital and Physical-World Attacks on Remote Pulse Detection

Oct 21, 2021

Abstract:Remote photoplethysmography (rPPG) is a technique for estimating blood volume changes from reflected light without the need for a contact sensor. We present the first examples of presentation attacks in the digital and physical domains on rPPG from face video. Digital attacks are easily performed by adding imperceptible periodic noise to the input videos. Physical attacks are performed with illumination from visible spectrum LEDs placed in close proximity to the face, while still being difficult to perceive with the human eye. We also show that our attacks extend beyond medical applications, since the method can effectively generate a strong periodic pulse on 3D-printed face masks, which presents difficulties for pulse-based face presentation attack detection (PAD). The paper concludes with ideas for using this work to improve robustness of rPPG methods and pulse-based face PAD.

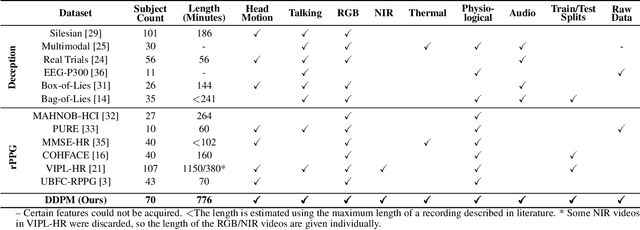

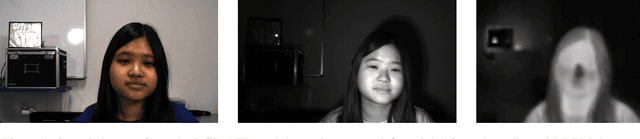

Deception Detection and Remote Physiological Monitoring: A Dataset and Baseline Experimental Results

Jun 11, 2021

Abstract:We present the Deception Detection and Physiological Monitoring (DDPM) dataset and initial baseline results on this dataset. Our application context is an interview scenario in which the interviewee attempts to deceive the interviewer on selected responses. The interviewee is recorded in RGB, near-infrared, and long-wave infrared, along with cardiac pulse, blood oxygenation, and audio. After collection, data were annotated for interviewer/interviewee, curated, ground-truthed, and organized into train / test parts for a set of canonical deception detection experiments. Baseline experiments found random accuracy for micro-expressions as an indicator of deception, but that saccades can give a statistically significant response. We also estimated subject heart rates from face videos (remotely) with a mean absolute error as low as 3.16 bpm. The database contains almost 13 hours of recordings of 70 subjects, and over 8 million visible-light, near-infrared, and thermal video frames, along with appropriate meta, audio and pulse oximeter data. To our knowledge, this is the only collection offering recordings of five modalities in an interview scenario that can be used in both deception detection and remote photoplethysmography research.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge