Nan Jia

An Empirical Framework for Evaluating Semantic Preservation Using Hugging Face

Dec 08, 2025

Abstract:As machine learning (ML) becomes an integral part of high-autonomy systems, it is critical to ensure the trustworthiness of learning-enabled software systems (LESS). Yet, the nondeterministic and run-time-defined semantics of ML complicate traditional software refactoring. We define semantic preservation in LESS as the property that optimizations of intelligent components do not alter the system's overall functional behavior. This paper introduces an empirical framework to evaluate semantic preservation in LESS by mining model evolution data from HuggingFace. We extract commit histories, $\textit{Model Cards}$, and performance metrics from a large number of models. To establish baselines, we conducted case studies in three domains, tracing performance changes across versions. Our analysis demonstrates how $\textit{semantic drift}$ can be detected via evaluation metrics across commits and reveals common refactoring patterns based on commit message analysis. Although API constraints limited the possibility of estimating a full-scale threshold, our pipeline offers a foundation for defining community-accepted boundaries for semantic preservation. Our contributions include: (1) a large-scale dataset of ML model evolution, curated from 1.7 million Hugging Face entries via a reproducible pipeline using the native HF hub API, (2) a practical pipeline for the evaluation of semantic preservation for a subset of 536 models and 4000+ metrics and (3) empirical case studies illustrating semantic drift in practice. Together, these contributions advance the foundations for more maintainable and trustworthy ML systems.

Safe Automated Refactoring for Efficient Migration of Imperative Deep Learning Programs to Graph Execution

Apr 07, 2025Abstract:Efficiency is essential to support responsiveness w.r.t. ever-growing datasets, especially for Deep Learning (DL) systems. DL frameworks have traditionally embraced deferred execution-style DL code -- supporting symbolic, graph-based Deep Neural Network (DNN) computation. While scalable, such development is error-prone, non-intuitive, and difficult to debug. Consequently, more natural, imperative DL frameworks encouraging eager execution have emerged at the expense of run-time performance. Though hybrid approaches aim for the "best of both worlds," using them effectively requires subtle considerations to make code amenable to safe, accurate, and efficient graph execution. We present an automated refactoring approach that assists developers in specifying whether their otherwise eagerly-executed imperative DL code could be reliably and efficiently executed as graphs while preserving semantics. The approach, based on a novel imperative tensor analysis, automatically determines when it is safe and potentially advantageous to migrate imperative DL code to graph execution. The approach is implemented as a PyDev Eclipse IDE plug-in that integrates the WALA Ariadne analysis framework and evaluated on 19 Python projects consisting of 132.05 KLOC. We found that 326 of 766 candidate functions (42.56%) were refactorable, and an average speedup of 2.16 on performance tests was observed. The results indicate that the approach is useful in optimizing imperative DL code to its full potential.

M-DEW: Extending Dynamic Ensemble Weighting to Handle Missing Values

Apr 30, 2024

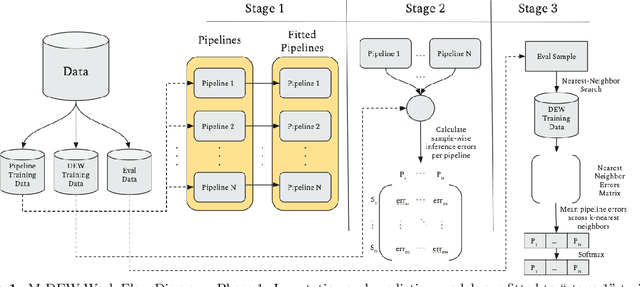

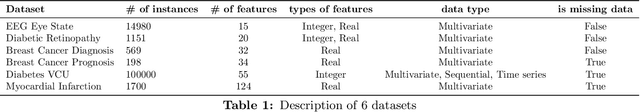

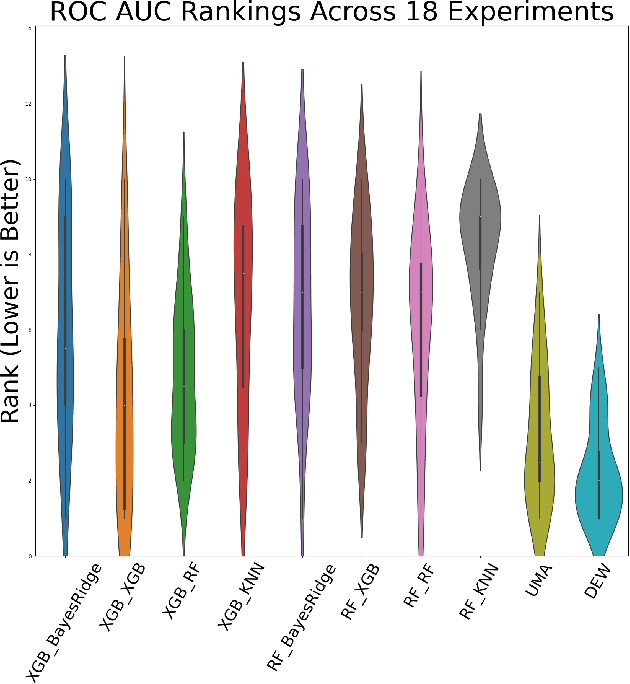

Abstract:Missing value imputation is a crucial preprocessing step for many machine learning problems. However, it is often considered as a separate subtask from downstream applications such as classification, regression, or clustering, and thus is not optimized together with them. We hypothesize that treating the imputation model and downstream task model together and optimizing over full pipelines will yield better results than treating them separately. Our work describes a novel AutoML technique for making downstream predictions with missing data that automatically handles preprocessing, model weighting, and selection during inference time, with minimal compute overhead. Specifically we develop M-DEW, a Dynamic missingness-aware Ensemble Weighting (DEW) approach, that constructs a set of two-stage imputation-prediction pipelines, trains each component separately, and dynamically calculates a set of pipeline weights for each sample during inference time. We thus extend previous work on dynamic ensemble weighting to handle missing data at the level of full imputation-prediction pipelines, improving performance and calibration on downstream machine learning tasks over standard model averaging techniques. M-DEW is shown to outperform the state-of-the-art in that it produces statistically significant reductions in model perplexity in 17 out of 18 experiments, while improving average precision in 13 out of 18 experiments.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge