Muhammed Fatih Balın

(LA)yer-neigh(BOR) Sampling: Defusing Neighborhood Explosion in GNNs

Oct 24, 2022

Abstract: Graph Neural Networks have recently received significant attention; however, training them at large scale remains a challenge. Minibatch training coupled with sampling is used to alleviate this challenge. Even so, existing approaches either suffer from the neighborhood explosion phenomenon or do not perform well. To deal with these issues, we propose a new sampling algorithm called LAyer-neighBOR sampling (LABOR). It is designed to be a direct replacement for Neighbor Sampling with the same fanout hyperparameter while sampling far fewer vertices, without sacrificing quality. By design, the variance of the estimator of each vertex matches Neighbor Sampling from the point of view of a single vertex. In our experiments, we demonstrate the superiority of our approach in model convergence behaviour over Neighbor Sampling and the other Layer Sampling approaches under the same limited vertex sampling budget constraints.
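To make the neighborhood explosion mentioned in the abstract concrete, the following is a minimal sketch of plain Neighbor Sampling with a fixed fanout on a toy random graph. It is not the LABOR algorithm and is not taken from the paper; the helper names `make_random_graph` and `neighbor_sample`, the graph size, and the fanout value are all assumptions chosen for illustration. It only counts how many unique vertices a minibatch touches as the number of layers grows.

```python
import random


def make_random_graph(num_vertices, avg_degree, seed=0):
    """Build a toy directed graph as adjacency lists (hypothetical test data)."""
    rng = random.Random(seed)
    return [
        [rng.randrange(num_vertices) for _ in range(avg_degree)]
        for _ in range(num_vertices)
    ]


def neighbor_sample(adj, seeds, fanout, num_layers, seed=0):
    """Return the number of unique vertices required after each layer.

    Every vertex in the current frontier independently samples up to
    `fanout` of its neighbors. This is plain Neighbor Sampling, shown
    only as a baseline; it is not LABOR.
    """
    rng = random.Random(seed)
    frontier = set(seeds)
    sizes = [len(frontier)]
    for _ in range(num_layers):
        # A vertex also needs its own features in the next layer.
        next_frontier = set(frontier)
        for v in frontier:
            neighbors = adj[v]
            k = min(fanout, len(neighbors))
            next_frontier.update(rng.sample(neighbors, k))
        frontier = next_frontier
        sizes.append(len(frontier))
    return sizes


# Assumed toy setting: 100k vertices, degree 20, 64 seed vertices, fanout 10.
graph = make_random_graph(num_vertices=100_000, avg_degree=20)
sizes = neighbor_sample(graph, seeds=range(64), fanout=10, num_layers=3)
print(sizes)  # unique vertices needed after 0, 1, 2, 3 layers
```

In this sketch each layer can multiply the frontier by up to `fanout + 1`, so the vertex count grows roughly geometrically until it saturates at the graph size. That growth is the cost a sampler with a limited vertex budget has to avoid.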

MG-GCN: Scalable Multi-GPU GCN Training Framework

Oct 17, 2021

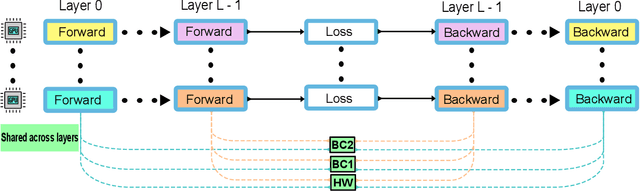

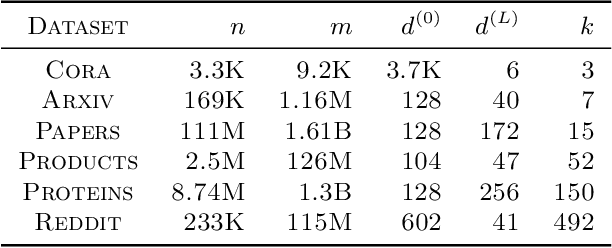

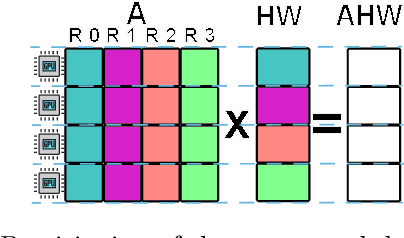

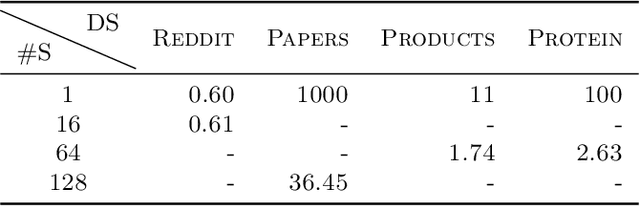

Abstract: Full batch training of Graph Convolutional Network (GCN) models is not feasible on a single GPU for large graphs containing tens of millions of vertices or more. Recent work has shown that, for the graphs used in the machine learning community, communication becomes a bottleneck and scaling is blocked outside of the single machine regime. Thus, we propose MG-GCN, a multi-GPU GCN training framework taking advantage of the high-speed communication links between the GPUs present in multi-GPU systems. MG-GCN employs multiple High-Performance Computing optimizations, including efficient re-use of memory buffers to reduce the memory footprint of training GNN models, as well as communication and computation overlap. These optimizations enable execution on larger datasets that generally do not fit into the memory of a single GPU in state-of-the-art implementations. Furthermore, they contribute to achieving a superior speedup compared to the state-of-the-art. For example, MG-GCN achieves super-linear speedup with respect to DGL on the Reddit graph on both DGX-1 (V100) and DGX-A100.
