Moritz Kampelmühler

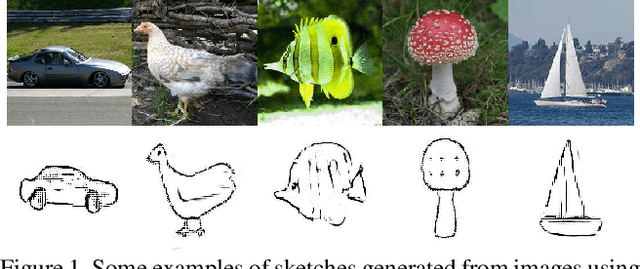

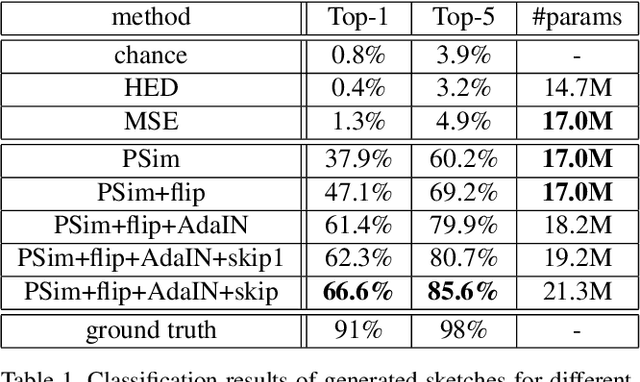

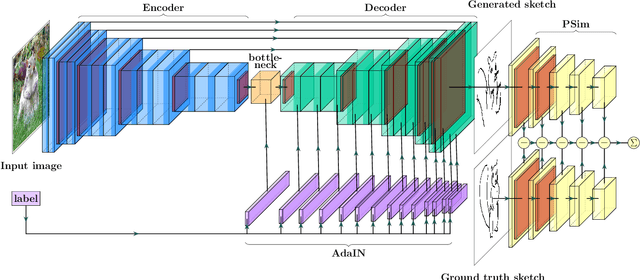

Synthesizing human-like sketches from natural images using a conditional convolutional decoder

Mar 16, 2020

Abstract: Humans are able to precisely communicate diverse concepts by employing sketches, a highly reduced and abstract shape-based representation of visual content. We propose, for the first time, a fully convolutional end-to-end architecture that is able to synthesize human-like sketches of objects in natural images with potentially cluttered backgrounds. To enable an architecture to learn this highly abstract mapping, we employ the following key components: (1) a fully convolutional encoder-decoder structure, (2) a perceptual similarity loss function operating in an abstract feature space, and (3) conditioning of the decoder on the label of the object to be sketched. Given the combination of these architectural concepts, we can train our structure in an end-to-end supervised fashion on a collection of sketch-image pairs. The sketches generated by our architecture can be classified with 85.6% Top-5 accuracy, and we verify their visual quality via a user study. We find that deep features used as a perceptual similarity metric enable image translation across large domain gaps, and our findings further show that convolutional neural networks trained on image classification tasks implicitly learn to encode shape information. Code is available at https://github.com/kampelmuehler/synthesizing_human_like_sketches
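The two training ingredients named in the abstract, a perceptual similarity loss computed in feature space and conditioning the decoder on the object label, can be illustrated with a toy sketch. This is not the authors' code (see the linked repository for that). Assumptions: 1-D lists stand in for images, two hand-written fixed layers (`toy_edge_layer`, `toy_pool_layer`, both hypothetical) stand in for a frozen pretrained CNN, and conditioning is shown as simple concatenation of a one-hot label to the latent code, which is an assumed scheme for illustration only.

```python
from typing import Callable, List, Sequence

Vector = List[float]
Layer = Callable[[Vector], Vector]


def one_hot(label: int, num_classes: int) -> Vector:
    """Encode a class index as a one-hot vector."""
    return [1.0 if i == label else 0.0 for i in range(num_classes)]


def condition_on_label(latent: Vector, label: int, num_classes: int) -> Vector:
    """Append the one-hot object label to the encoder output before decoding.

    Concatenation is an ASSUMED conditioning scheme, used here only for illustration.
    """
    return latent + one_hot(label, num_classes)


def relu(v: Vector) -> Vector:
    return [max(0.0, a) for a in v]


def toy_edge_layer(v: Vector) -> Vector:
    """Hypothetical fixed feature layer: rectified neighbour differences (edge-like)."""
    return relu([v[i + 1] - v[i] for i in range(len(v) - 1)])


def toy_pool_layer(v: Vector) -> Vector:
    """Hypothetical fixed feature layer: average pooling with window and stride 2."""
    return [(v[i] + v[i + 1]) / 2.0 for i in range(0, len(v) - 1, 2)]


def mse(a: Vector, b: Vector) -> float:
    """Mean squared error between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def perceptual_loss(pred: Vector, target: Vector, layers: Sequence[Layer]) -> float:
    """Sum of per-layer MSEs between the features of pred and target.

    Both inputs pass through the same frozen stack of layers; the distance is
    measured on intermediate activations instead of raw pixel values.
    """
    loss = 0.0
    x, y = pred, target
    for layer in layers:
        x, y = layer(x), layer(y)
        loss += mse(x, y)
    return loss


if __name__ == "__main__":
    layers = [toy_edge_layer, toy_pool_layer]
    target = [0.0, 1.0, 3.0, 2.0, 5.0, 4.0]
    shifted = [v + 2.0 for v in target]  # same "shape", uniformly brighter
    print(mse(shifted, target))  # pixel-space error: 4.0
    print(perceptual_loss(shifted, target, layers))  # feature-space error: 0.0
    print(condition_on_label([0.5, 0.2], label=1, num_classes=3))
```

The usage lines show the design intent in miniature: a pixel loss penalizes a uniform brightness shift, while these edge-like features ignore it and only respond to differences in shape. In the actual architecture the features would come from a deep network trained on image classification, not from hand-written layers.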

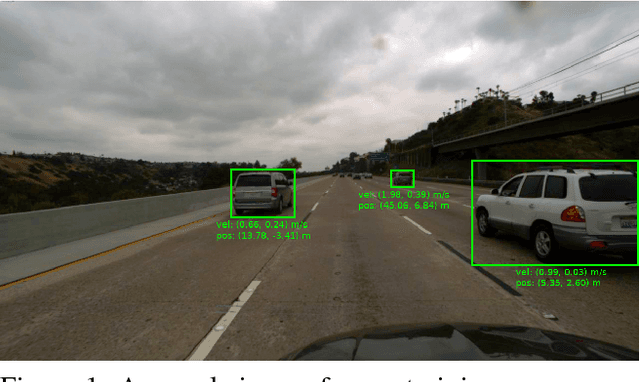

Camera-based vehicle velocity estimation from monocular video

Feb 20, 2018

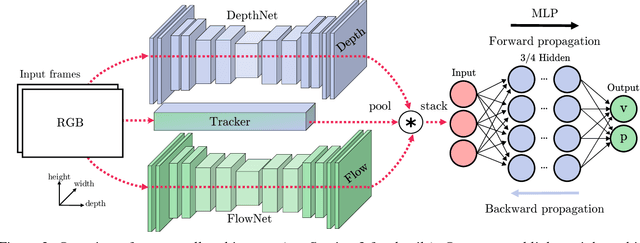

Abstract: This paper documents the winning entry at the CVPR 2017 vehicle velocity estimation challenge. Velocity estimation is an emerging task in autonomous driving that has not yet been thoroughly explored. The goal is to estimate the relative velocity of a specific vehicle from a sequence of images. In this paper, we present a lightweight approach for directly regressing vehicle velocities from their trajectories using a multilayer perceptron. Another contribution is an exploratory study of features for monocular vehicle velocity estimation. We find that lightweight trajectory-based features outperform depth and motion cues extracted from deep ConvNets, especially for far-distance predictions, where current disparity and optical flow estimators are challenged significantly. Our lightweight approach runs in real time on a single CPU and outperforms all competing entries in the velocity estimation challenge. On the test set, we report an average error of 1.12 m/s, which is comparable to a (ground-truth) system that combines LiDAR and radar techniques to achieve an error of around 0.71 m/s.
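The core idea of the abstract, regressing velocity directly from a vehicle's trajectory with a multilayer perceptron, can be sketched as below. This is a hypothetical illustration, not the winning entry's implementation. Assumptions: the trajectory is given as per-frame bounding boxes `(x, y, width, height)`, the descriptor (`trajectory_features`, hypothetical) is frame-to-frame box changes plus the last box, the output is assumed to be a 2-D relative velocity, and the weights are random placeholders that would in practice be learned from labelled tracks.

```python
import math
import random
from typing import List, Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x, y, width, height) in pixels
Layer = Tuple[List[List[float]], List[float]]  # (weights, biases)


def trajectory_features(boxes: Sequence[Box]) -> List[float]:
    """Hypothetical trajectory descriptor for one tracked vehicle.

    Frame-to-frame changes of the bounding box, followed by the box in the last frame.
    """
    feats: List[float] = []
    for prev, cur in zip(boxes, boxes[1:]):
        feats.extend(c - p for p, c in zip(prev, cur))
    feats.extend(boxes[-1])
    return feats


def init_mlp(sizes: Sequence[int], seed: int = 0) -> List[Layer]:
    """Randomly initialised fully connected layers (untrained placeholders)."""
    rng = random.Random(seed)
    layers: List[Layer] = []
    for n_in, n_out in zip(sizes, sizes[1:]):
        bound = 1.0 / math.sqrt(n_in)
        weights = [[rng.uniform(-bound, bound) for _ in range(n_in)] for _ in range(n_out)]
        layers.append((weights, [0.0] * n_out))
    return layers


def mlp_forward(x: List[float], layers: Sequence[Layer]) -> List[float]:
    """Affine layers with ReLU in between; the output layer stays linear for regression."""
    for i, (weights, biases) in enumerate(layers):
        x = [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weights, biases)]
        if i < len(layers) - 1:
            x = [max(0.0, v) for v in x]
    return x


if __name__ == "__main__":
    # Three frames of a vehicle whose box grows as it approaches (made-up numbers).
    track = [(600.0, 300.0, 80.0, 60.0), (598.0, 301.0, 84.0, 63.0), (596.0, 302.0, 88.0, 66.0)]
    feats = trajectory_features(track)
    mlp = init_mlp([len(feats), 16, 2])  # 2 outputs: assumed 2-D relative velocity
    print(len(feats), mlp_forward(feats, mlp))
```

Such a model needs only a few small matrix-vector products per tracked vehicle, which is consistent in spirit with the abstract's point that a trajectory-based regressor can run in real time on a single CPU.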
