Morgan Harvey

The Barriers to Online Clothing Websites for Visually Impaired People: An Interview and Observation Approach to Understanding Needs

May 19, 2023

Abstract: Visually impaired (VI) people often face challenges when performing everyday tasks and identify shopping for clothes as one of the most challenging. Many engage in online shopping, which eliminates some challenges of physical shopping. However, clothes shopping online suffers from many other limitations and barriers. More research is needed to address these challenges, and extant works often base their findings on interviews alone, providing only subjective, recall-biased information. We conducted two complementary studies using both observational and interview approaches to fill a gap in understanding about VI people's behaviour when selecting and purchasing clothes online. Our findings show that shopping websites suffer from inaccurate, misleading, and contradictory clothing descriptions, and that VI people mainly rely on (unreliable) search tools and check product descriptions by reviewing customer comments. Our findings also indicate that VI people are hesitant to accept assistance from automated systems, but that trust in such systems could be improved if researchers can develop systems that better accommodate users' needs and preferences.

An Analysis of Classification Approaches for Hit Song Prediction using Engineered Metadata Features with Lyrics and Audio Features

Jan 31, 2023

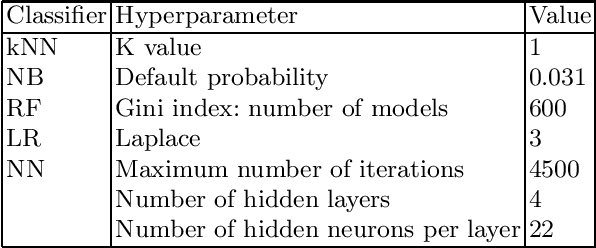

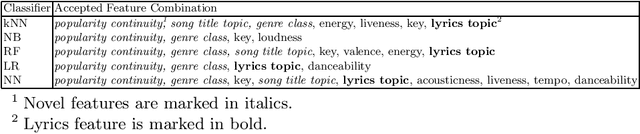

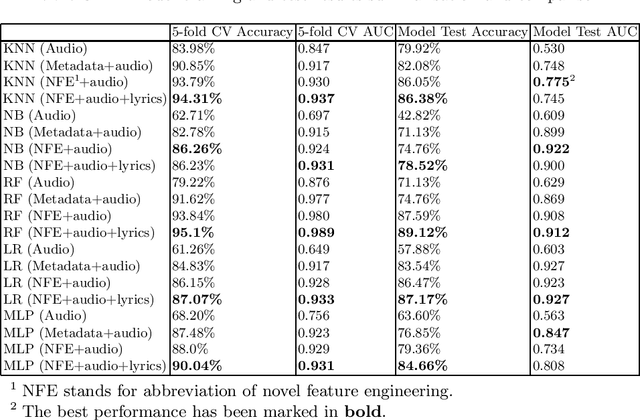

Abstract: Hit song prediction, one of the emerging fields in music information retrieval (MIR), remains a considerable challenge. Being able to understand what makes a given song a hit is clearly beneficial to the whole music industry. Previous approaches to hit song prediction have focused on using audio features of a record. This study aims to improve the prediction of top 10 hits among Billboard Hot 100 songs using additional metadata, including song audio features provided by Spotify, song lyrics, and novel metadata-based features (title topic, popularity continuity and genre class). Five machine learning approaches are applied: k-nearest neighbours, Naive Bayes, Random Forest, Logistic Regression and Multilayer Perceptron. Our results show that Random Forest (RF) and Logistic Regression (LR) with all features (including novel features, song audio features and lyrics features) outperform the other models, achieving 89.1% and 87.2% accuracy, and 0.91 and 0.93 AUC, respectively. Our findings also demonstrate the utility of our novel music metadata features, which contributed most to the models' discriminative performance.
