Momir Adžemović

Learning Association via Track-Detection Matching for Multi-Object Tracking

Dec 26, 2025

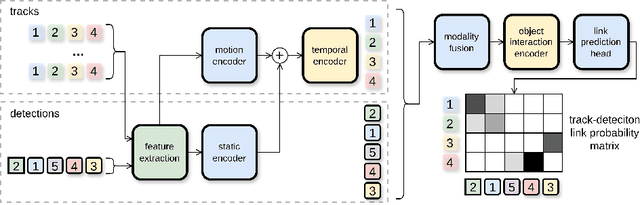

Abstract:Multi-object tracking aims to maintain object identities over time by associating detections across video frames. Two dominant paradigms exist in literature: tracking-by-detection methods, which are computationally efficient but rely on handcrafted association heuristics, and end-to-end approaches, which learn association from data at the cost of higher computational complexity. We propose Track-Detection Link Prediction (TDLP), a tracking-by-detection method that performs per-frame association via link prediction between tracks and detections, i.e., by predicting the correct continuation of each track at every frame. TDLP is architecturally designed primarily for geometric features such as bounding boxes, while optionally incorporating additional cues, including pose and appearance. Unlike heuristic-based methods, TDLP learns association directly from data without handcrafted rules, while remaining modular and computationally efficient compared to end-to-end trackers. Extensive experiments on multiple benchmarks demonstrate that TDLP consistently surpasses state-of-the-art performance across both tracking-by-detection and end-to-end methods. Finally, we provide a detailed analysis comparing link prediction with metric learning-based association and show that link prediction is more effective, particularly when handling heterogeneous features such as detection bounding boxes. Our code is available at \href{https://github.com/Robotmurlock/TDLP}{https://github.com/Robotmurlock/TDLP}.

Deep Learning-Based Multi-Object Tracking: A Comprehensive Survey from Foundations to State-of-the-Art

Jun 16, 2025

Abstract:Multi-object tracking (MOT) is a core task in computer vision that involves detecting objects in video frames and associating them across time. The rise of deep learning has significantly advanced MOT, particularly within the tracking-by-detection paradigm, which remains the dominant approach. Advancements in modern deep learning-based methods accelerated in 2022 with the introduction of ByteTrack for tracking-by-detection and MOTR for end-to-end tracking. Our survey provides an in-depth analysis of deep learning-based MOT methods, systematically categorizing tracking-by-detection approaches into five groups: joint detection and embedding, heuristic-based, motion-based, affinity learning, and offline methods. In addition, we examine end-to-end tracking methods and compare them with existing alternative approaches. We evaluate the performance of recent trackers across multiple benchmarks and specifically assess their generality by comparing results across different domains. Our findings indicate that heuristic-based methods achieve state-of-the-art results on densely populated datasets with linear object motion, while deep learning-based association methods, in both tracking-by-detection and end-to-end approaches, excel in scenarios with complex motion patterns.

Engineering an Efficient Object Tracker for Non-Linear Motion

Jun 30, 2024Abstract:The goal of multi-object tracking is to detect and track all objects in a scene while maintaining unique identifiers for each, by associating their bounding boxes across video frames. This association relies on matching motion and appearance patterns of detected objects. This task is especially hard in case of scenarios involving dynamic and non-linear motion patterns. In this paper, we introduce DeepMoveSORT, a novel, carefully engineered multi-object tracker designed specifically for such scenarios. In addition to standard methods of appearance-based association, we improve motion-based association by employing deep learnable filters (instead of the most commonly used Kalman filter) and a rich set of newly proposed heuristics. Our improvements to motion-based association methods are severalfold. First, we propose a new transformer-based filter architecture, TransFilter, which uses an object's motion history for both motion prediction and noise filtering. We further enhance the filter's performance by careful handling of its motion history and accounting for camera motion. Second, we propose a set of heuristics that exploit cues from the position, shape, and confidence of detected bounding boxes to improve association performance. Our experimental evaluation demonstrates that DeepMoveSORT outperforms existing trackers in scenarios featuring non-linear motion, surpassing state-of-the-art results on three such datasets. We also perform a thorough ablation study to evaluate the contributions of different tracker components which we proposed. Based on our study, we conclude that using a learnable filter instead of the Kalman filter, along with appearance-based association is key to achieving strong general tracking performance.

Beyond Kalman Filters: Deep Learning-Based Filters for Improved Object Tracking

Feb 15, 2024Abstract:Traditional tracking-by-detection systems typically employ Kalman filters (KF) for state estimation. However, the KF requires domain-specific design choices and it is ill-suited to handling non-linear motion patterns. To address these limitations, we propose two innovative data-driven filtering methods. Our first method employs a Bayesian filter with a trainable motion model to predict an object's future location and combines its predictions with observations gained from an object detector to enhance bounding box prediction accuracy. Moreover, it dispenses with most domain-specific design choices characteristic of the KF. The second method, an end-to-end trainable filter, goes a step further by learning to correct detector errors, further minimizing the need for domain expertise. Additionally, we introduce a range of motion model architectures based on Recurrent Neural Networks, Neural Ordinary Differential Equations, and Conditional Neural Processes, that are combined with the proposed filtering methods. Our extensive evaluation across multiple datasets demonstrates that our proposed filters outperform the traditional KF in object tracking, especially in the case of non-linear motion patterns -- the use case our filters are best suited to. We also conduct noise robustness analysis of our filters with convincing positive results. We further propose a new cost function for associating observations with tracks. Our tracker, which incorporates this new association cost with our proposed filters, outperforms the conventional SORT method and other motion-based trackers in multi-object tracking according to multiple metrics on motion-rich DanceTrack and SportsMOT datasets.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge