Mohammad Faisal Amir

Edge-Host Partitioning of Deep Neural Networks with Feature Space Encoding for Resource-Constrained Internet-of-Things Platforms

Feb 11, 2018

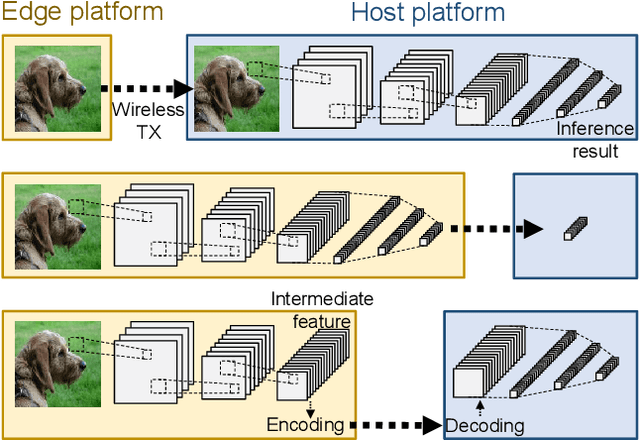

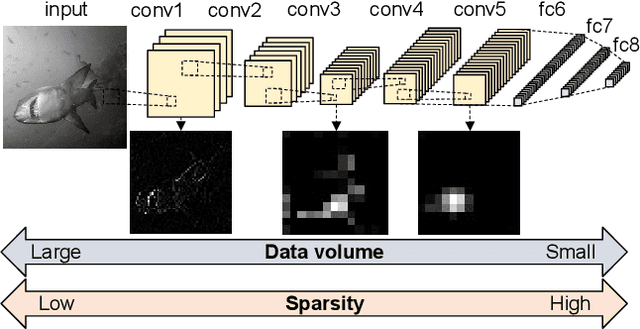

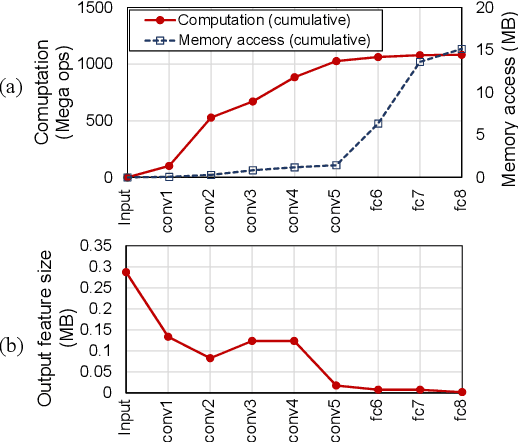

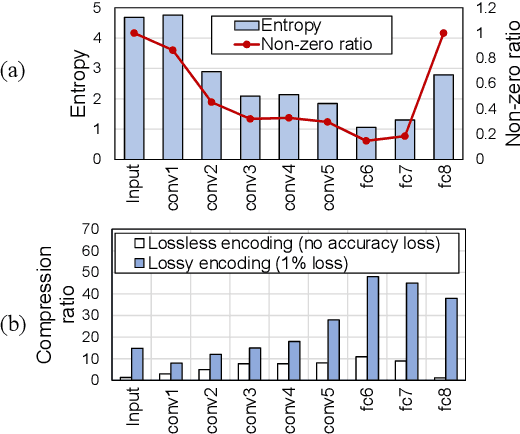

Abstract: This paper proposes partitioning the inference task of a deep neural network (DNN) between an edge platform and a host platform in an IoT environment. We model a DNN as an encoding pipeline and propose transmitting the output feature space of an intermediate layer to the host. Lossless or lossy encoding of that feature space is proposed to raise the maximum input rate the edge platform can support, reduce its energy consumption, or both. Simulation results show that partitioning a DNN at the end of the convolutional (feature extraction) layers, coupled with feature space encoding, significantly improves energy efficiency and throughput over baseline configurations that perform the entire inference at the edge or at the host.
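
The edge-side encoding step described in the abstract can be illustrated with a minimal sketch. Everything here is an assumption made for illustration, not the paper's method: the function names `edge_encode` and `host_decode` are hypothetical, 8-bit uniform quantization stands in for the lossy stage, `zlib` stands in for the lossless stage, and the toy feature vector assumes a sparse, ReLU-like intermediate output.

```python
import zlib

def quantize(features):
    """Lossy stage (assumed): uniform 8-bit quantization of a flat feature vector."""
    lo, hi = min(features), max(features)
    scale = (hi - lo) / 255 or 1.0  # avoid a zero step when all values are equal
    codes = bytes(round((f - lo) / scale) for f in features)
    return codes, lo, scale

def dequantize(codes, lo, scale):
    """Invert the quantizer up to a rounding error of at most scale / 2."""
    return [lo + c * scale for c in codes]

def edge_encode(features):
    """Edge side: quantize, then losslessly compress before transmission."""
    codes, lo, scale = quantize(features)
    return zlib.compress(codes), lo, scale

def host_decode(payload, lo, scale):
    """Host side: decompress and dequantize, then feed the remaining layers."""
    return dequantize(zlib.decompress(payload), lo, scale)

# Toy intermediate feature vector: mostly zeros, as a ReLU output might be (assumption).
features = [0.0] * 900 + [i / 100 for i in range(100)]

payload, lo, scale = edge_encode(features)
restored = host_decode(payload, lo, scale)

raw_bytes = 4 * len(features)  # size if sent as 32-bit floats
max_error = max(abs(a - b) for a, b in zip(features, restored))
print(f"raw: {raw_bytes} B, encoded: {len(payload)} B, max error: {max_error:.5f}")
```

In this sketch the host would pass `restored` to the layers after the partition point. The trade-off the abstract points to is visible here: a smaller payload costs less transmission energy at the edge, while the quantization step bounds how much the features are distorted.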
