Mohammad Aghapour

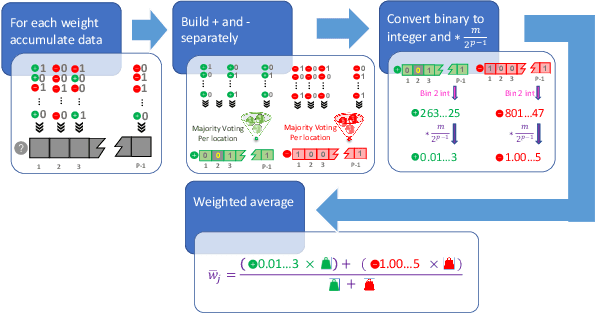

Two-Bit Aggregation for Communication Efficient and Differentially Private Federated Learning

Oct 06, 2021

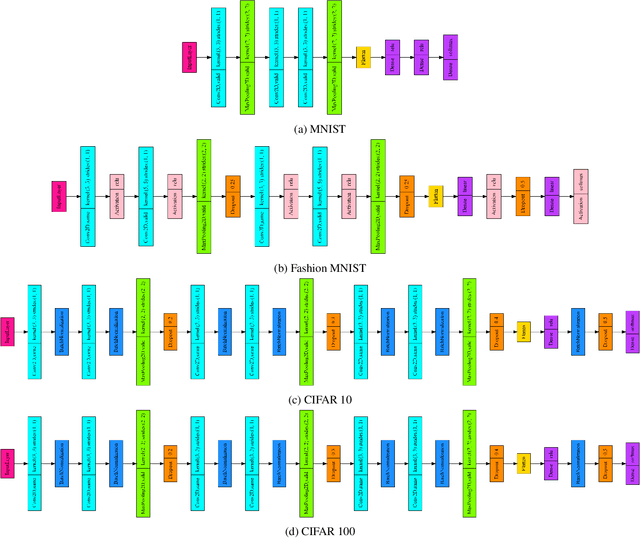

Abstract: In federated learning (FL), a machine learning model is trained on multiple nodes in a decentralized manner, while the data stays local and is not shared with other nodes. However, FL requires the nodes to send information on the model parameters to a central server for aggregation. This information may reveal details about each node's local data, raising privacy concerns. Furthermore, the repeated uplink transmissions from the nodes to the server may cause communication overhead and network congestion. To address these two challenges, this paper proposes a novel two-bit aggregation algorithm with guaranteed differential privacy and reduced uplink communication overhead. Extensive experiments demonstrate that the proposed aggregation algorithm can achieve the same performance as state-of-the-art approaches on datasets such as MNIST, Fashion MNIST, CIFAR-10, and CIFAR-100, while ensuring differential privacy and improving communication efficiency.
